DOI: https://doi.org/10.1016/j.aiia.2024.07.001

Publication date: 2024-07-17

Comparing YOLOv8 and Mask R-CNN for Instance Segmentation in Complex Orchard Environments

Abstract

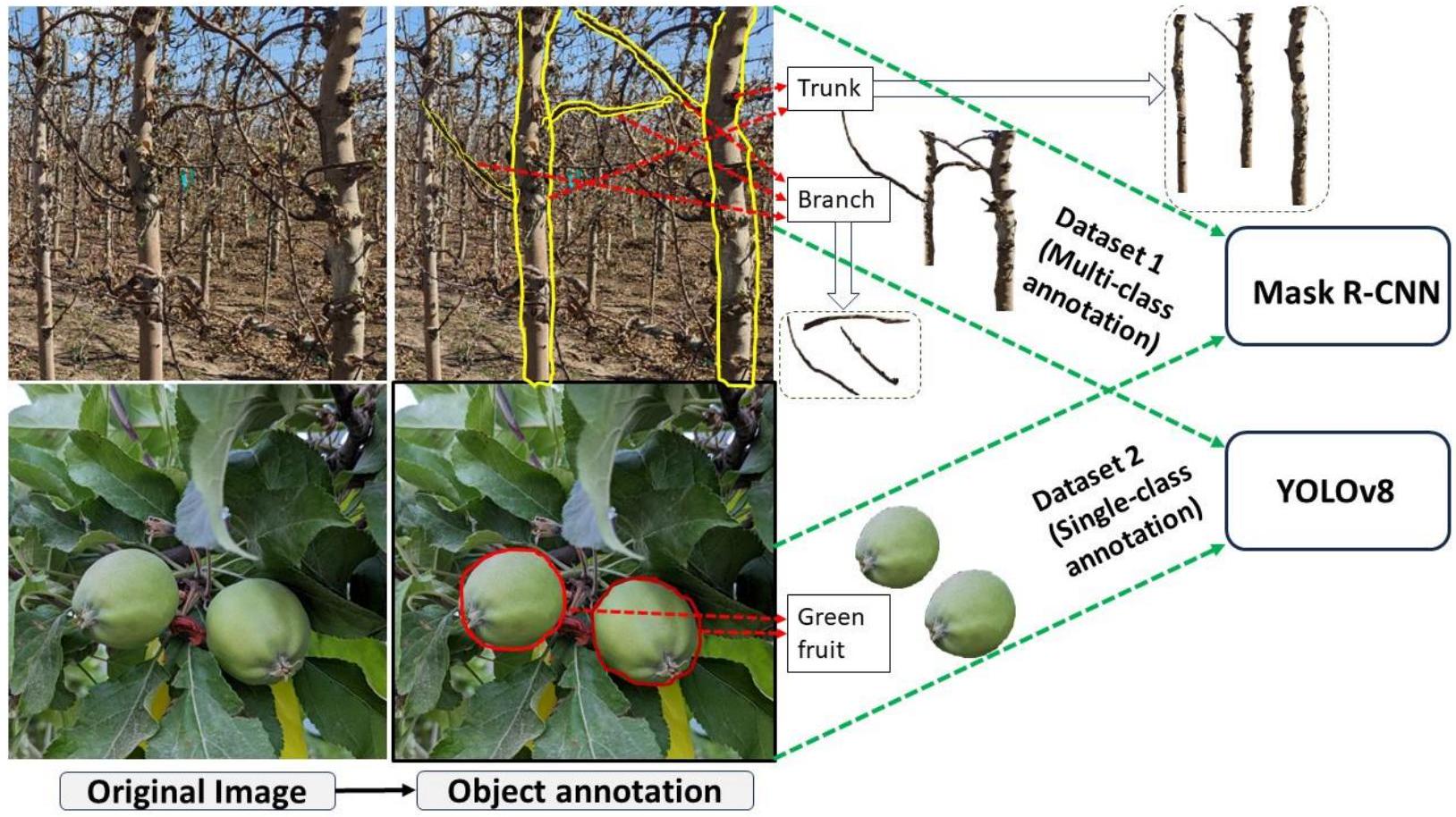

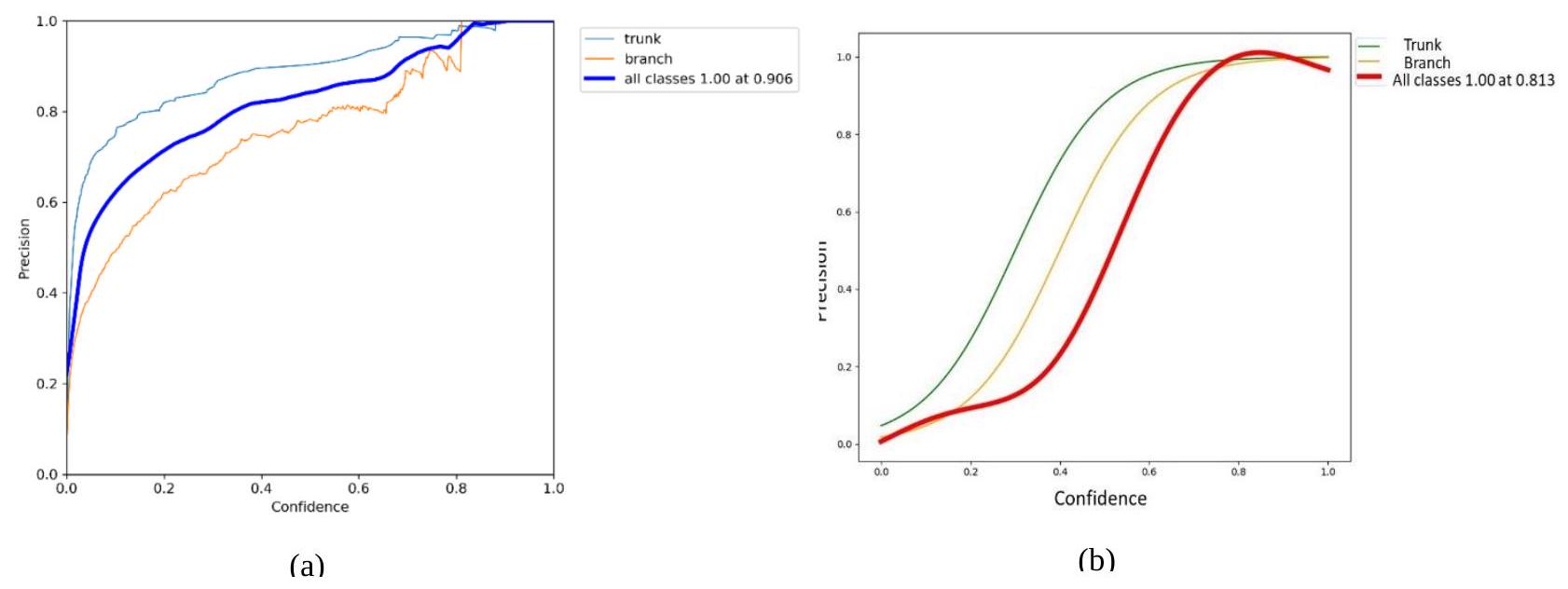

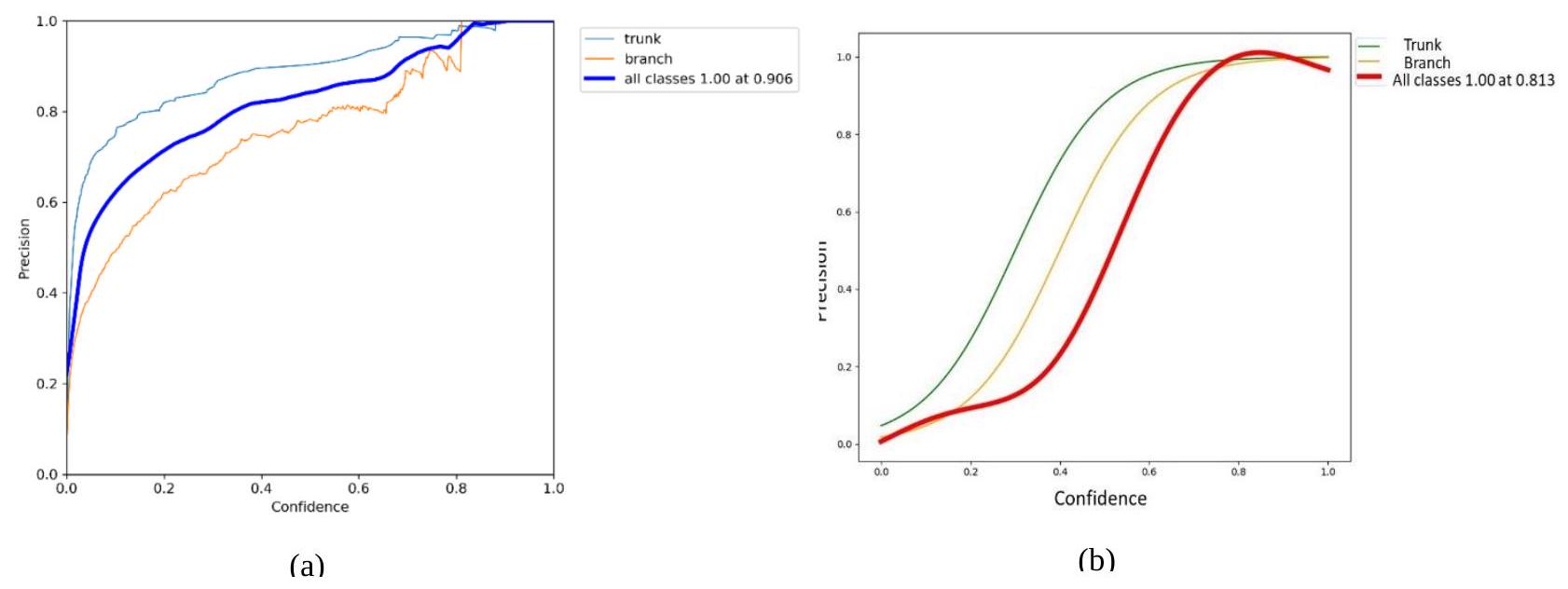

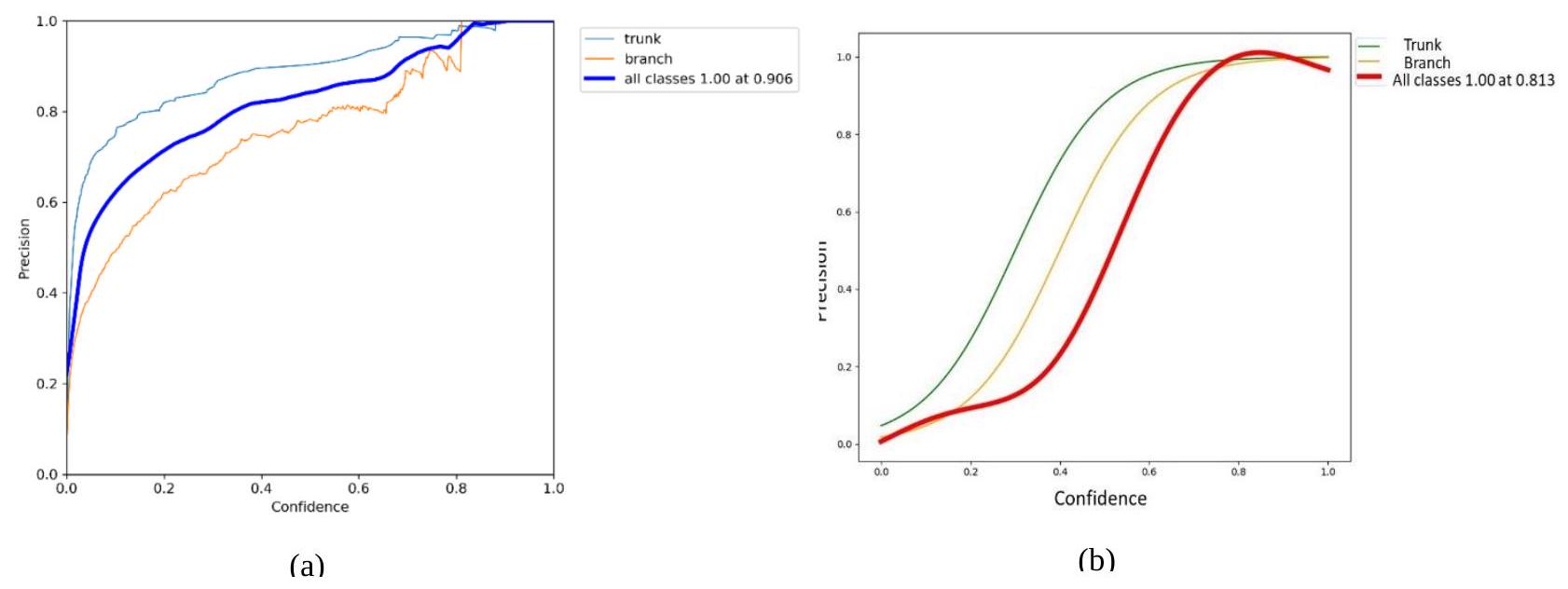

Instance segmentation, an important image-processing operation for automation in agriculture, is used to precisely delineate individual objects of interest within images, providing foundational information for various automated or robotic tasks such as selective harvesting and precision pruning. This study compares two machine learning models, the one-stage YOLOv8 and the two-stage Mask R-CNN, for instance segmentation under varying orchard conditions across two datasets. Dataset 1, collected in the dormant season, contains images of dormant apple trees and was used to train multi-object segmentation models that delineate tree branches and trunks. Dataset 2, collected in the early growing season, contains images of apple trees with green foliage and immature (green) apples (also called fruitlets) and was used to train single-object segmentation models that delineate only the immature green apples. The results showed that YOLOv8 outperformed Mask R-CNN, achieving good precision and near-perfect recall on both datasets at a confidence threshold of 0.5. Specifically, on Dataset 1, YOLOv8 achieved a precision of 0.90 and a recall of 0.95 across all classes, whereas Mask R-CNN achieved a precision of 0.81 and a recall of 0.81 on the same dataset. On Dataset 2, YOLOv8 achieved a precision of 0.93 and a recall of 0.97, while Mask R-CNN, in this single-class scenario, achieved a precision of 0.85 and a recall of 0.88. In addition, YOLOv8's inference times were 10.9 ms for multi-class segmentation (Dataset 1) and 7.8 ms for single-class segmentation (Dataset 2), compared with 15.6 ms and 12.8 ms, respectively, for Mask R-CNN. These findings show YOLOv8's superior accuracy and efficiency in machine learning applications compared with two-stage models, specifically Mask R-CNN, and suggest its suitability for developing smart, automated orchard operations, particularly where real-time performance is required, as in robotic harvesting and robotic thinning of immature green fruit.

1. Introduction

processing time but also improves the models' ability to adapt to new, unseen scenarios, a key advantage for generalizable and scalable agricultural applications. This capacity for dynamic learning is a significant improvement over traditional methods, which are static and constrained by their algorithmic rigidity.

Segmentation of canopy parts in dormant vineyards has been studied extensively using various deep learning techniques (e.g., [61], [100]). Other models have since emerged, such as ViNet [62], which offers a deep learning solution for estimating grapevine structure. Further developments include the combination of deep learning and geometric constraints for the segmentation and 3D reconstruction of obscured branches [63], and the use of space colonization algorithms for dormant pruning of jujube trees [59]. Other recent studies in this area include a deep learning-based sensing system (called SPGnet) for jujube trees by Baojian et al. [58], branch detection in apple trees using R-CNN by Zhang et al., 2018 [64], and the tiny Mask R-CNN of Lin et al. for reconstructing guava branches [65]. In addition, Aguiar et al. [66] explored trunk segmentation using a deep learning-based semantic segmentation approach with a Single Shot MultiBox Detector (SSD). Compared with the performance metrics reported by these recent and novel methods in the literature, the YOLOv8 model presented in this study performed better at segmenting tree trunks in terms of precision (0.95), recall (0.97), and

| References | Year | DL model | Objectives |
|---|---|---|---|
| [69], [70] | 2021 | YOLO-V4 | Apple detection in complex scenes |
| [71], [72] | 2021 | Mask R-CNN | Deep learning-based apple detection |
| [71], [73] | 2021 | YOLO-V3 | Green fruit detection (apple, mango) |
| [73], [74] | 2021 | YOLO-V5 | Fruitlet detection for fruit thinning |
| [75] | 2022 | Mask R-CNN | Branch identification and junction-point location in apple trees; trunk identification and segmentation |
| [76], [77] | 2022 | YOLO-V4 | Apple detection, counting, and trunk tracking in modern orchards |
| [78] | 2022 | YOLO-V4 | Detection of mature/immature apples on dense-foliage tree architectures for early crop-load estimation |
| [79] | 2022 | YOLO-V5 | Visual identification of apple growth forms in the orchard |
| [80] | 2022 | YOLO-V5 | Tree trunk and obstacle detection in apple orchards |
| [81], [82] | 2022 | Mask R-CNN | Segmentation of ripe and green apples in orchards |
| [83] | 2022 | Mask R-CNN | Tree and tree-crown segmentation in orchards |
| [84] | 2023 | YOLO-V3 | Apple fruit quality detection |
| [85] | 2023 | YOLO-V7 | Detection and counting of small-target apples |
| [56], [82] | 2023 | Mask R-CNN | Green apple segmentation |

- To compare the performance of YOLOv8 and Mask R-CNN in single-class instance segmentation, specifically of green apples (fruitlets), in images collected from variable orchard environments in the early growing season; and

- To evaluate the capabilities of these two models in multi-class instance segmentation, specifically of the primary branches and trunks of apple trees, in images collected from a typical apple orchard during the dormant season.

by predicting bounding boxes but also by generating precise object masks, which brings its functionality closer to that of Mask R-CNN, long a benchmark in instance segmentation. Comparing the two models is therefore pertinent: both now offer strong instance-segmentation solutions, and evaluating their performance is well justified in agricultural applications, where both detection speed and segmentation accuracy are critical.

2. Deep Learning Models

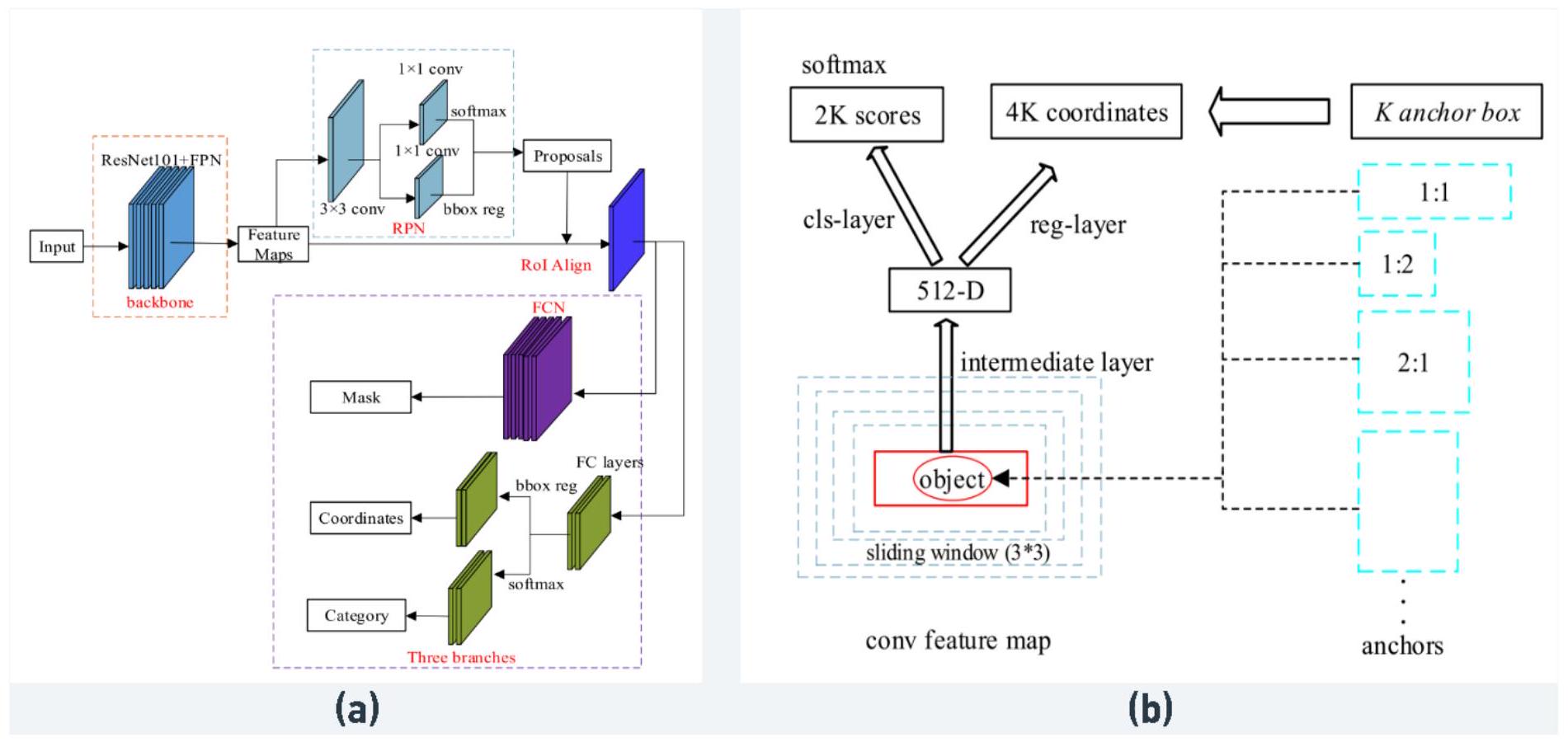

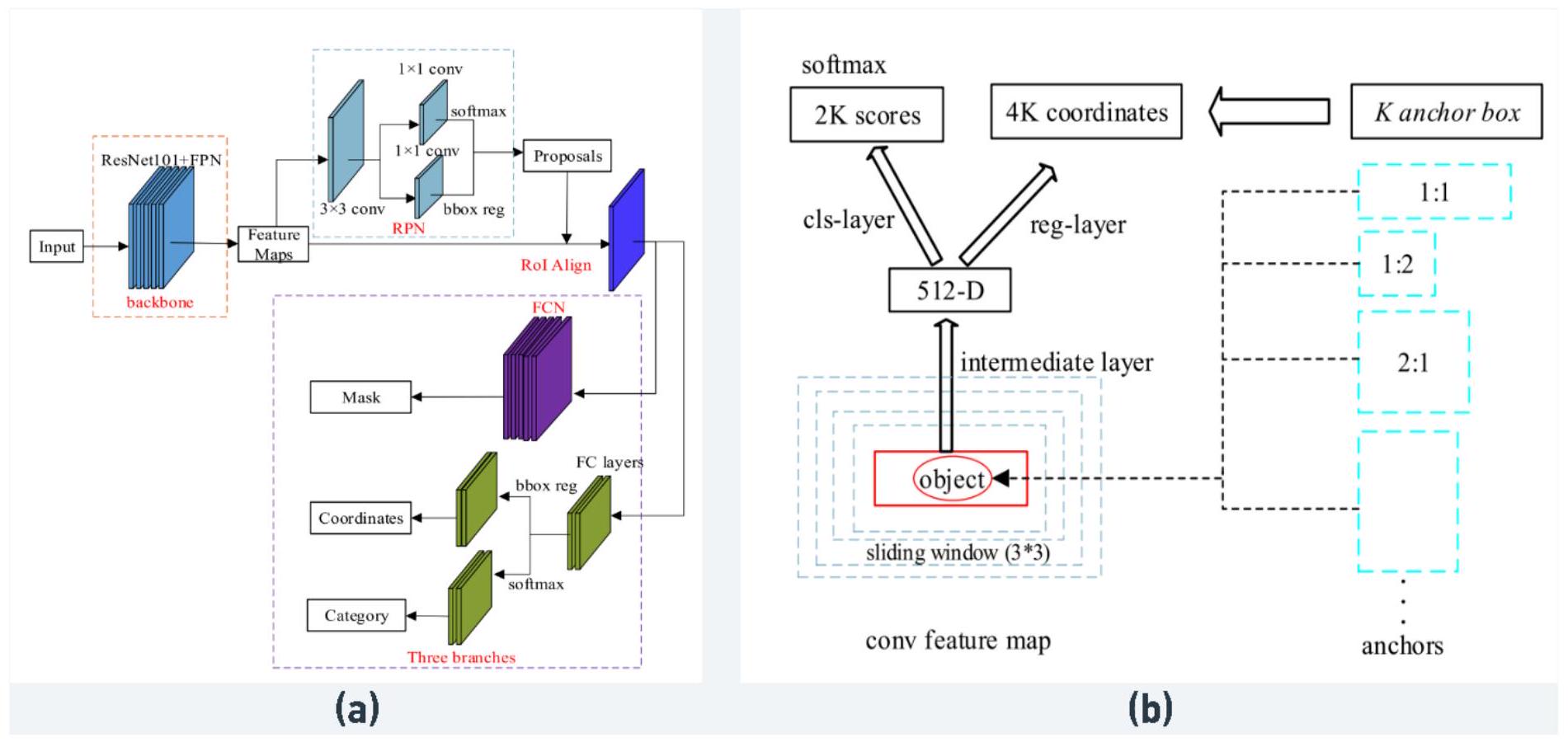

2.1 Mask R-CNN

backbone network. The bounding-box branch predicts the object class and bounding-box coordinates for each region proposal, while the mask branch predicts a binary mask for each object within its bounding box.
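To make the mask branch's output concrete: in the original Mask R-CNN design [22], the mask branch produces a small fixed-size mask (28×28) per region of interest, which at inference time is resized to the predicted box and binarized at a threshold of 0.5. The pure-Python sketch below illustrates only that pasting step. The function name `paste_roi_mask` and the use of nearest-neighbour resizing are illustrative assumptions, not the implementation used in this study (bilinear resizing is more common in practice).

```python
from typing import List, Tuple

Grid = List[List[float]]


def paste_roi_mask(
    roi_mask: Grid,
    box: Tuple[int, int, int, int],
    image_hw: Tuple[int, int],
    threshold: float = 0.5,
) -> List[List[int]]:
    """Resize a small per-RoI mask into its box and binarize it (hypothetical helper).

    roi_mask: m x m mask probabilities from the mask branch.
    box: (x1, y1, x2, y2) in integer pixel coordinates, with x2 and y2 exclusive.
    image_hw: (height, width) of the full image.
    """
    height, width = image_hw
    x1, y1, x2, y2 = box
    m_rows, m_cols = len(roi_mask), len(roi_mask[0])
    box_h, box_w = y2 - y1, x2 - x1
    full = [[0] * width for _ in range(height)]
    # Visit only the pixels of the box that fall inside the image.
    for y in range(max(y1, 0), min(y2, height)):
        # Nearest-neighbour mapping from an image row to a row of the small mask.
        r = min((y - y1) * m_rows // box_h, m_rows - 1)
        for x in range(max(x1, 0), min(x2, width)):
            c = min((x - x1) * m_cols // box_w, m_cols - 1)
            full[y][x] = 1 if roi_mask[r][c] >= threshold else 0
    return full


if __name__ == "__main__":
    # A 2x2 "mask branch" output pasted into a 4x4 box inside a 6x6 image.
    mask = paste_roi_mask([[0.9, 0.1], [0.2, 0.8]], (1, 1, 5, 5), (6, 6))
    for row in mask:
        print(row)
```

Pixels outside the predicted box stay 0, which is why, in this design, an inaccurate box directly limits the quality of the final mask.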

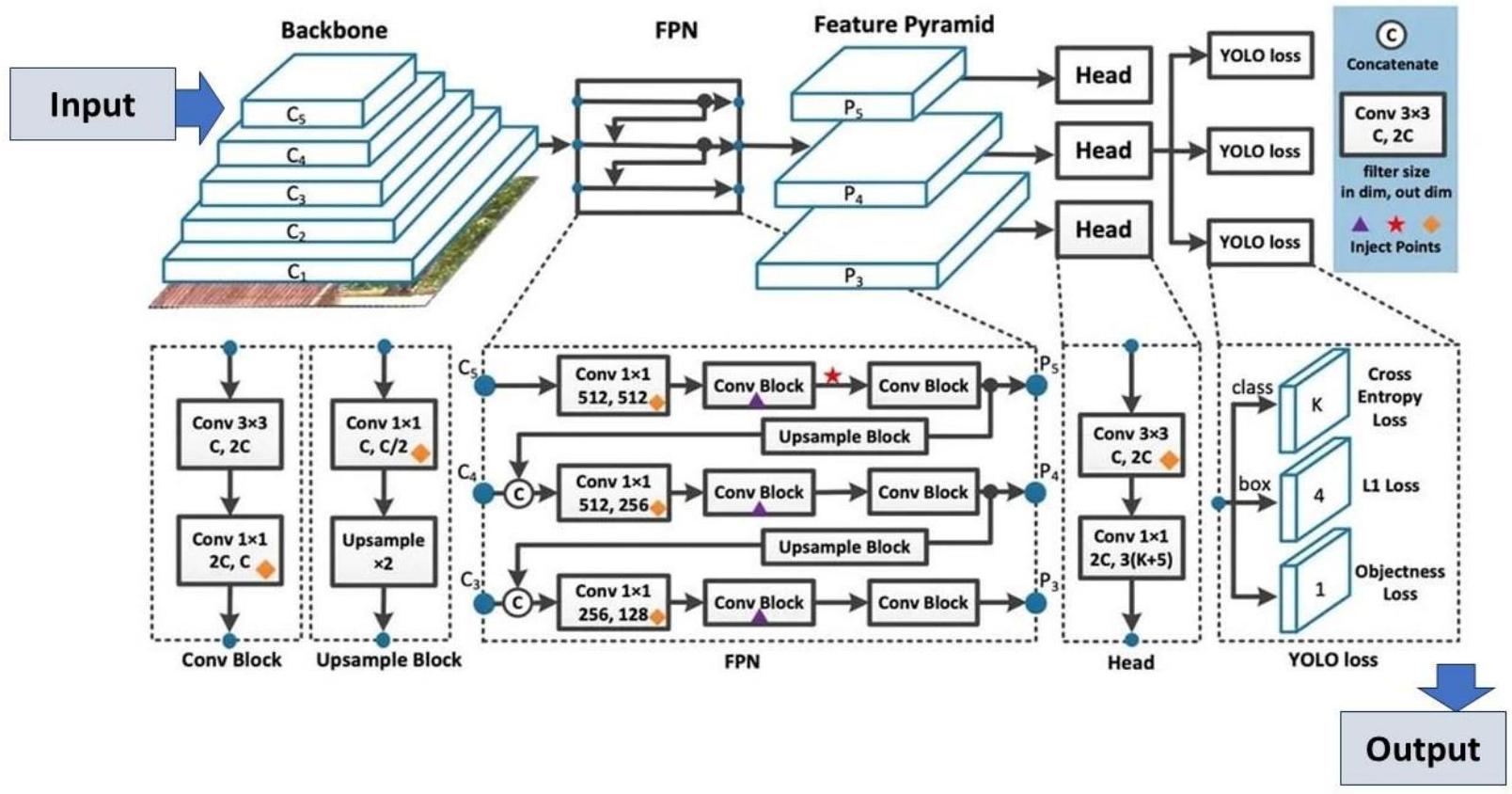

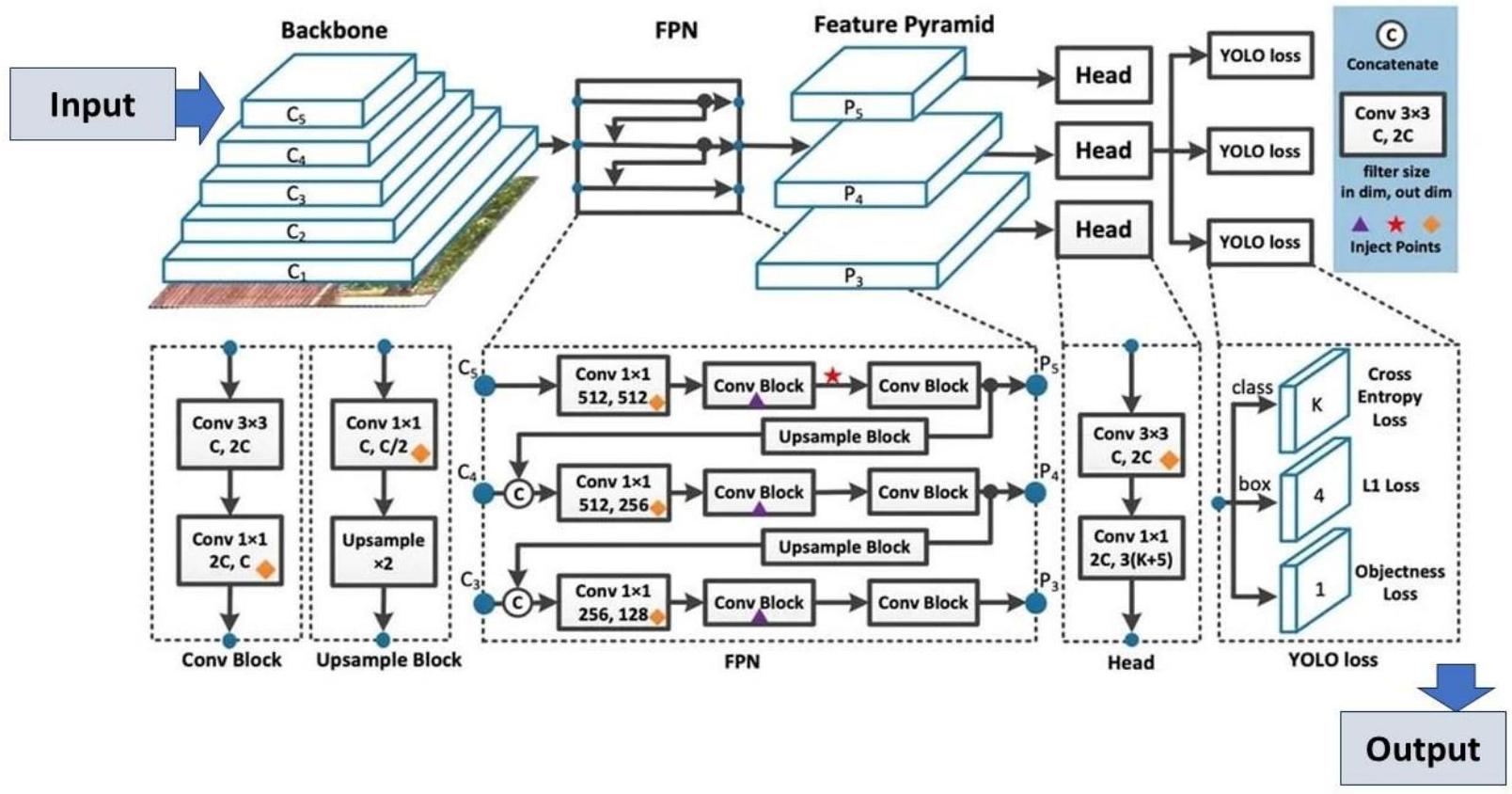

2.2 YOLOv8

improving the processing of low-level features, YOLOv8 becomes a powerful tool for the early detection of subtle signs of agricultural pests and diseases, which is crucial for maintaining crop health [102]. For example, improved versions of YOLOv8 have been applied to disease detection in greenhouse vegetables [103] to support early detection and management of plant diseases. Other innovations include integrating attention mechanisms into YOLOv8 to strengthen its object detection capabilities, which [104] tested to improve tomato detection accuracy in cluttered agricultural environments. Similarly, [105] combined YOLOv8 with a lightweight transformer structure to improve feature extraction, which improved strawberry ripeness detection in field environments. In orchard environments, YOLOv8 has been used with shape-fitting techniques to accurately size immature green apples, which is vital for yield estimation and growth monitoring [106]. Likewise, a specialized YOLOv8 model has been developed to monitor the ripening of color-changing melons, a significant step toward tailored applications in agriculture [107].

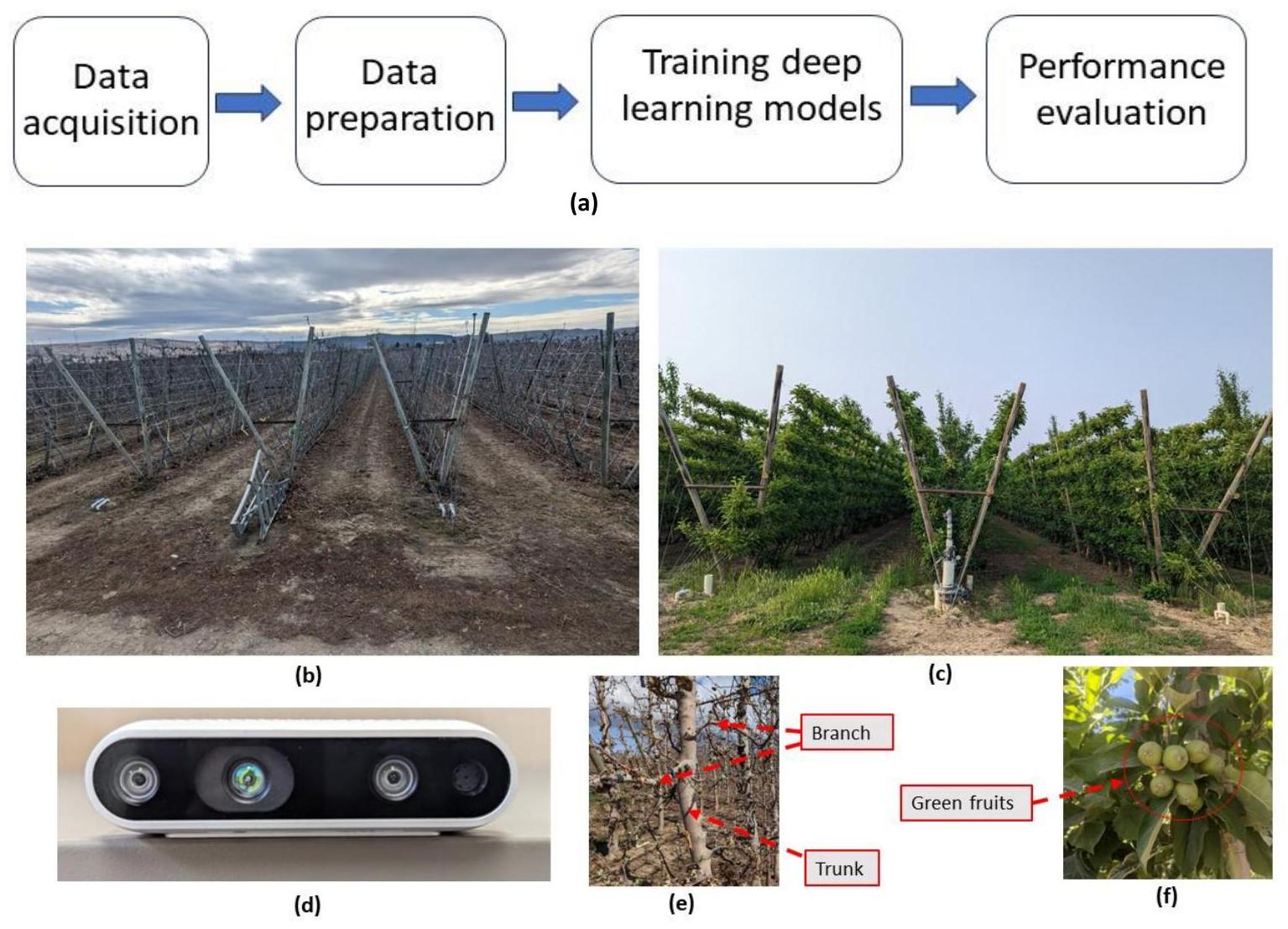

3. Materials and Methods

3.1 Study Site and Data Acquisition

3.2 Data Preparation

3.3 Deep Learning Model Implementation

| Applied method | Value |
|---|---|
| Hue augmentation (fraction) | 0.015 |
| Saturation augmentation (fraction) | 0.7 |
| Value (brightness) augmentation (fraction) | 0.4 |
| Rotation | 0.0 |
| Translation | 0.1 |
| Scale | 0.5 |
| Left-right flip (probability) | 0.5 |
| Mosaic (probability) | 1.0 |
| Weight decay | 0.0005 |
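The settings in the table above correspond to hyperparameters that the Ultralytics YOLOv8 trainer exposes under the names used below (`hsv_h`, `hsv_s`, `hsv_v`, `degrees`, `translate`, `scale`, `fliplr`, `mosaic`, `weight_decay`). The sketch below assumes, without confirmation from the text, that training used Ultralytics, and shows how the three HSV fractions act as bounds on random multiplicative gains, in the style of YOLO's HSV augmentation. The helper functions are hypothetical and for illustration only.

```python
import random
from typing import Dict, Tuple

# Values from the augmentation table, keyed by Ultralytics-style argument names
# (assumption: the study trained YOLOv8 with the Ultralytics package).
AUGMENTATION: Dict[str, float] = {
    "hsv_h": 0.015,          # hue gain bound (fraction)
    "hsv_s": 0.7,            # saturation gain bound (fraction)
    "hsv_v": 0.4,            # value/brightness gain bound (fraction)
    "degrees": 0.0,          # rotation range (0 disables rotation)
    "translate": 0.1,        # translation as a fraction of image size
    "scale": 0.5,            # scale gain
    "fliplr": 0.5,           # probability of a left-right flip
    "mosaic": 1.0,           # probability of mosaic augmentation
    "weight_decay": 0.0005,  # optimizer regularization, not an image augmentation
}


def sample_hsv_gains(cfg: Dict[str, float], rng: random.Random) -> Tuple[float, float, float]:
    """Draw multiplicative H, S, V gains, each in [1 - bound, 1 + bound] (hypothetical helper)."""
    gh, gs, gv = cfg["hsv_h"], cfg["hsv_s"], cfg["hsv_v"]
    return (
        1.0 + rng.uniform(-1.0, 1.0) * gh,
        1.0 + rng.uniform(-1.0, 1.0) * gs,
        1.0 + rng.uniform(-1.0, 1.0) * gv,
    )


def sample_flip(cfg: Dict[str, float], rng: random.Random) -> bool:
    """Decide whether to mirror an image left-right (hypothetical helper)."""
    return rng.random() < cfg["fliplr"]


if __name__ == "__main__":
    rng = random.Random(0)
    print(sample_hsv_gains(AUGMENTATION, rng), sample_flip(AUGMENTATION, rng))
```

With these values, hue is perturbed by at most about ±1.5% while saturation can vary by ±70%, which is one plausible way to expose a model to the strong sunlight and shadow variation described for orchard imagery.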

3.4 Performance Evaluation

across k classes (Equation 4), was critical for assessing the model's accuracy at a threshold

These metrics are calculated as follows:
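Assuming the standard definitions implied by the symbols used in this section (TP, FP, FN, k, and the per-class AP), the metrics take the following form, where $p_i(r)$ is the precision of class $i$ as a function of recall $r$:

$$
\text{Precision} = \frac{TP}{TP + FP}, \qquad \text{Recall} = \frac{TP}{TP + FN}
$$

$$
AP_i = \int_{0}^{1} p_i(r)\,dr, \qquad \text{mAP} = \frac{1}{k}\sum_{i=1}^{k} AP_i
$$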

where TP, FP, and FN denote true positive, false positive, and false negative object instances, respectively. The variable k is the total number of object classes, and (AP)
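A minimal pure-Python sketch of these metrics follows. It assumes that a prediction counts as a true positive when its mask IoU with an unmatched ground-truth instance reaches the IoU threshold (0.5 for mAP@0.5), and it uses all-point interpolated AP, the PASCAL VOC-style variant. The interpolation scheme and all function names are assumptions for illustration; the study's own evaluation code is not described here.

```python
from typing import List, Sequence, Set, Tuple

Pixel = Tuple[int, int]


def mask_iou(a: Set[Pixel], b: Set[Pixel]) -> float:
    """Intersection over union of two masks given as sets of (row, col) pixels."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def precision_recall(tp: int, fp: int, fn: int) -> Tuple[float, float]:
    """Precision = TP / (TP + FP); Recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def average_precision(scores: Sequence[float], is_tp: Sequence[bool], n_gt: int) -> float:
    """All-point interpolated AP for a single class.

    scores: confidence of each prediction.
    is_tp: whether each prediction matched a ground-truth instance at the IoU threshold.
    n_gt: number of ground-truth instances of this class.
    """
    if n_gt == 0:
        return 0.0
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    tp = fp = 0
    recalls: List[float] = [0.0]      # sentinel at recall 0
    precisions: List[float] = [0.0]   # sentinel value; never used as a rectangle height
    for i in order:  # walk predictions from most to least confident
        if is_tp[i]:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / n_gt)
        precisions.append(tp / (tp + fp))
    # Precision envelope: make precision non-increasing as recall grows.
    for j in range(len(precisions) - 2, -1, -1):
        precisions[j] = max(precisions[j], precisions[j + 1])
    # Area under the envelope: a rectangle wherever recall increases.
    return sum((recalls[j] - recalls[j - 1]) * precisions[j] for j in range(1, len(recalls)))


def mean_average_precision(per_class_ap: Sequence[float]) -> float:
    """mAP = (1 / k) * sum of AP over the k classes."""
    return sum(per_class_ap) / len(per_class_ap)


if __name__ == "__main__":
    # Four predictions for one class with four ground-truth instances.
    ap = average_precision([0.9, 0.8, 0.7, 0.6], [True, False, True, True], n_gt=4)
    print(precision_recall(3, 1, 1), ap)
```

In the usage example, three of the four predictions are true positives, giving a precision and recall of 0.75 each, while the AP of 0.625 also reflects where in the confidence ranking the false positive falls.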

4. Results and Discussion

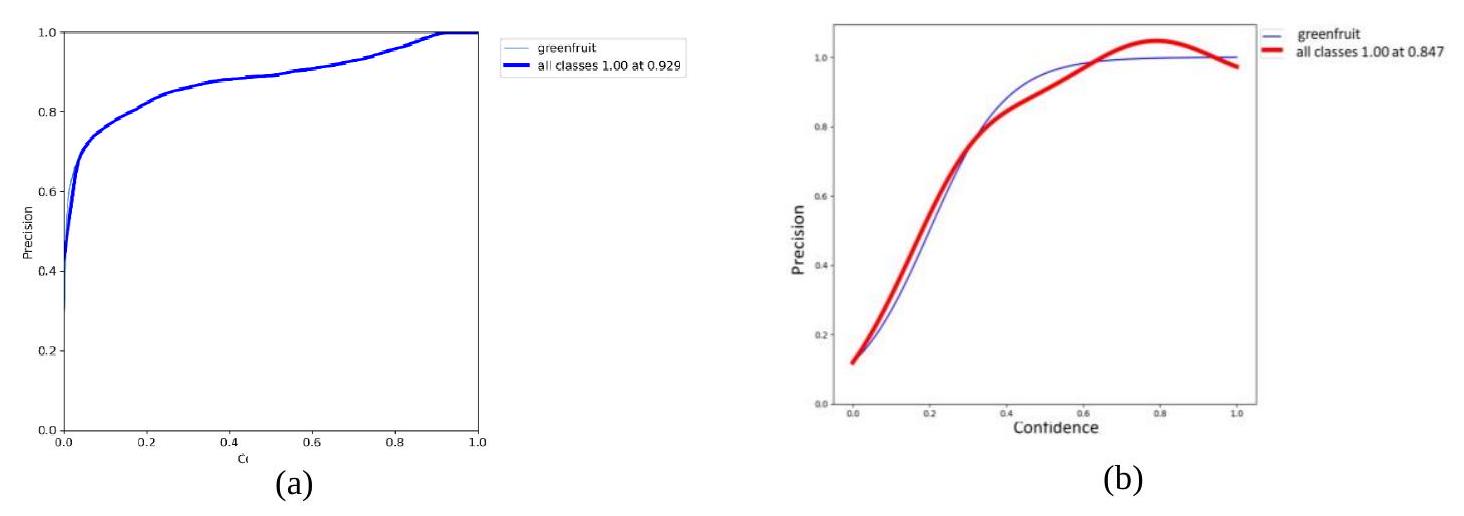

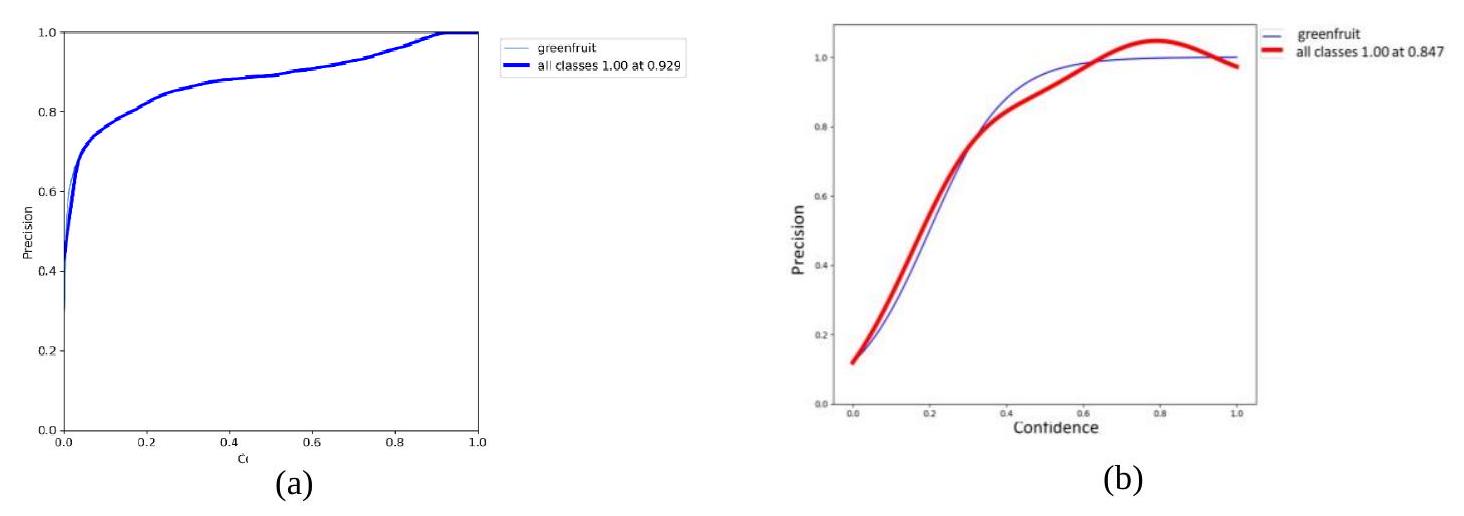

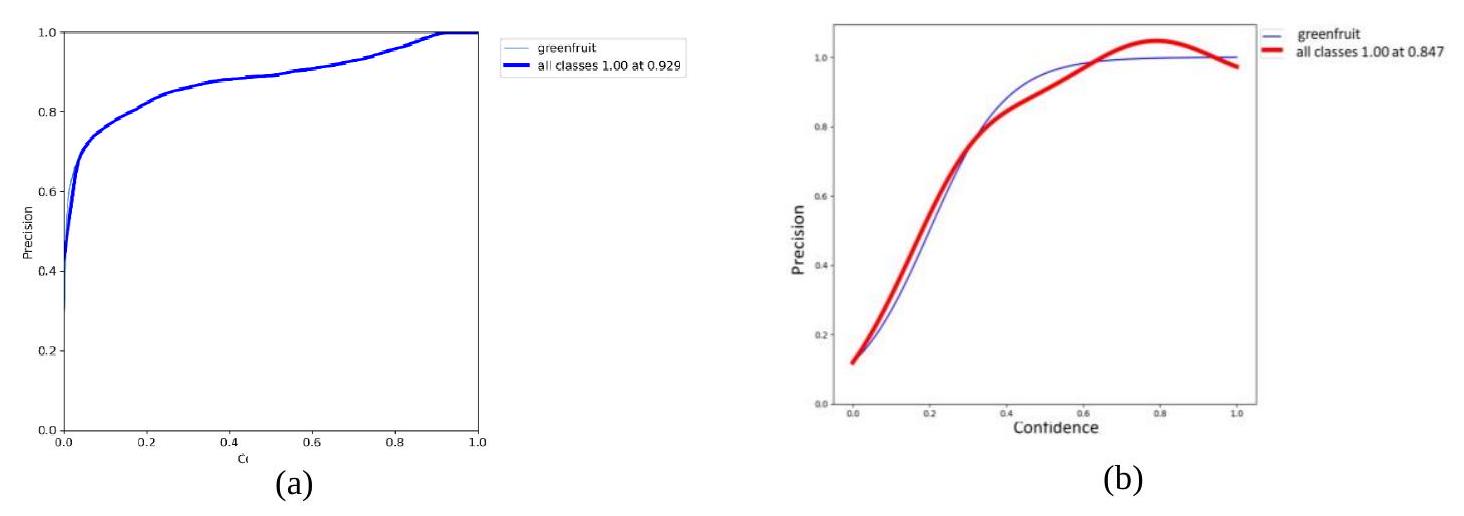

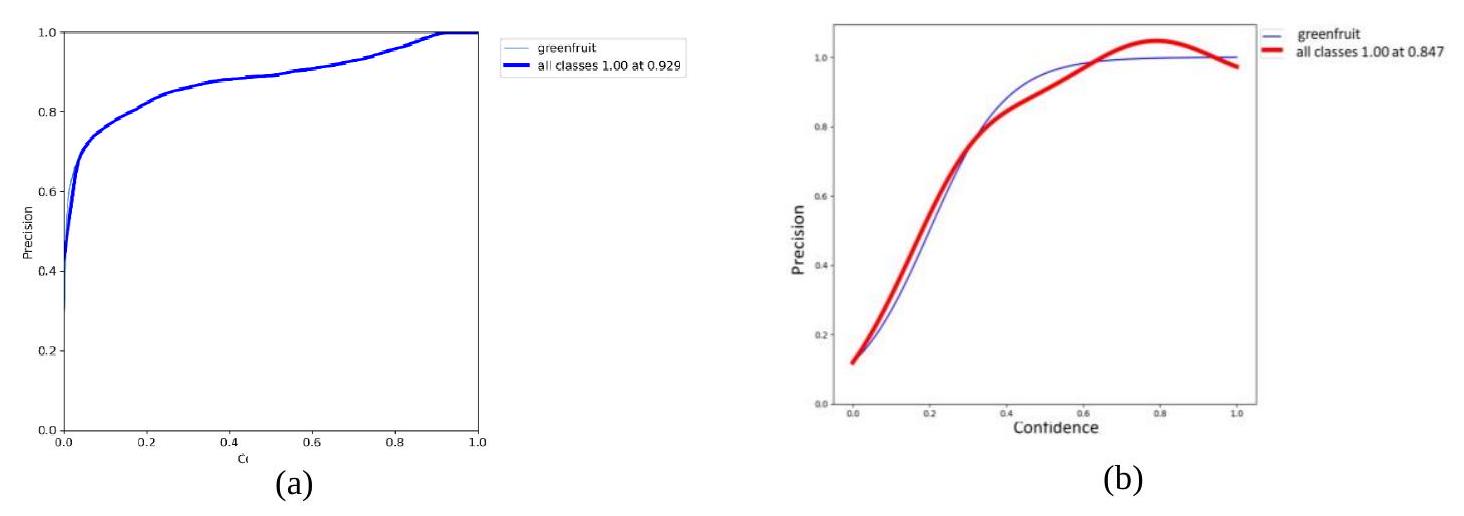

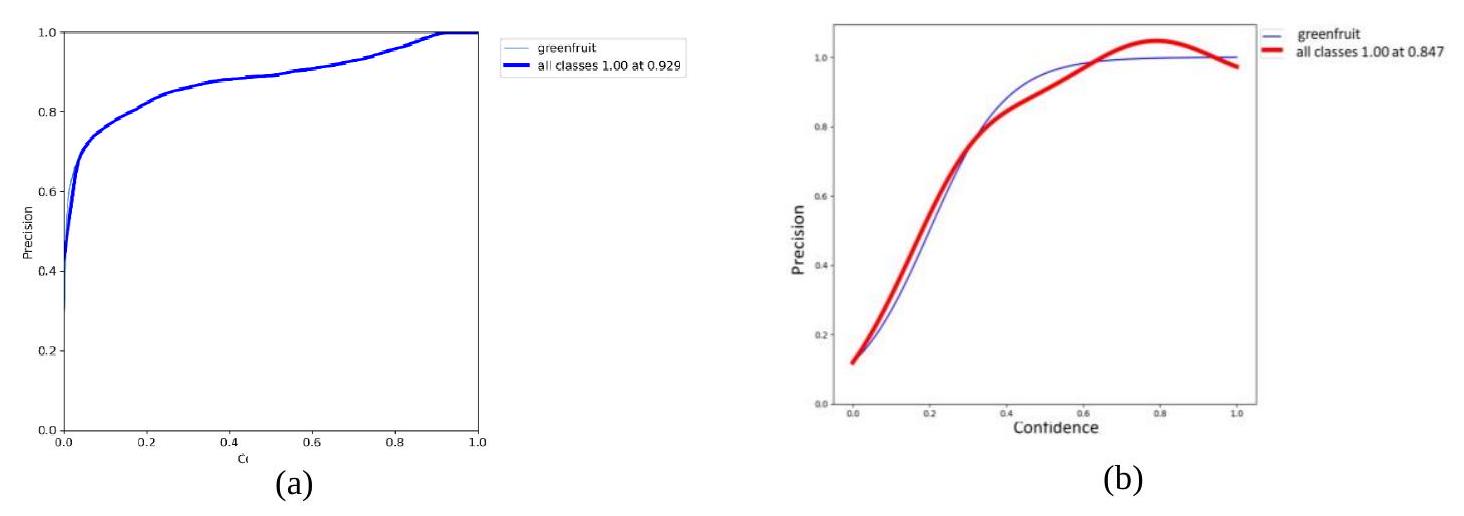

4.1 Single-Class Instance Segmentation of Immature Green Apples (Fruitlets)

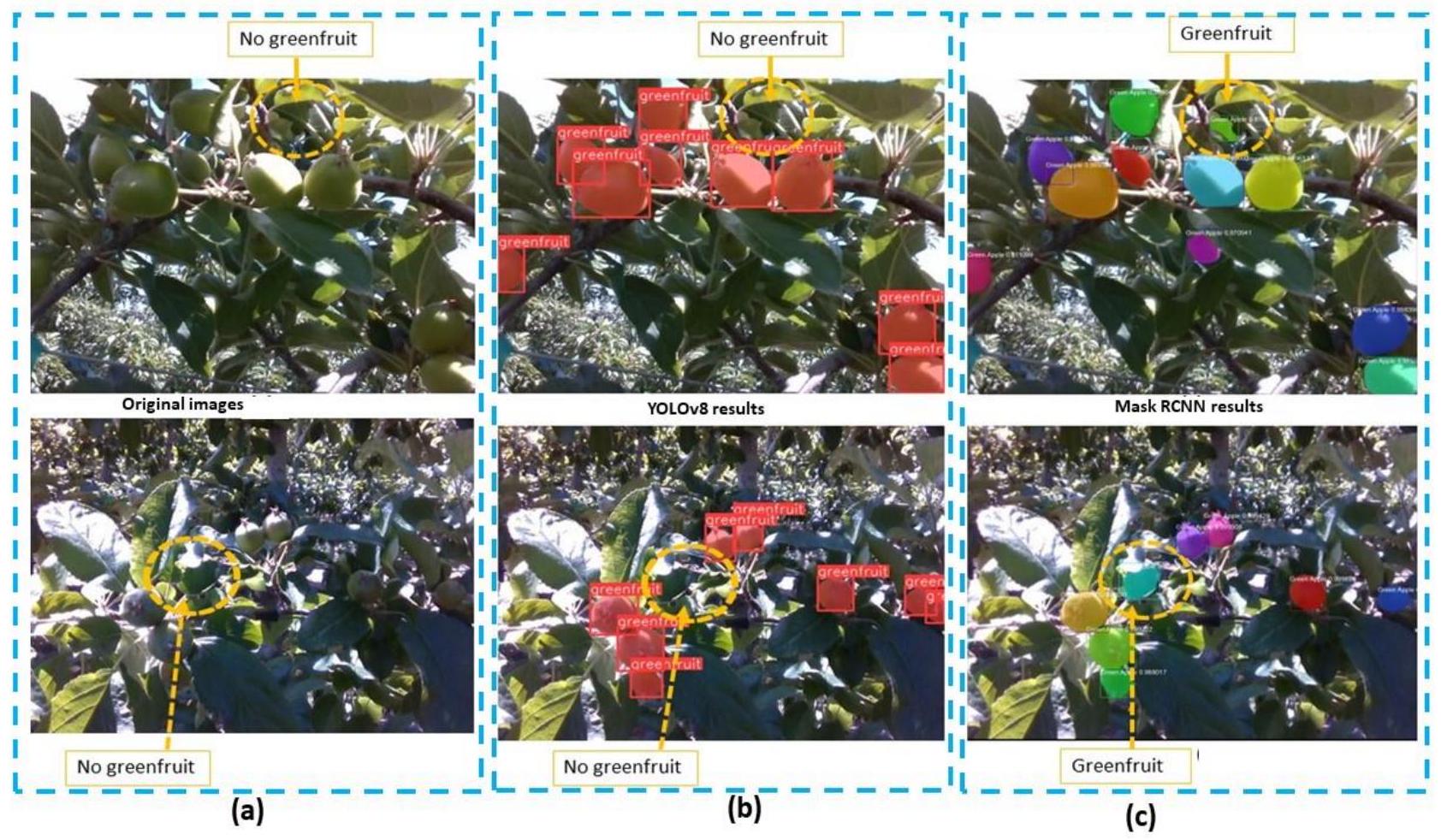

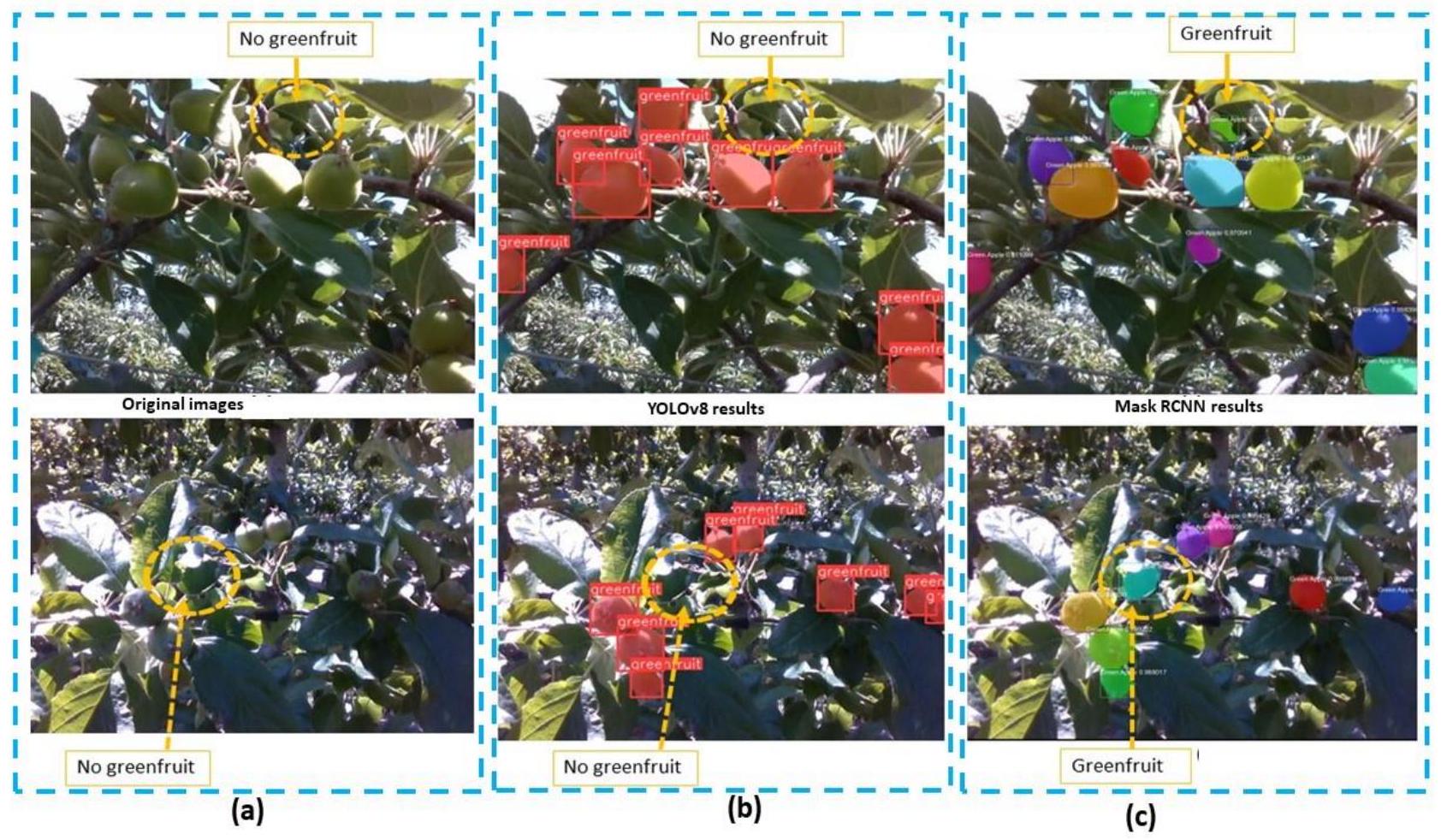

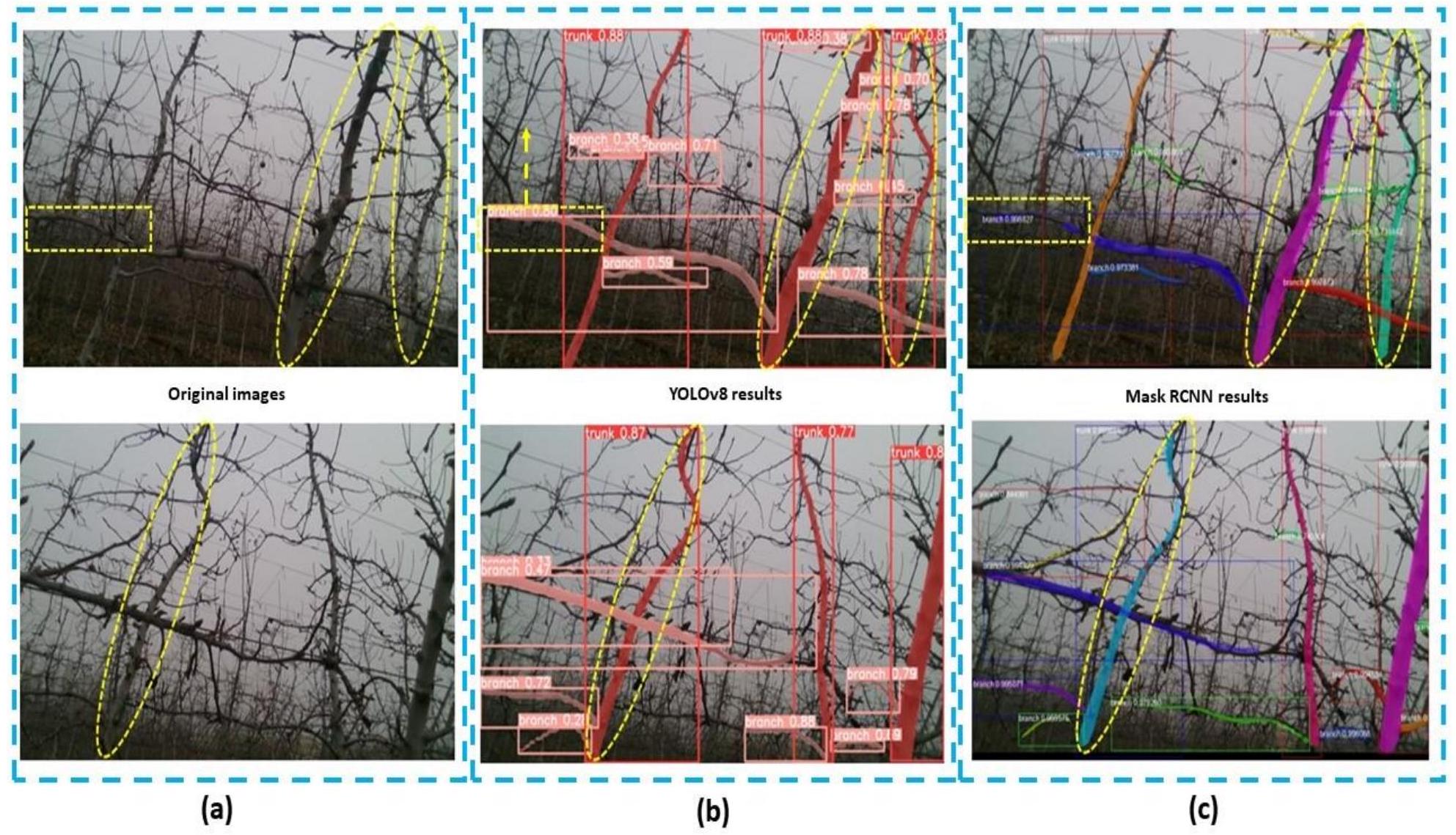

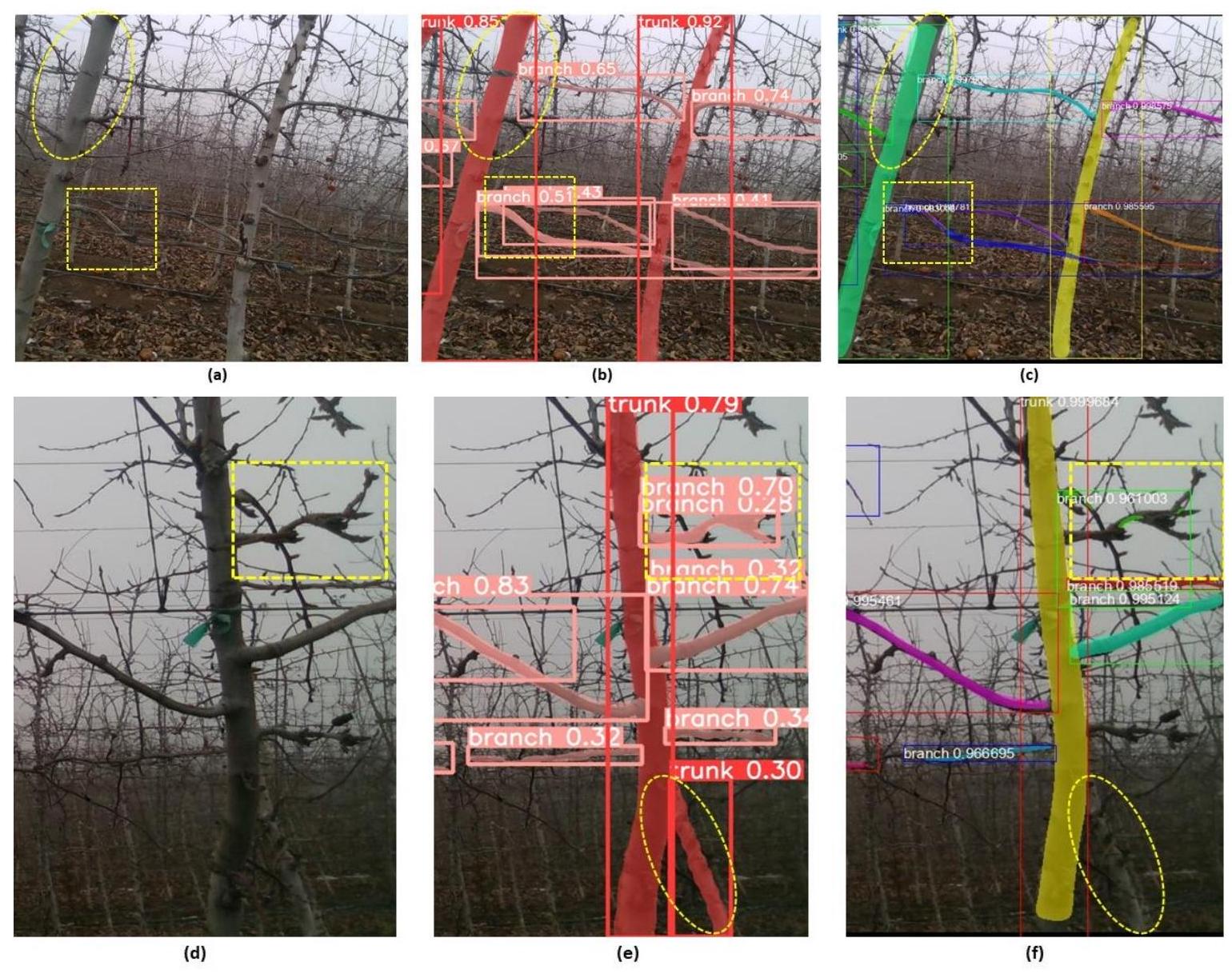

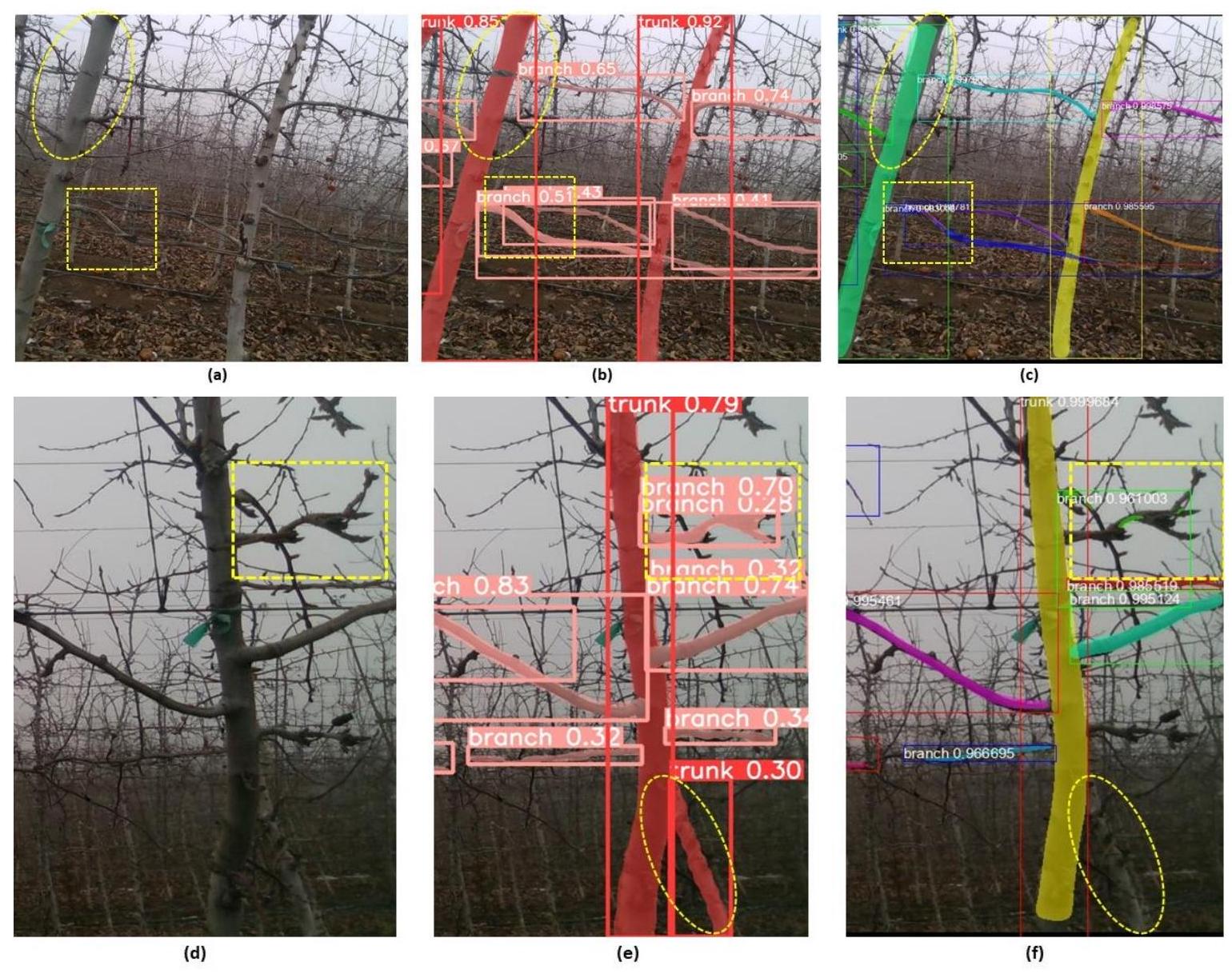

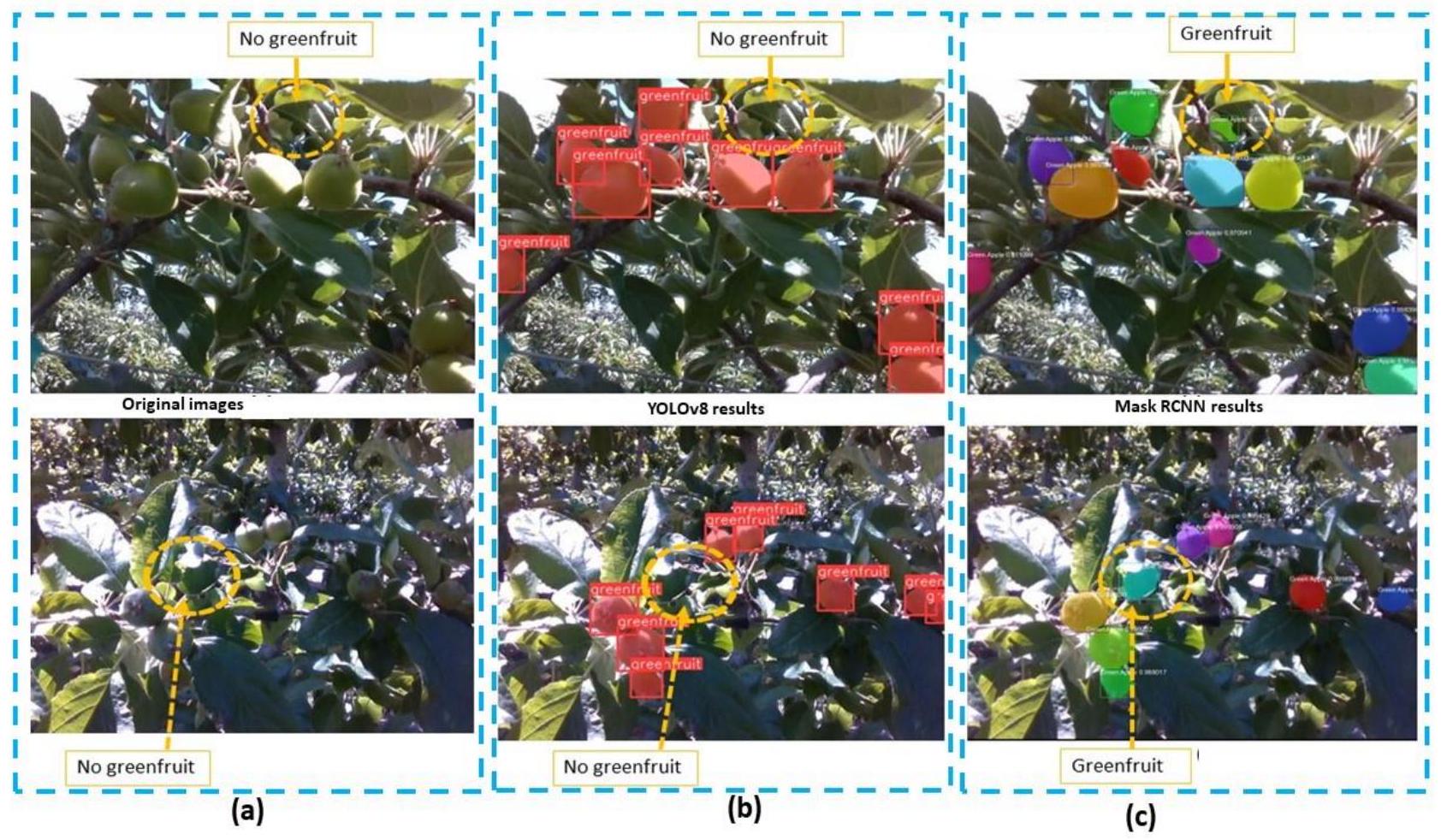

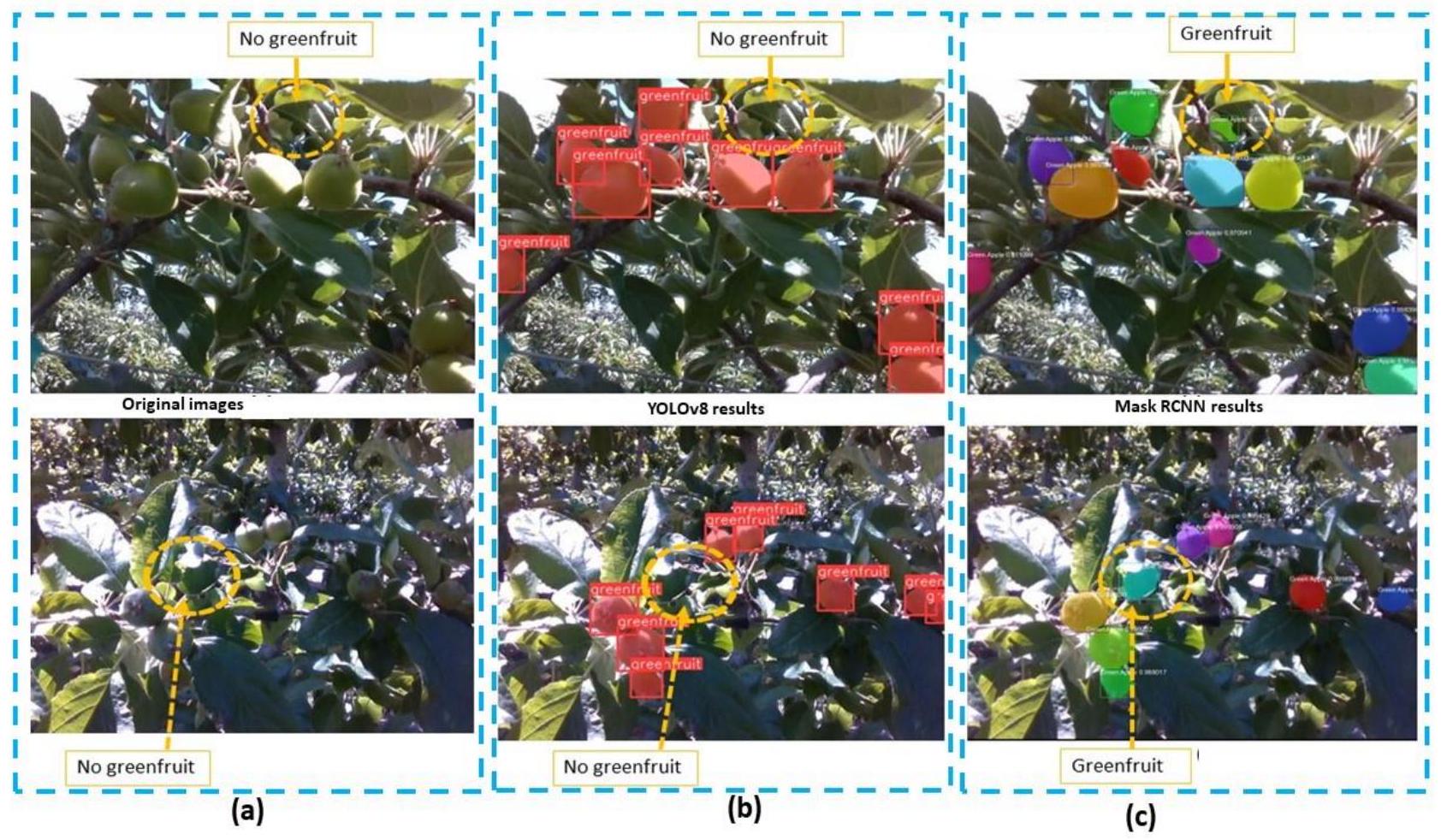

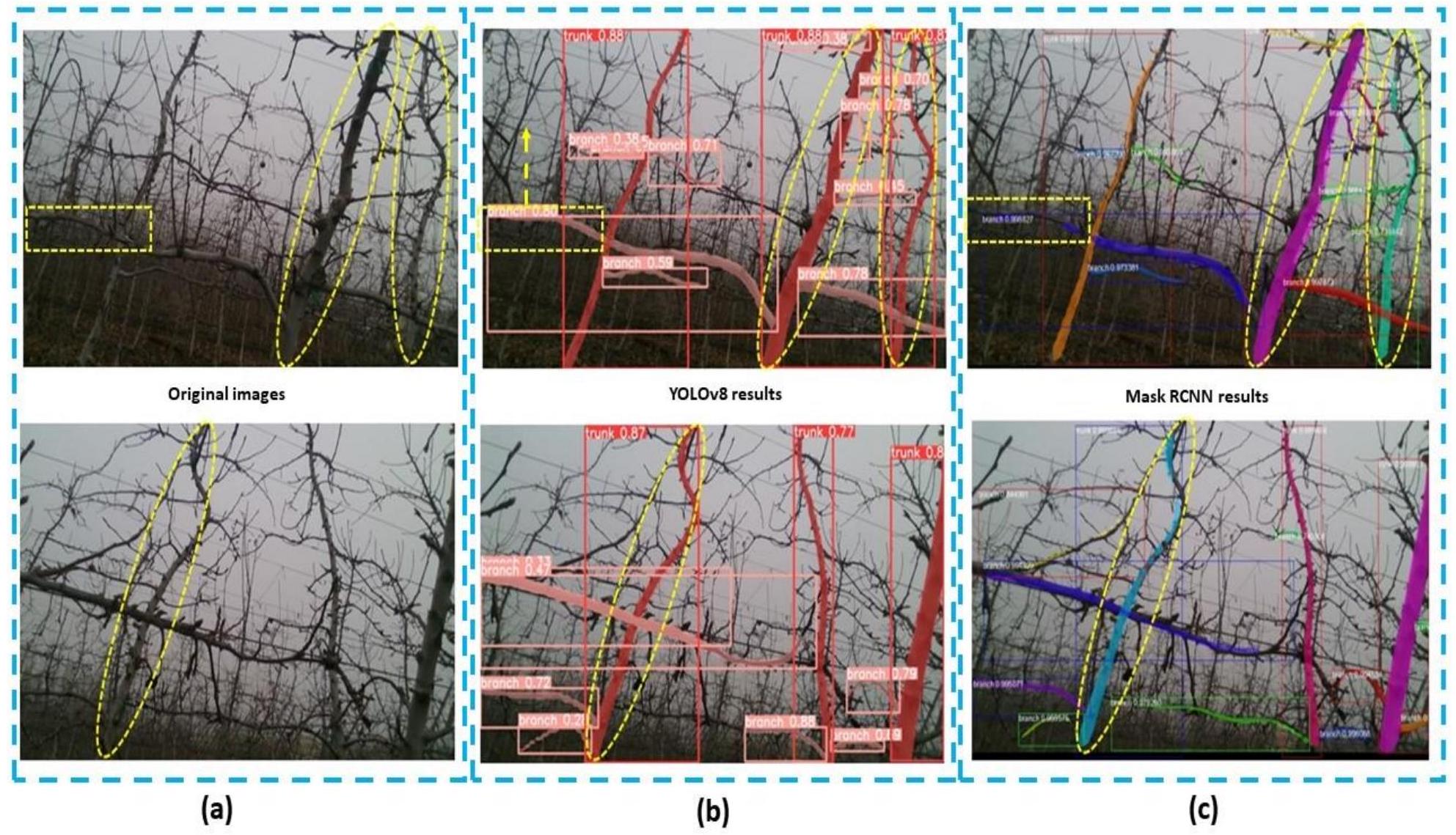

The performance differences between YOLOv8 and Mask R-CNN generally reflect their distinct architectures and the way each processes images. YOLOv8, a one-stage detector, is designed for both speed and accuracy, which enables it to exclude similar-looking non-target regions, as observed in the segmentation tasks (Fig. 8b). Its direct approach to object detection skips the region-proposal step, resulting in fewer false positives in canopy regions whose color resembles that of the target fruit. Mask R-CNN, by contrast, uses a two-stage process that generates region proposals before classifying and segmenting objects. This can sometimes cause non-target regions, such as leaves and stems, to be misclassified as fruit (Fig. 8c). Moreover, its performance appears more sensitive to lighting variations, which can lead to object identification errors under extreme lighting conditions such as direct bright sunlight and dark shadows (Fig. 9c). Despite these differences, there are cases in which Mask R-CNN may remain the preferred choice. Its two-stage process, particularly the region-proposal step, can be advantageous in complex segmentation tasks where precision is critical and objects are closely clustered or partially occluded. Green fruit segmentation has previously been investigated using a variety of approaches. Wei et al.'s D2D framework [108], GHFormer's focus on nighttime detection [109], and Liu et al.

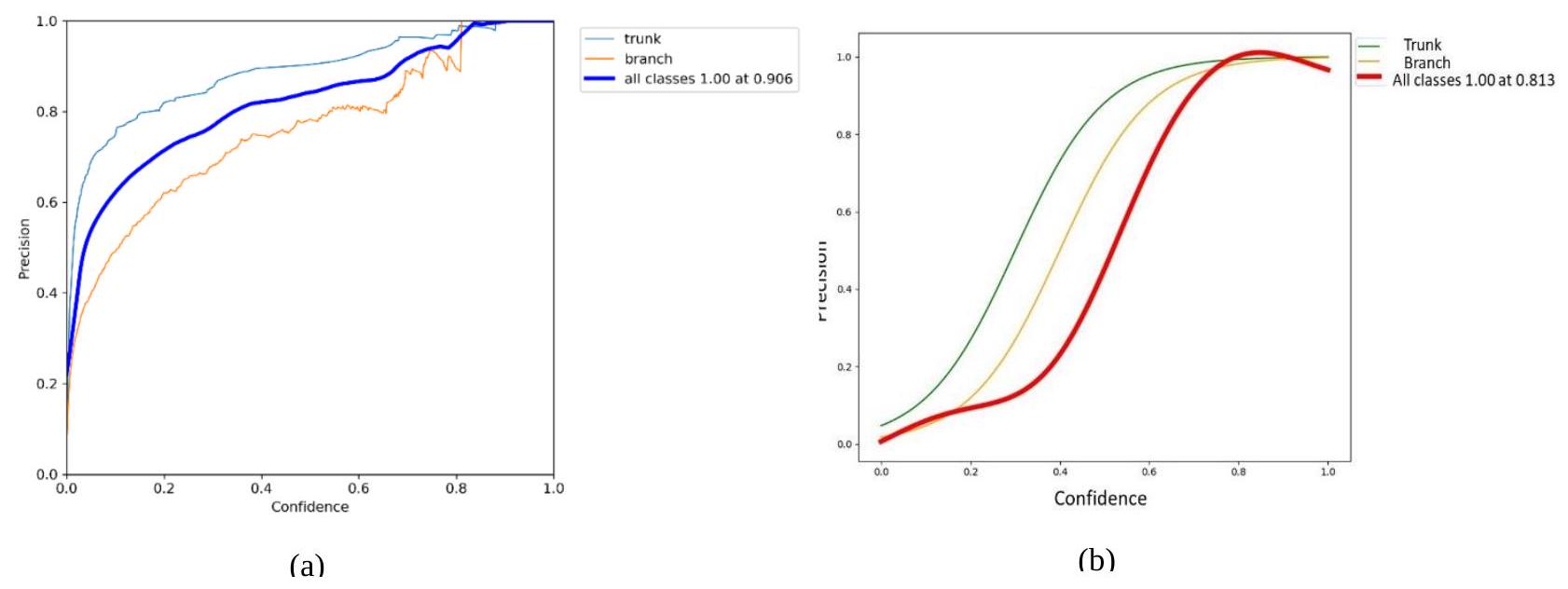

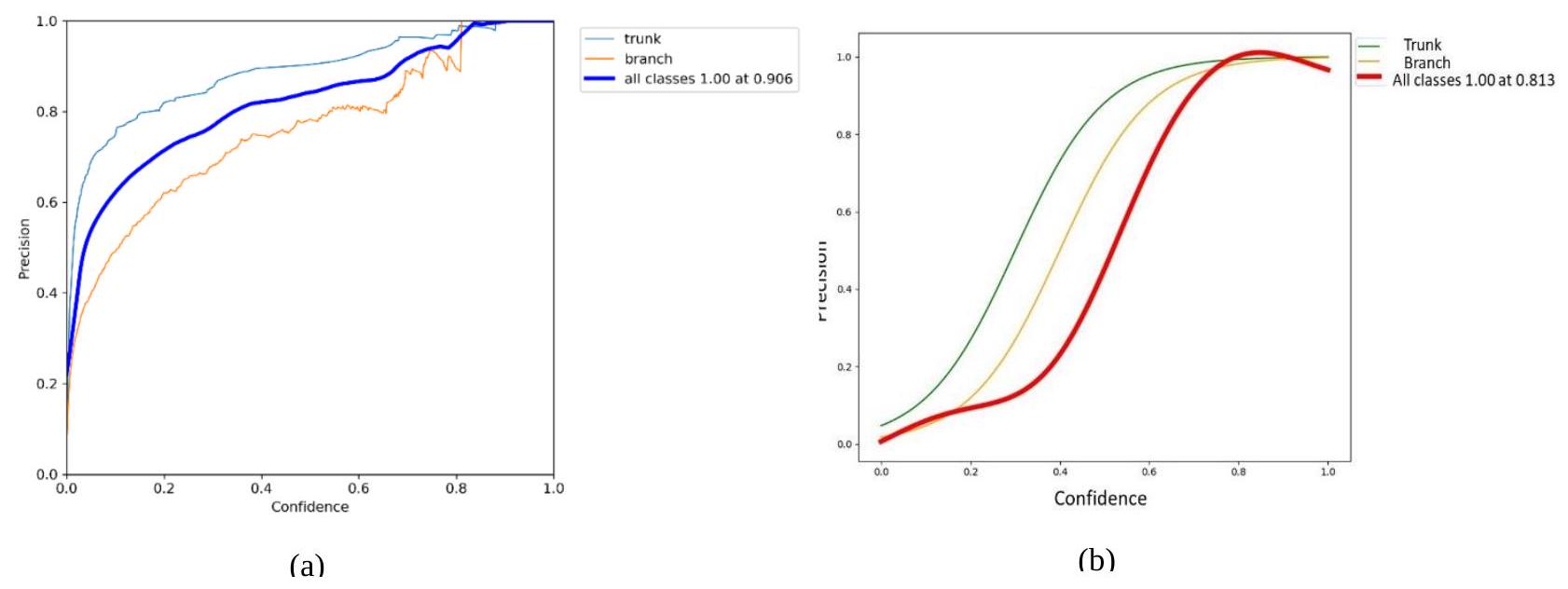

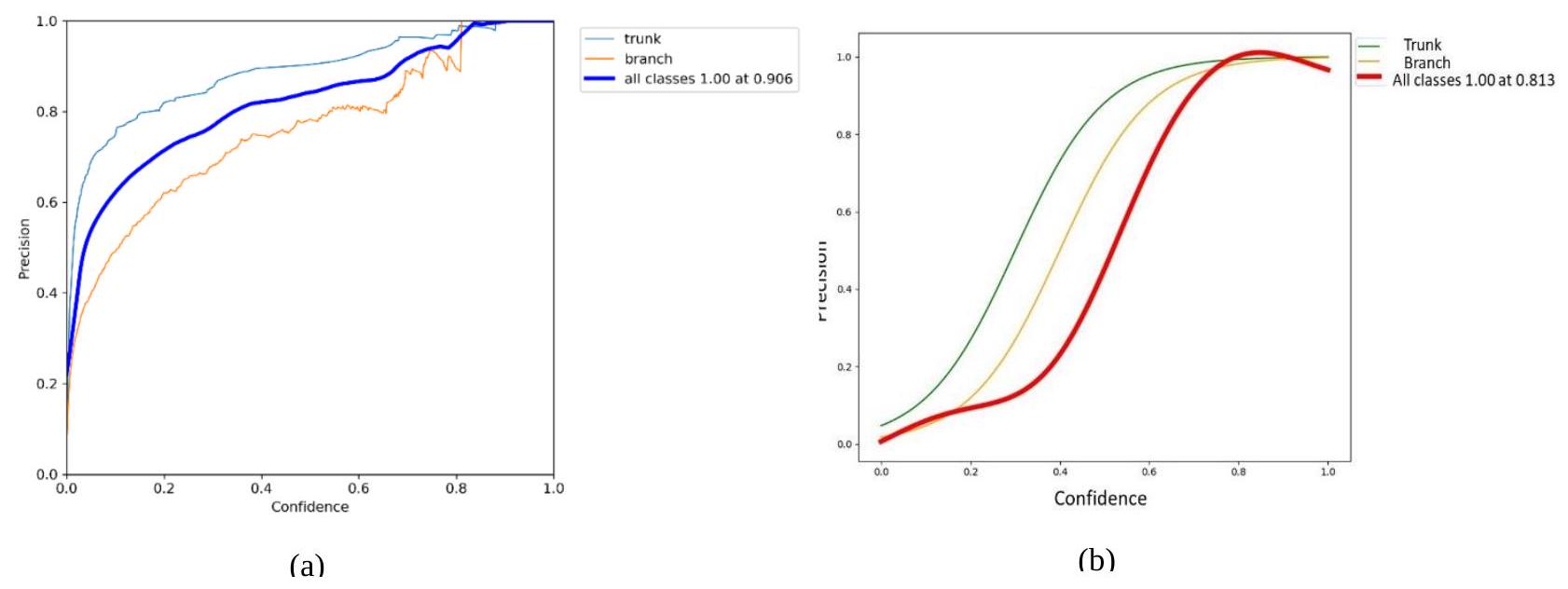

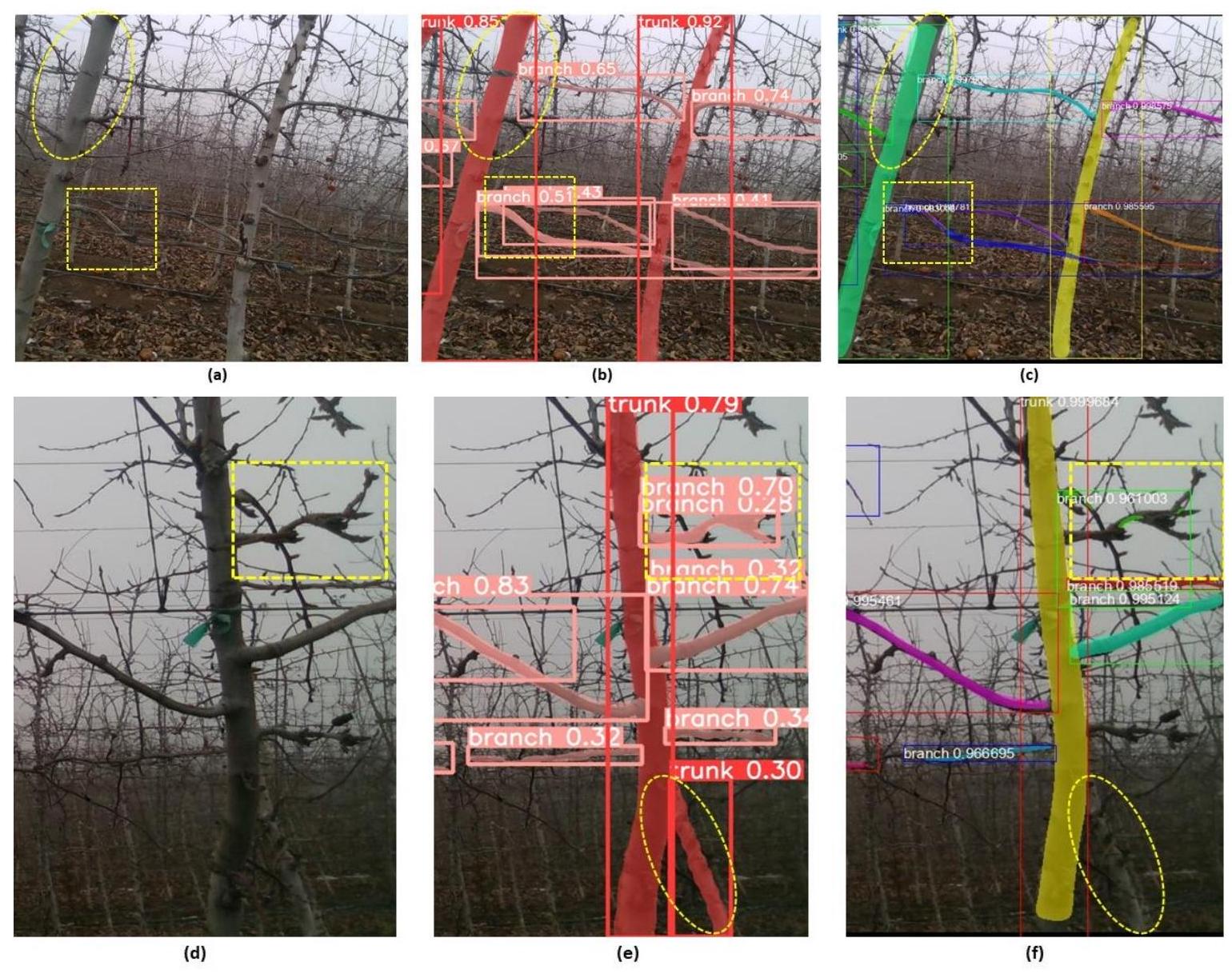

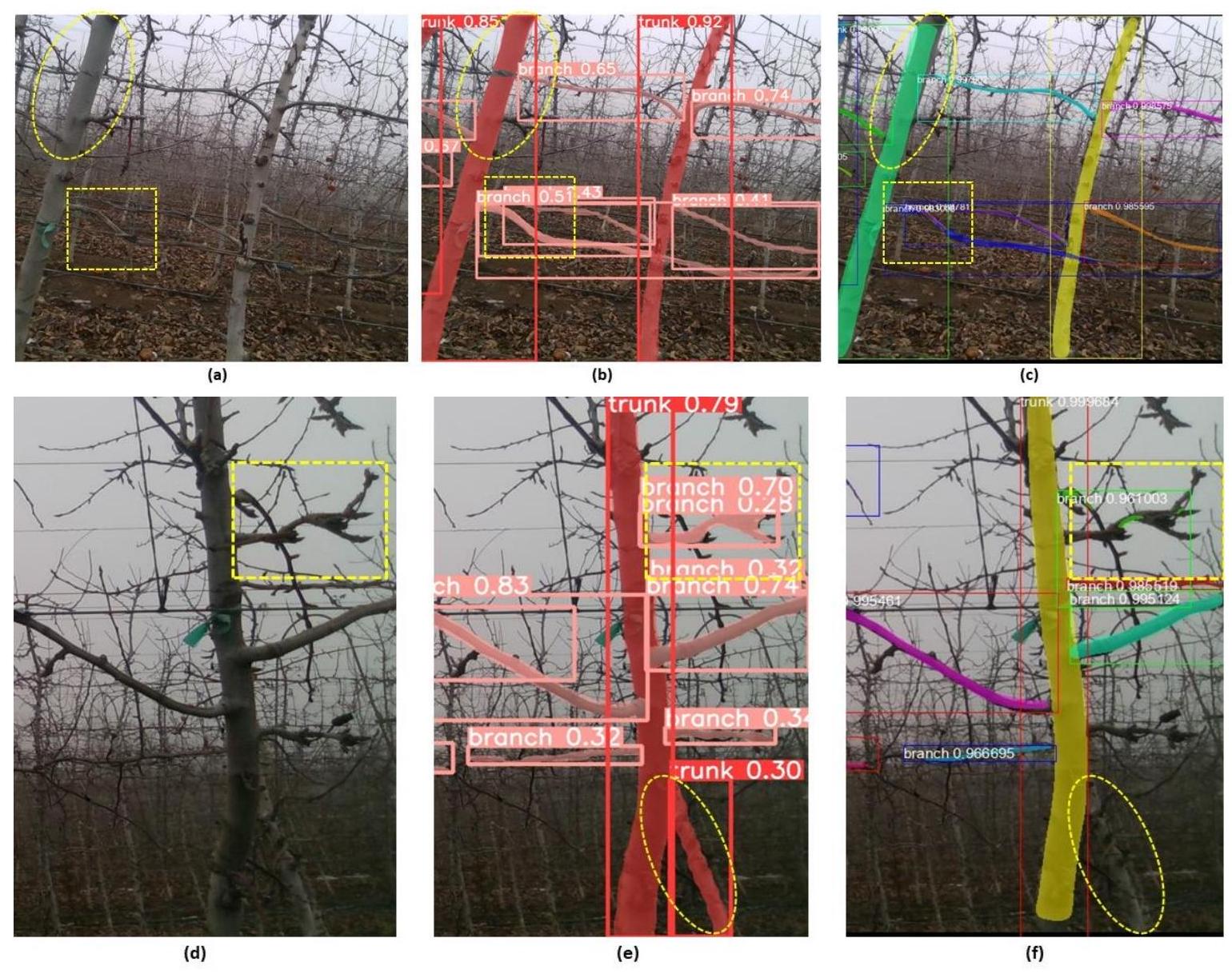

4.2 Multi-Class Instance Segmentation in Dormant Apple Tree Images

| Model | Precision (%) | Recall (%) | mAP@0.5 | Inference time (ms) | Frames per second (FPS) |
|---|---|---|---|---|---|
| YOLOv8 (single-class) | 92.9 | 97 | 0.902 | 7.8 | 128.21 |
| Mask R-CNN (single-class) | 84.7 | 88 | 0.85 | 12.8 | 78.13 |
| YOLOv8 (multi-class) | 90.6 | 95 | 0.74 | 10.9 | 91.74 |
| Mask R-CNN (multi-class) | 81.3 | 83.7 | 0.700 | 15.6 | 64.10 |

4.3 Discussion

YOLOv8 stands out for its speed, reaching 128 FPS (1.64 times faster than Mask R-CNN) for single-class segmentation and 92 FPS (1.43 times faster than Mask R-CNN) for multi-class segmentation, on the same imaging and computing infrastructure used to test Mask R-CNN. This higher inference speed is a particular advantage for real-time agricultural tasks, as discussed above. Its high precision and recall further confirm its robust performance across diverse environments, including variable lighting conditions. However, while YOLOv8 offers substantial gains in speed and accuracy, it may sacrifice some segmentation detail compared with two-stage models such as Mask R-CNN, making it slightly less suitable where fine detail matters more than processing speed.
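The FPS column in the results table is the reciprocal of the per-image inference time (FPS = 1000 / ms), and the speedups quoted in the discussion are ratios of those times. The short sketch below reproduces that arithmetic from the table's inference times only; the dictionary layout and function names are illustrative.

```python
from typing import Dict, Tuple

# Per-image inference times in milliseconds, taken from the results table.
INFERENCE_MS: Dict[str, Tuple[float, float]] = {
    # task: (YOLOv8, Mask R-CNN)
    "single-class": (7.8, 12.8),
    "multi-class": (10.9, 15.6),
}


def fps(ms_per_image: float) -> float:
    """Frames per second for a given per-image latency in milliseconds."""
    return 1000.0 / ms_per_image


def speedup(fast_ms: float, slow_ms: float) -> float:
    """How many times faster the first model is (equivalently, the ratio of FPS)."""
    return slow_ms / fast_ms


for task, (yolo_ms, mrcnn_ms) in INFERENCE_MS.items():
    print(
        f"{task}: YOLOv8 {fps(yolo_ms):.2f} FPS, "
        f"Mask R-CNN {fps(mrcnn_ms):.2f} FPS, "
        f"speedup {speedup(yolo_ms, mrcnn_ms):.2f}x"
    )
```

This reproduces the table's FPS column up to rounding in the last digit and gives speedups of about 1.64× for single-class and 1.43× for multi-class segmentation.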

5. Conclusion

- Segmentation performance under diverse conditions: Both YOLOv8 and Mask R-CNN effectively segmented apple tree canopy images from both the dormant season and the early growing season. YOLOv8 performed slightly better in environments where objects and backgrounds have similar colors and under variable lighting conditions.

- Single-class segmentation (immature green fruit): YOLOv8 excels at single-class segmentation of immature green fruit, achieving a precision of 0.92 and a recall of 0.97. By comparison, Mask R-CNN shows slightly less effective segmentation, with a precision of 0.84 and a recall of 0.88.

- Multi-class segmentation (trunk and branch detection): In detecting both trunks and branches, YOLOv8 shows higher precision, achieving precision and recall of 0.90 and 0.95, respectively. Mask R-CNN achieved lower precision and recall, at 0.81 and 0.83, respectively, indicating lower effectiveness in multi-class segmentation tasks.

- Inference speed for multi-class segmentation: YOLOv8 maintains strong performance in multi-class segmentation at 91.74 FPS. In contrast, Mask R-CNN's slower inference speed of 64.10 FPS indicates limitations for applications that require rapid responses.

6. Future Work

Acknowledgments

Author Contributions

REFERENCES

[2] Q. Zhang, Y. Liu, C. Gong, Y. Chen, and H. Yu, ‘Applications of deep learning for dense scenes analysis in agriculture: A review’, Sensors, vol. 20, no. 5, p. 1520, 2020.

[3] J. Champ, A. Mora-Fallas, H. Goëau, E. Mata-Montero, P. Bonnet, and A. Joly, ‘Instance segmentation for the fine detection of crop and weed plants by precision agricultural robots’, Appl Plant Sci, vol. 8, no. 7, p. e11373, 2020.

[4] Y. Chen, S. Baireddy, E. Cai, C. Yang, and E. J. Delp, ‘Leaf segmentation by functional modeling’, in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2019, p. 0.

[5] N. Lüling, D. Reiser, and H. W. Griepentrog, ‘Volume and leaf area calculation of cabbage with a neural network-based instance segmentation’, in Precision agriculture’21, Wageningen Academic Publishers, 2021, pp. 2719-2745.

[6] C. Niu, H. Li, Y. Niu, Z. Zhou, Y. Bu, and W. Zheng, ‘Segmentation of cotton leaves based on improved watershed algorithm’, in Computer and Computing Technologies in Agriculture IX: 9th IFIP WG 5.14 International Conference, CCTA 2015, Beijing, China, September 27-30, 2015, Revised Selected Papers, Part I 9, Springer, 2016, pp. 425-436.

[7] V. H. Pham and B. R. Lee, ‘An image segmentation approach for fruit defect detection using k-means clustering and graph-based algorithm’, Vietnam Journal of Computer Science, vol. 2, pp. 25-33, 2015.

[8] M. G. S. Jayanthi and D. R. Shashikumar, ‘Leaf disease segmentation from agricultural images via hybridization of active contour model and OFA’, Journal of Intelligent Systems, vol. 29, no. 1, pp. 35-52, 2017.

[9] J. Clement, N. Novas, J.-A. Gazquez, and F. Manzano-Agugliaro, ‘An active contour computer algorithm for the classification of cucumbers’, Comput Electron Agric, vol. 92, pp. 75-81, 2013.

[10] Y. A. N. Gao, J. F. Mas, N. Kerle, and J. A. Navarrete Pacheco, ‘Optimal region growing segmentation and its effect on classification accuracy’, Int J Remote Sens, vol. 32, no. 13, pp. 3747-3763, 2011.

[11] N. Jothiaruna, K. J. A. Sundar, and B. Karthikeyan, ‘A segmentation method for disease spot images incorporating chrominance in comprehensive color feature and region growing’, Comput Electron Agric, vol. 165, p. 104934, 2019.

[12] J. Ma, K. Du, L. Zhang, F. Zheng, J. Chu, and Z. Sun, ‘A segmentation method for greenhouse vegetable foliar disease spots images using color information and region growing’, Comput Electron Agric, vol. 142, pp. 110-117, 2017.

[13] V. Gupta, N. Sengar, M. K. Dutta, C. M. Travieso, and J. B. Alonso, ‘Automated segmentation of powdery mildew disease from cherry leaves using image processing’, in 2017 International Conference and Workshop on Bioinspired Intelligence (IWOBI), IEEE, 2017, pp. 1-4.

[14] S. D. Khirade and A. B. Patil, ‘Plant disease detection using image processing’, in 2015 International conference on computing communication control and automation, IEEE, 2015, pp. 768-771.

[15] K. Tian, J. Li, J. Zeng, A. Evans, and L. Zhang, ‘Segmentation of tomato leaf images based on adaptive clustering number of K-means algorithm’, Comput Electron Agric, vol. 165, p. 104962, 2019.

[16] T. Arsan and M. M. N. Hameez, ‘A clustering-based approach for improving the accuracy of UWB sensor-based indoor positioning system’, Mobile Information Systems, vol. 2019, pp. 1-13, 2019.

[17] L. C. Ngugi, M. Abelwahab, and M. Abo-Zahhad, ‘Recent advances in image processing techniques for automated leaf pest and disease recognition-A review’, Information processing in agriculture, vol. 8, no. 1, pp. 27-51, 2021.

[18] Q. Zeng, Y. Miao, C. Liu, and S. Wang, ‘Algorithm based on marker-controlled watershed transform for overlapping plant fruit segmentation’, Optical Engineering, vol. 48, no. 2, p. 27201, 2009.

[19] M. G. S. Jayanthi and D. R. Shashikumar, ‘Leaf disease segmentation from agricultural images via hybridization of active contour model and OFA’, Journal of Intelligent Systems, vol. 29, no. 1, pp. 35-52, 2019.

[20] S. Coulibaly, B. Kamsu-Foguem, D. Kamissoko, and D. Traore, ‘Deep learning for precision agriculture: A bibliometric analysis’, Intelligent Systems with Applications, vol. 16, p. 200102, 2022.

[21] N. Siddique, S. Paheding, C. P. Elkin, and V. Devabhaktuni, ‘U-net and its variants for medical image segmentation: A review of theory and applications’, Ieee Access, vol. 9, pp. 82031-82057, 2021.

[22] K. He, G. Gkioxari, P. Dollár, and R. Girshick, ‘Mask r-cnn’, in Proceedings of the IEEE international conference on computer vision, 2017, pp. 2961-2969.

[23] J. Redmon, S. Divvala, R. Girshick, and A. Farhadi, ‘You only look once: Unified, real-time object detection’, in Proceedings of the IEEE conference on computer vision and pattern recognition, 2016, pp. 779-788.

[24] J. Rashid, I. Khan, G. Ali, F. Alturise, and T. Alkhalifah, ‘Real-Time Multiple Guava Leaf Disease Detection from a Single Leaf Using Hybrid Deep Learning Technique.’, Computers, Materials & Continua, vol. 74, no. 1, 2023.

[25] Y. Tian, G. Yang, Z. Wang, E. Li, and Z. Liang, ‘Instance segmentation of apple flowers using the improved mask R-CNN model’, Biosyst Eng, vol. 193, pp. 264-278, 2020.

[26] A. K. Maji, S. Marwaha, S. Kumar, A. Arora, V. Chinnusamy, and S. Islam, ‘SlypNet: Spikelet-based yield prediction of wheat using advanced plant phenotyping and computer vision techniques’, Front Plant Sci, vol. 13, p. 889853, 2022.

[27] J. Liu and X. Wang, ‘Tomato diseases and pests detection based on improved Yolo V3 convolutional neural network’, Front Plant Sci, vol. 11, p. 898, 2020.

[28] M. Lippi, N. Bonucci, R. F. Carpio, M. Contarini, S. Speranza, and A. Gasparri, ‘A yolo-based pest detection system for precision agriculture’, in 2021 29th Mediterranean Conference on Control and Automation (MED), IEEE, 2021, pp. 342-347.

[29] X. Qu, J. Wang, X. Wang, Y. Hu, T. Zeng, and T. Tan, ‘Gravelly soil uniformity identification based on the optimized Mask R-CNN model’, Expert Syst Appl, vol. 212, p. 118837, 2023.

[30] L. Zu, Y. Zhao, J. Liu, F. Su, Y. Zhang, and P. Liu, ‘Detection and segmentation of mature green tomatoes based on mask R-CNN with automatic image acquisition approach’, Sensors, vol. 21, no. 23, p. 7842, 2021.

[31] Q. Wang, M. Cheng, S. Huang, Z. Cai, J. Zhang, and H. Yuan, ‘A deep learning approach incorporating YOLO v5 and attention mechanisms for field real-time detection of the invasive weed Solanum rostratum Dunal seedlings’, Comput Electron Agric, vol. 199, p. 107194, 2022.

[32] H. Li et al., ‘Design of field real-time target spraying system based on improved YOLOv5’, Front Plant Sci, vol. 13, p. 1072631, 2022.

[33] C. Hu, J. A. Thomasson, and M. V Bagavathiannan, ‘A powerful image synthesis and semi-supervised learning pipeline for site-specific weed detection’, Comput Electron Agric, vol. 190, p. 106423, 2021.

[34] S. Chen et al., ‘An approach for rice bacterial leaf streak disease segmentation and disease severity estimation’, Agriculture, vol. 11, no. 5, p. 420, 2021.

[35] Y. Tian, G. Yang, Z. Wang, E. Li, and Z. Liang, ‘Instance segmentation of apple flowers using the improved mask R-CNN model’, Biosyst Eng, vol. 193, pp. 264-278, 2020.

[36] G. Lin, Y. Tang, X. Zou, and C. Wang, ‘Three-dimensional reconstruction of guava fruits and branches using instance segmentation and geometry analysis’, Comput Electron Agric, vol. 184, p. 106107, 2021.

[37] K. Jha, A. Doshi, P. Patel, and M. Shah, ‘A comprehensive review on automation in agriculture using artificial intelligence’, Artificial Intelligence in Agriculture, vol. 2, pp. 1-12, 2019.

[38] A. You et al., ‘Semiautonomous Precision Pruning of Upright Fruiting Offshoot Orchard Systems: An Integrated Approach’, IEEE Robot Autom Mag, 2023.

[39] W. Jia, Y. Tian, R. Luo, Z. Zhang, J. Lian, and Y. Zheng, ‘Detection and segmentation of overlapped fruits based on optimized mask R-CNN application in apple harvesting robot’, Comput Electron Agric, vol. 172, p. 105380, 2020.

[40] Y. Yu, K. Zhang, L. Yang, and D. Zhang, ‘Fruit detection for strawberry harvesting robot in non-structural environment based on Mask-RCNN’, Comput Electron Agric, vol. 163, p. 104846, 2019.

[41] L. Zu, Y. Zhao, J. Liu, F. Su, Y. Zhang, and P. Liu, ‘Detection and segmentation of mature green tomatoes based on mask R-CNN with automatic image acquisition approach’, Sensors, vol. 21, no. 23, p. 7842, 2021.

[42] S. Xie, C. Hu, M. Bagavathiannan, and D. Song, ‘Toward robotic weed control: detection of nutsedge weed in bermudagrass turf using inaccurate and insufficient training data’, IEEE Robot Autom Lett, vol. 6, no. 4, pp. 7365-7372, 2021.

[43] J. Champ, A. Mora-Fallas, H. Goëau, E. Mata-Montero, P. Bonnet, and A. Joly, ‘Instance segmentation for the fine detection of crop and weed plants by precision agricultural robots’, Appl Plant Sci, vol. 8, no. 7, p. e11373, 2020.

[44] K. He, G. Gkioxari, P. Dollár, and R. Girshick, ‘Mask r-cnn’, in Proceedings of the IEEE international conference on computer vision, 2017, pp. 2961-2969.

[45] S. Wang, G. Sun, B. Zheng, and Y. Du, ‘A crop image segmentation and extraction algorithm based on Mask RCNN’, Entropy, vol. 23, no. 9, p. 1160, 2021.

[46] P. Ganesh, K. Volle, T. F. Burks, and S. S. Mehta, ‘Deep orange: Mask R-CNN based orange detection and segmentation’, IFAC-PapersOnLine, vol. 52, no. 30, pp. 70-75, 2019.

[47] U. Afzaal, B. Bhattarai, Y. R. Pandeya, and J. Lee, ‘An instance segmentation model for strawberry diseases based on mask R-CNN’, Sensors, vol. 21, no. 19, p. 6565, 2021.

[48] T.-L. Lin, H.-Y. Chang, and K.-H. Chen, ‘The pest and disease identification in the growth of sweet peppers using faster R-CNN and mask R-CNN’, Journal of Internet Technology, vol. 21, no. 2, pp. 605-614, 2020.

[49] Z. U. Rehman et al., ‘Recognizing apple leaf diseases using a novel parallel real-time processing framework based on MASK RCNN and transfer learning: An application for smart agriculture’, IET Image Process, vol. 15, no. 10, pp. 2157-2168, 2021.

[50] G. H. Krishnan and T. Rajasenbagam, ‘A Comprehensive Survey for Weed Classification and Detection in Agriculture Lands’, Journal of Information Technology, vol. 3, no. 4, pp. 281-289, 2021.

[51] K. Osorio, A. Puerto, C. Pedraza, D. Jamaica, and L. Rodríguez, ‘A deep learning approach for weed detection in lettuce crops using multispectral images’, AgriEngineering, vol. 2, no. 3, pp. 471-488, 2020.

[52] T. Zhao, Y. Yang, H. Niu, D. Wang, and Y. Chen, ‘Comparing U-Net convolutional network with mask RCNN in the performances of pomegranate tree canopy segmentation’, in Multispectral, hyperspectral, and ultraspectral remote sensing technology, techniques and applications VII, SPIE, 2018, pp. 210-218.

[53] A. Safonova, E. Guirado, Y. Maglinets, D. Alcaraz-Segura, and S. Tabik, ‘Olive tree biovolume from UAV multi-resolution image segmentation with mask R-CNN’, Sensors, vol. 21, no. 5, p. 1617, 2021.

[54] P. Soviany and R. T. Ionescu, ‘Optimizing the trade-off between single-stage and two-stage deep object detectors using image difficulty prediction’, in 2018 20th International Symposium on Symbolic and Numeric Algorithms for Scientific Computing (SYNASC), IEEE, 2018, pp. 209-214.

[55] A. You et al., ‘Semiautonomous Precision Pruning of Upright Fruiting Offshoot Orchard Systems: An Integrated Approach’, IEEE Robot Autom Mag, 2023.

[56] M. Hussain, L. He, J. Schupp, D. Lyons, and P. Heinemann, ‘Green fruit segmentation and orientation estimation for robotic green fruit thinning of apples’, Comput Electron Agric, vol. 207, p. 107734, 2023.

[57] J. Seol, J. Kim, and H. Il Son, ‘Field evaluations of a deep learning-based intelligent spraying robot with flow control for pear orchards’, Precis Agric, vol. 23, no. 2, pp. 712-732, 2022.

[58] B. Ma, J. Du, L. Wang, H. Jiang, and M. Zhou, ‘Automatic branch detection of jujube trees based on 3D reconstruction for dormant pruning using the deep learning-based method’, Comput Electron Agric, vol. 190, p. 106484, 2021.

[59] Y. Fu et al., ‘Skeleton extraction and pruning point identification of jujube tree for dormant pruning using space colonization algorithm’, Front Plant Sci, vol. 13, p. 1103794, 2023.

[60] J. Zhang, L. He, M. Karkee, Q. Zhang, X. Zhang, and Z. Gao, ‘Branch detection for apple trees trained in fruiting wall architecture using depth features and Regions-Convolutional Neural Network (R-CNN)’, Comput Electron Agric, vol. 155, pp. 386-393, 2018.

[61] P. Guadagna et al., ‘Using deep learning for pruning region detection and plant organ segmentation in dormant spur-pruned grapevines’, Precis Agric, pp. 1-23, 2023.

[62] T. Gentilhomme, M. Villamizar, J. Corre, and J.-M. Odobez, ‘Towards smart pruning: ViNet, a deep-learning approach for grapevine structure estimation’, Comput Electron Agric, vol. 207, p. 107736, 2023.

[63] E. Kok, X. Wang, and C. Chen, ‘Obscured tree branches segmentation and 3D reconstruction using deep learning and geometrical constraints’, Comput Electron Agric, vol. 210, p. 107884, 2023.

[64] J. Zhang, L. He, M. Karkee, Q. Zhang, X. Zhang, and Z. Gao, ‘Branch detection for apple trees trained in fruiting wall architecture using depth features and Regions-Convolutional Neural Network (R-CNN)’, Comput Electron Agric, vol. 155, pp. 386-393, 2018.

[65] G. Lin, Y. Tang, X. Zou, and C. Wang, ‘Three-dimensional reconstruction of guava fruits and branches using instance segmentation and geometry analysis’, Comput Electron Agric, vol. 184, p. 106107, 2021.

[66] A. S. Aguiar et al., ‘Bringing semantics to the vineyard: An approach on deep learning-based vine trunk detection’, Agriculture, vol. 11, no. 2, p. 131, 2021.

[67] S. Tong, Y. Yue, W. Li, Y. Wang, F. Kang, and C. Feng, ‘Branch Identification and Junction Points Location for Apple Trees Based on Deep Learning’, Remote Sens (Basel), vol. 14, no. 18, p. 4495, 2022.

[68] R. Xiang, M. Zhang, and J. Zhang, ‘Recognition for stems of tomato plants at night based on a hybrid joint neural network’, Agriculture, vol. 12, no. 6, p. 743, 2022.

[69] L. Wu, J. Ma, Y. Zhao, and H. Liu, ‘Apple detection in complex scene using the improved YOLOv4 model’, Agronomy, vol. 11, no. 3, p. 476, 2021.

[70] W. Chen, J. Zhang, B. Guo, Q. Wei, and Z. Zhu, ‘An apple detection method based on des-YOLO v4 algorithm for harvesting robots in complex environment’, Math Probl Eng, vol. 2021, pp. 1-12, 2021.

[71] Z. Huang, P. Zhang, R. Liu, and D. Li, ‘Immature apple detection method based on improved Yolov3’, ASP Transactions on Internet of Things, vol. 1, no. 1, pp. 9-13, 2021.

[72] Y. Liu, G. Yang, Y. Huang, and Y. Yin, ‘SE-Mask R-CNN: An improved Mask R-CNN for apple detection and segmentation’, Journal of Intelligent & Fuzzy Systems, vol. 41, no. 6, pp. 6715-6725, 2021.

[73] A. Kuznetsova, T. Maleva, and V. Soloviev, ‘YOLOv5 versus YOLOv3 for apple detection’, in CyberPhysical Systems: Modelling and Intelligent Control, Springer, 2021, pp. 349-358.

[74] D. Wang and D. He, ‘Channel pruned YOLO V5s-based deep learning approach for rapid and accurate apple fruitlet detection before fruit thinning’, Biosyst Eng, vol. 210, pp. 271-281, 2021.

[75] S. Tong, Y. Yue, W. Li, Y. Wang, F. Kang, and C. Feng, ‘Branch Identification and Junction Points Location for Apple Trees Based on Deep Learning’, Remote Sens (Basel), vol. 14, no. 18, p. 4495, 2022.

[76] F. Gao et al., ‘A novel apple fruit detection and counting methodology based on deep learning and trunk tracking in modern orchard’, Comput Electron Agric, vol. 197, p. 107000, 2022.

[77] C. Zhang, F. Kang, and Y. Wang, ‘An improved apple object detection method based on lightweight YOLOv4 in complex backgrounds’, Remote Sens (Basel), vol. 14, no. 17, p. 4150, 2022.

[78] S. Lu, W. Chen, X. Zhang, and M. Karkee, ‘Canopy-attention-YOLOv4-based immature/mature apple fruit detection on dense-foliage tree architectures for early crop load estimation’, Comput Electron Agric, vol. 193, p. 106696, 2022.

[79] J. Lv et al., ‘A visual identification method for the apple growth forms in the orchard’, Comput Electron Agric, vol. 197, p. 106954, 2022.

[80] F. Su et al., ‘Tree Trunk and Obstacle Detection in Apple Orchard Based on Improved YOLOv5s Model’, Agronomy, vol. 12, no. 10, p. 2427, 2022.

[81] D. Wang and D. He, ‘Fusion of Mask RCNN and attention mechanism for instance segmentation of apples under complex background’, Comput Electron Agric, vol. 196, p. 106864, 2022.

[82] W. Jia et al., ‘Accurate segmentation of green fruit based on optimized mask RCNN application in complex orchard’, Front Plant Sci, vol. 13, p. 955256, 2022.

[83] P. Cong, J. Zhou, S. Li, K. Lv, and H. Feng, ‘Citrus Tree Crown Segmentation of Orchard Spraying Robot Based on RGB-D Image and Improved Mask R-CNN’, Applied Sciences, vol. 13, no. 1, p. 164, 2022.

[84] M. Karthikeyan, T. S. Subashini, R. Srinivasan, C. Santhanakrishnan, and A. Ahilan, ‘YOLOAPPLE: Augment Yolov3 deep learning algorithm for apple fruit quality detection’, Signal Image Video Process, pp. 1-10, 2023.

[85] L. Ma, L. Zhao, Z. Wang, J. Zhang, and G. Chen, ‘Detection and Counting of Small Target Apples under Complicated Environments by Using Improved YOLOv7-tiny’, Agronomy, vol. 13, no. 5, p. 1419, 2023.

[86] M. Carranza-García, J. Torres-Mateo, P. Lara-Benítez, and J. García-Gutiérrez, ‘On the performance of one-stage and two-stage object detectors in autonomous vehicles using camera data’, Remote Sens (Basel), vol. 13, no. 1, p. 89, 2020.

[87] G. Yang, J. Wang, Z. Nie, H. Yang, and S. Yu, ‘A lightweight YOLOv8 tomato detection algorithm combining feature enhancement and attention’, Agronomy, vol. 13, no. 7, p. 1824, 2023.

[88] X. Yue, K. Qi, X. Na, Y. Zhang, Y. Liu, and C. Liu, ‘Improved YOLOv8-Seg Network for Instance Segmentation of Healthy and Diseased Tomato Plants in the Growth Stage’, Agriculture, vol. 13, no. 8, p. 1643, 2023.

[89] B. Jabir, K. El Moutaouakil, and N. Falih, ‘Developing an Efficient System with Mask R-CNN for Agricultural Applications’, Agris on-line Papers in Economics and Informatics, vol. 15, no. 1, pp. 61-72, 2023.

[90] H. Duong-Trung and N. Duong-Trung, ‘Integrating YOLOv8-agri and DeepSORT for Advanced Motion Detection in Agriculture and Fisheries’, EAI Endorsed Transactions on Industrial Networks and Intelligent Systems, vol. 11, no. 1, pp. e4-e4, 2024.

[91] B. Xu et al., ‘Livestock classification and counting in quadcopter aerial images using Mask R-CNN’, Int

[92] X. Mu, L. He, P. Heinemann, J. Schupp, and M. Karkee, ‘Mask R-CNN based apple flower detection and king flower identification for precision pollination’, Smart Agricultural Technology, vol. 4, p. 100151, 2023.

[93] C. Yu et al., ‘Segmentation and density statistics of mariculture cages from remote sensing images using mask R-CNN’, Information Processing in Agriculture, vol. 9, no. 3, pp. 417-430, 2022.

[94] P. Bharati and A. Pramanik, ‘Deep learning techniques-R-CNN to mask R-CNN: a survey’, Computational Intelligence in Pattern Recognition: Proceedings of CIPR 2019, pp. 657-668, 2020.

[95] G. Hoogenboom, ‘Contribution of agrometeorology to the simulation of crop production and its applications’, Agric For Meteorol, vol. 103, no. 1-2, pp. 137-157, 2000.

[96] L. Zhang, J. Wu, Y. Fan, H. Gao, and Y. Shao, ‘An efficient building extraction method from high spatial resolution remote sensing images based on improved mask R-CNN’, Sensors, vol. 20, no. 5, p. 1465, 2020.

[97] T. Wang et al., ‘Tea picking point detection and location based on Mask-RCNN’, Information Processing in Agriculture, vol. 10, no. 2, pp. 267-275, 2023.

[98] C. Tang et al., ‘A fine recognition method of strawberry ripeness combining Mask R-CNN and region segmentation’, Front Plant Sci, vol. 14, p. 1211830, 2023.

[99] B. R. Amogi, R. Ranjan, and L. R. Khot, ‘Mask R-CNN aided fruit surface temperature monitoring algorithm with edge compute enabled internet of things system for automated apple heat stress management’, Information Processing in Agriculture, 2023.

[100] Y. Li, Q. Fan, H. Huang, Z. Han, and Q. Gu, ‘A modified YOLOv8 detection network for UAV aerial image recognition’, Drones, vol. 7, no. 5, p. 304, 2023.

[101] G. Wang, Y. Chen, P. An, H. Hong, J. Hu, and T. Huang, ‘UAV-YOLOv8: a small-object-detection model based on improved YOLOv8 for UAV aerial photography scenarios’, Sensors, vol. 23, no. 16, p. 7190, 2023.

[102] L. Zhang, G. Ding, C. Li, and D. Li, ‘DCF-Yolov8: An Improved Algorithm for Aggregating Low-Level Features to Detect Agricultural Pests and Diseases’, Agronomy, vol. 13, no. 8, p. 2012, 2023.

[103] X. Wang and J. Liu, ‘Vegetable disease detection using an improved YOLOv8 algorithm in the greenhouse plant environment’, Sci Rep, vol. 14, no. 1, p. 4261, 2024.

[104] G. Yang, J. Wang, Z. Nie, H. Yang, and S. Yu, ‘A lightweight YOLOv8 tomato detection algorithm combining feature enhancement and attention’, Agronomy, vol. 13, no. 7, p. 1824, 2023.

[105] S. Yang, W. Wang, S. Gao, and Z. Deng, ‘Strawberry ripeness detection based on YOLOv8 algorithm fused with LW-Swin Transformer’, Comput Electron Agric, vol. 215, p. 108360, 2023.

[106] R. Sapkota, D. Ahmed, M. Churuvija, and M. Karkee, ‘Immature Green Apple Detection and Sizing in Commercial Orchards using YOLOv8 and Shape Fitting Techniques’, IEEE Access, vol. 12, pp. 43436-43452, 2024.

[107] G. Chen, Y. Hou, T. Cui, H. Li, F. Shangguan, and L. Cao, ‘YOLOv8-CML: A lightweight target detection method for Color-changing melon ripening in intelligent agriculture’, 2023.

[108] J. Wei, Y. Ding, J. Liu, M. Z. Ullah, X. Yin, and W. Jia, ‘Novel green-fruit detection algorithm based on D2D framework’, International Journal of Agricultural and Biological Engineering, vol. 15, no. 1, pp. 251-259, 2022.

[109] M. Sun, L. Xu, R. Luo, Y. Lu, and W. Jia, ‘GHFormer-Net: Towards more accurate small green apple/begonia fruit detection in the nighttime’, Journal of King Saud University-Computer and Information Sciences, vol. 34, no. 7, pp. 4421-4432, 2022.

[110] M. Liu, W. Jia, Z. Wang, Y. Niu, X. Yang, and C. Ruan, ‘An accurate detection and segmentation model of obscured green fruits’, Comput Electron Agric, vol. 197, p. 106984, 2022.

[111] W. Jia et al., ‘FoveaMask: A fast and accurate deep learning model for green fruit instance segmentation’, Comput Electron Agric, vol. 191, p. 106488, 2021.

[112] S. Sun, M. Jiang, D. He, Y. Long, and H. Song, ‘Recognition of green apples in an orchard environment by combining the GrabCut model and Ncut algorithm’, Biosyst Eng, vol. 187, pp. 201-213, 2019.

[113] A. Prabhu and N. S. Rani, ‘Semiautomated Segmentation Model to Extract Fruit Images from Trees’, in 2021 International Conference on Intelligent Technologies (CONIT), IEEE, 2021, pp. 1-13.

[114] Y. Tian, G. Yang, Z. Wang, H. Wang, E. Li, and Z. Liang, ‘Apple detection during different growth stages in orchards using the improved YOLO-V3 model’, Comput Electron Agric, vol. 157, pp. 417-426, 2019.

[115] S. Wan and S. Goudos, ‘Faster R-CNN for multi-class fruit detection using a robotic vision system’, Computer Networks, vol. 168, p. 107036, 2020.

[116] M. Liu, W. Jia, Z. Wang, Y. Niu, X. Yang, and C. Ruan, ‘An accurate detection and segmentation model of obscured green fruits’, Comput Electron Agric, vol. 197, p. 106984, 2022.

[117] E. Kok, X. Wang, and C. Chen, ‘Obscured tree branches segmentation and 3D reconstruction using deep learning and geometrical constraints’, Comput Electron Agric, vol. 210, p. 107884, 2023.

[118] D.-H. Kim, C.-U. Ko, D.-G. Kim, J.-T. Kang, J.-M. Park, and H.-J. Cho, ‘Automated Segmentation of Individual Tree Structures Using Deep Learning over LiDAR Point Cloud Data’, Forests, vol. 14, no. 6, p. 1159, 2023.

[119] R. Sapkota et al., ‘YOLOv10 to Its Genesis: A Decadal and Comprehensive Review of The You Only Look Once Series’, arXiv preprint arXiv:2406.19407, 2024.

[120] A. Wang et al., ‘Yolov10: Real-time end-to-end object detection’, arXiv preprint arXiv:2405.14458, 2024.

DOI: https://doi.org/10.1016/j.aiia.2024.07.001

Publication Date: 2024-07-17

Comparing YOLOv8 and Mask R-CNN for instance segmentation in complex orchard environments

Abstract

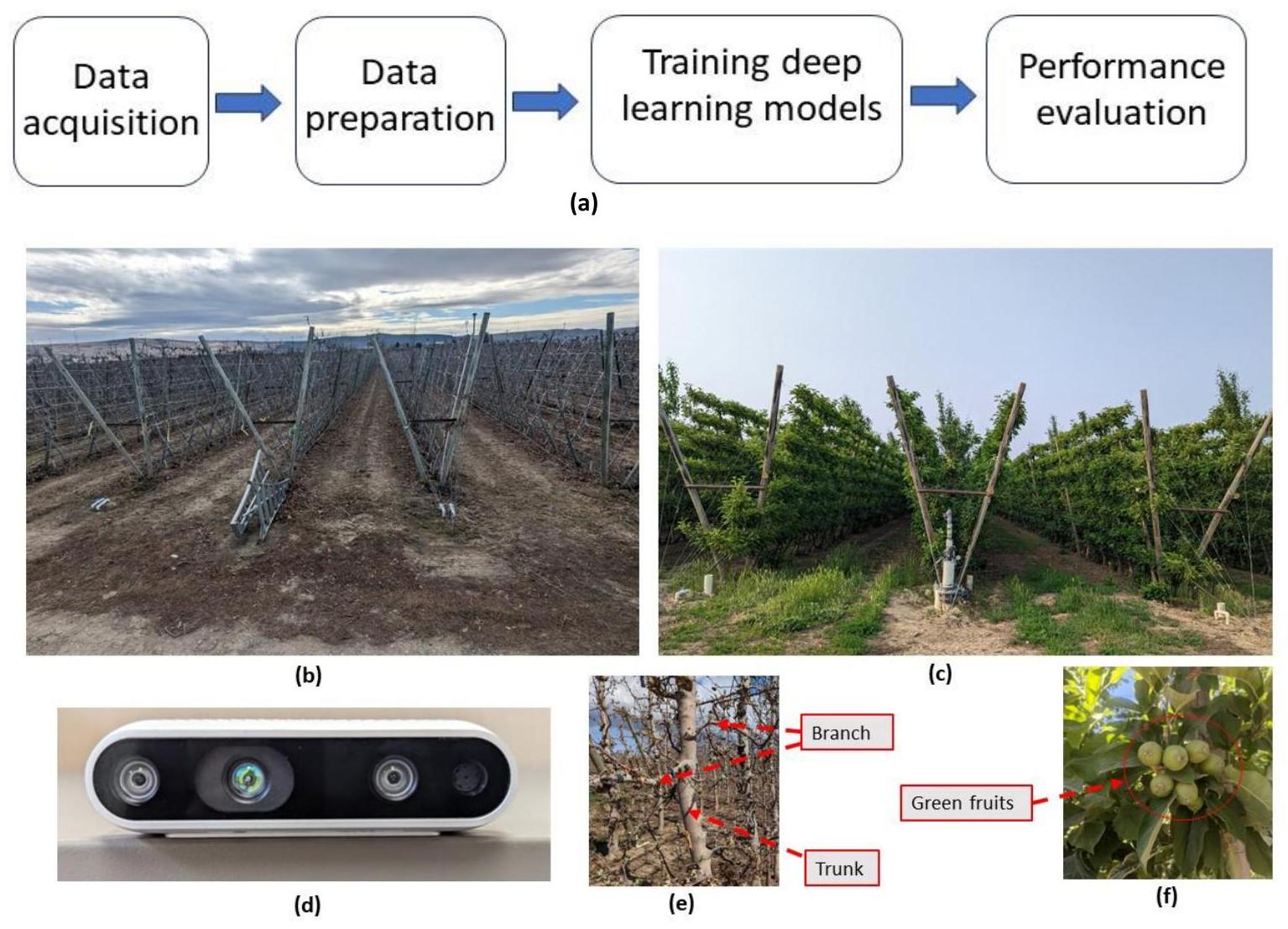

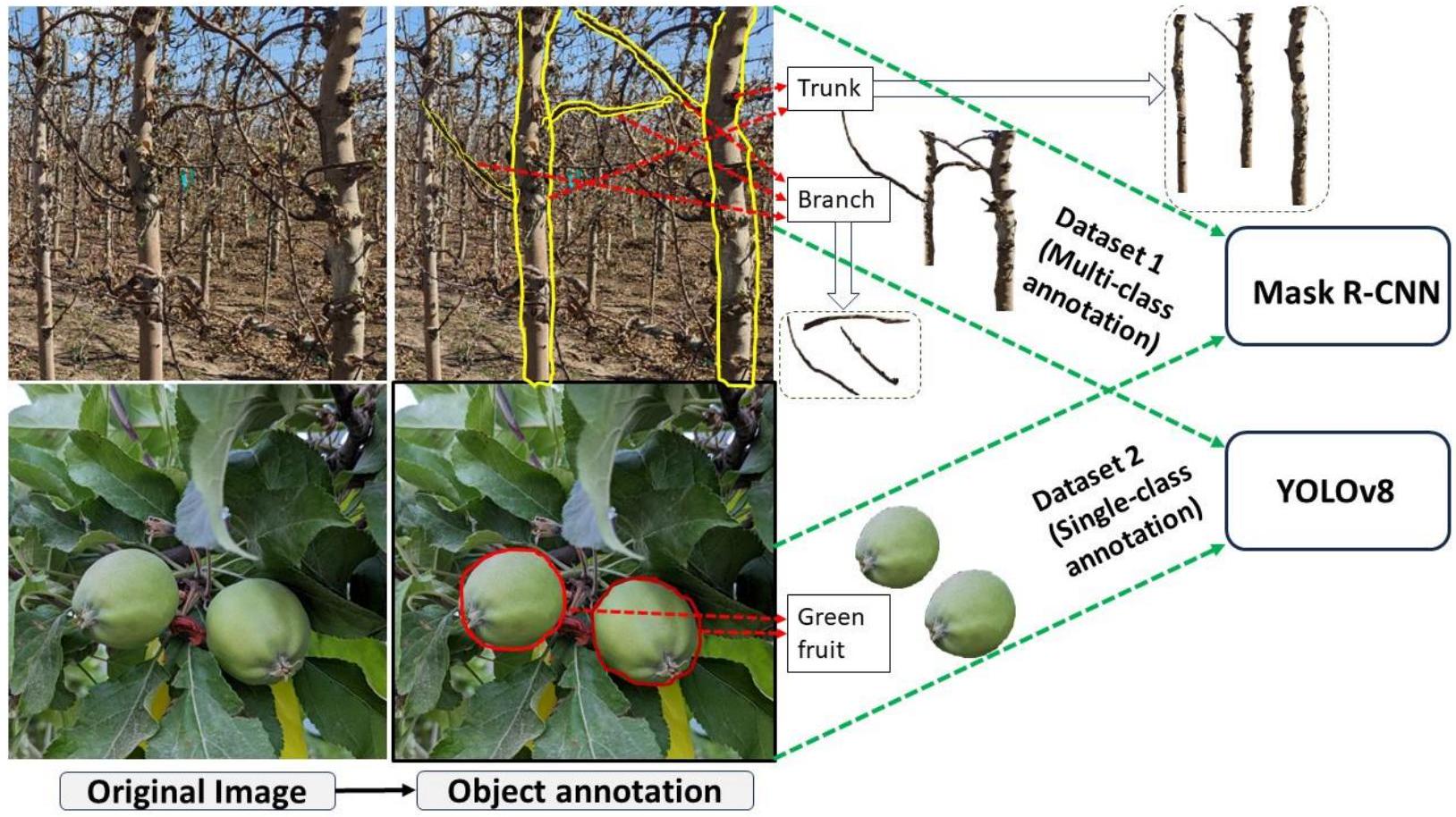

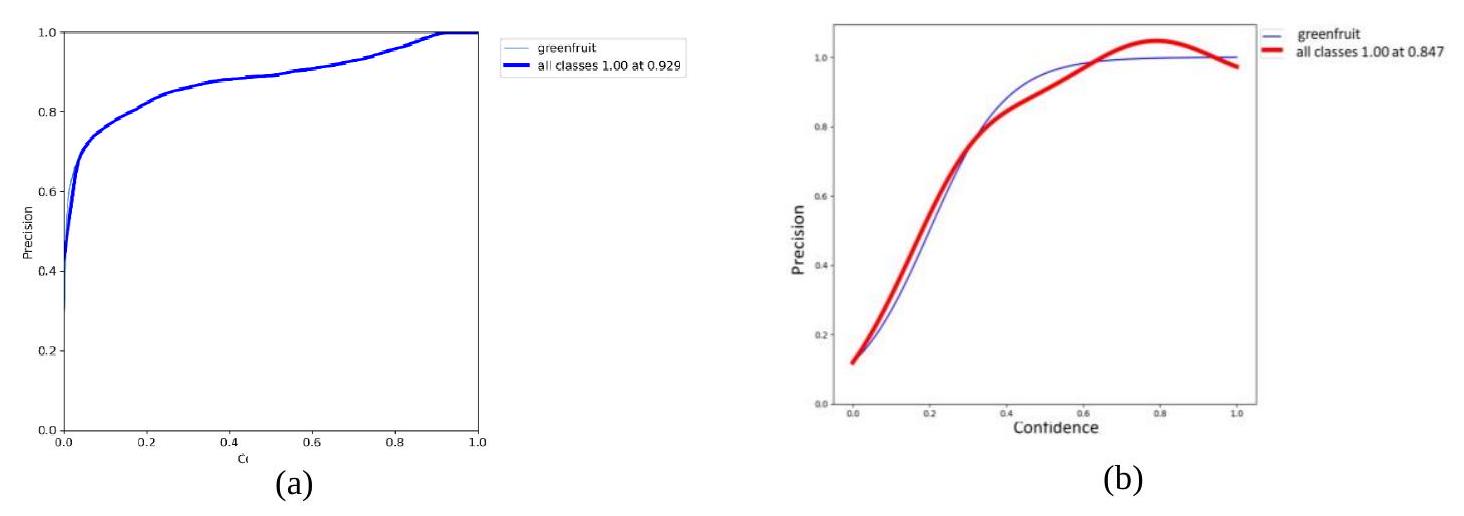

Instance segmentation, an important image processing operation for automation in agriculture, is used to precisely delineate individual objects of interest within images, which provides foundational information for various automated or robotic tasks such as selective harvesting and precision pruning. This study compares the one-stage YOLOv8 and the two-stage Mask R-CNN machine learning models for instance segmentation under varying orchard conditions across two datasets. Dataset 1, collected in the dormant season, includes images of dormant apple trees, which were used to train multi-object segmentation models delineating tree branches and trunks. Dataset 2, collected in the early growing season, includes images of apple tree canopies with green foliage and immature (green) apples (also called fruitlets), which were used to train single-object segmentation models delineating only immature green apples. The results showed that YOLOv8 performed better than Mask R-CNN, achieving good precision and near-perfect recall across both datasets at a confidence threshold of 0.5. Specifically, for Dataset 1, YOLOv8 achieved a precision of 0.90 and a recall of 0.95 for all classes. In comparison, Mask R-CNN demonstrated a precision of 0.81 and a recall of 0.81 for the same dataset. With Dataset 2, YOLOv8 achieved a precision of 0.93 and a recall of 0.97. Mask R-CNN, in this single-class scenario, achieved a precision of 0.85 and a recall of 0.88. Additionally, the inference times for YOLOv8 were 10.9 ms for multi-class segmentation (Dataset 1) and 7.8 ms for single-class segmentation (Dataset 2), compared to 15.6 ms and 12.8 ms for Mask R-CNN, respectively.
These findings show YOLOv8’s superior accuracy and efficiency compared with two-stage models, specifically Mask R-CNN, which suggests its suitability for developing smart and automated orchard operations, particularly when real-time performance is necessary, as in robotic harvesting and robotic thinning of immature green fruit.

1. Introduction

processing time but also enhances the adaptability of the models to new, unseen scenarios, an essential feature for generalizable and scalable agricultural applications. This dynamic learning ability is a significant improvement over traditional methods that are static and constrained by their algorithmic rigidity.

wall apple trees. Segmenting plant canopy parts in dormant grapevines has also been studied widely using different deep learning techniques (e.g., [61], [100]). Other models, such as ViNet [62], have emerged as deep learning solutions for estimating grapevine structure. Further advancements include the application of deep learning and geometric constraints to obscured branch segmentation and three-dimensional reconstruction [63], as well as the use of space colonization algorithms for dormant pruning of jujube trees [59]. Other recent studies in this field include a deep learning-based sensing system (called SPGnet) for jujube trees by Ma et al. [58], branch detection in apple trees using R-CNN by Zhang et al. (2018) [64], and Lin et al.’s tiny Mask R-CNN for guava branch reconstruction [65]. Additionally, Aguiar et al. [66] explored vine trunk detection using a deep learning approach based on a Single Shot MultiBox Detector (SSD). Compared with the performance measures reported for these recent methods in the literature, the YOLOv8 model presented in this study performed better in segmenting tree trunks in terms of precision (0.95), recall (0.97), and

| References | Year | DL model | Objectives |
|---|---|---|---|
| [69], [70] | 2021 | YOLO-V4 | Apple detection in a complex scene |
| [71], [72] | 2021 | Mask R-CNN | Deep learning-based apple detection |
| [71], [73] | 2021 | YOLO-V3 | Green fruit detection (apples, mangoes) |
| [73], [74] | 2021 | YOLO-V5 | Apple fruitlet detection for fruitlet thinning |
| [75] | 2022 | Mask R-CNN | Branch identification and junction point localization in apple trees; trunk identification and segmentation |
| [76], [77] | 2022 | YOLO-V4 | Apple detection, counting, and tree trunk tracking in modern orchards |
| [78] | 2022 | YOLO-V4 | Immature/mature apple detection on dense-foliage tree architectures for early crop-load estimation |
| [79] | 2022 | YOLO-V5 | Identification method for the apple growth pattern in the orchard |
| [80] | 2022 | YOLO-V5 | Tree trunk and obstacle detection in apple orchards |
| [81], [82] | 2022 | Mask R-CNN | Ripe and green apple segmentation in orchards |
| [83] | 2022 | Mask R-CNN | Tree and tree crown segmentation in orchards |
| [84] | 2023 | YOLO-V3 | Apple fruit quality detection |
| [85] | 2023 | YOLO-V7 | Detection and counting of small target apples |
| [56], [82] | 2023 | Mask R-CNN | Green apple segmentation |

- To compare the performances of YOLOv8 and Mask R-CNN models in segmenting single-class objects, specifically green apples (fruitlets), in images collected from variable orchard environments in the early growing season; and

- To evaluate the capabilities of these two models in segmenting multi-class objects, specifically primary branches and tree trunks of apple trees in images collected from a model apple orchard during the dormant season.

predict bounding boxes but also to generate precise object masks, aligning its functionality more closely with that of Mask R-CNN, which has been a standard in instance segmentation. Because both models now offer robust solutions for instance segmentation, comparing them is pertinent and scientifically justified, particularly for agricultural applications in which both detection speed and segmentation accuracy are critical.

2. Deep Learning Models

2.1 Mask R-CNN

backbone network. The bounding box branch predicts the class label and bounding box coordinates for each region proposal, while the mask branch predicts a binary mask for each object instance within the bounding box.
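How the two heads combine for one detection can be illustrated with a small, library-free sketch: the box branch supplies a class label and a box, the mask branch supplies a low-resolution probability grid per class, and the grid for the predicted class is resized to the box and binarized at 0.5. The function name, the nearest-neighbour resizing (implementations typically use bilinear interpolation), and the toy inputs are assumptions for illustration, not the configuration used in this study.

```python
from typing import List, Sequence, Tuple

Grid = Sequence[Sequence[float]]  # m x m mask probabilities for one class


def paste_instance_mask(
    class_grids: Sequence[Grid],     # one m x m grid per class (mask branch)
    label: int,                      # class index from the box branch
    box: Tuple[int, int, int, int],  # (x1, y1, x2, y2) in pixels, x2/y2 exclusive
    image_hw: Tuple[int, int],       # (height, width) of the full image
    threshold: float = 0.5,
) -> List[List[int]]:
    """Turn one detection's mask-branch output into a full-image binary mask.

    Hypothetical helper; nearest-neighbour resizing is a simplification.
    """
    h, w = image_hw
    mask = [[0] * w for _ in range(h)]
    x1, y1, x2, y2 = box
    bw, bh = x2 - x1, y2 - y1
    if bw <= 0 or bh <= 0:
        return mask  # degenerate box: nothing to paste
    grid = class_grids[label]  # only the predicted class's mask is used
    m = len(grid)
    for y in range(max(y1, 0), min(y2, h)):
        gy = min((y - y1) * m // bh, m - 1)  # nearest grid row
        for x in range(max(x1, 0), min(x2, w)):
            gx = min((x - x1) * m // bw, m - 1)  # nearest grid column
            mask[y][x] = 1 if grid[gy][gx] >= threshold else 0
    return mask
```

For example, with a 2 × 2 grid for class 1 whose left column is confident (`[[0.9, 0.1], [0.8, 0.2]]`), `paste_instance_mask(grids, 1, (1, 1, 5, 5), (6, 6))` marks the left half of the 4 × 4 box as foreground, and every pixel outside the box stays 0.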

2.2 YOLOv8

optimizing the processing of low-level features, YOLOv8 becomes a powerful tool for the early detection of subtle signs of agricultural pests and diseases, which is critical for maintaining crop health [102]. For example, enhanced versions of YOLOv8 have been applied to detect diseases in vegetables in greenhouse environments [103], supporting early detection and management of plant diseases. Further innovations include integrating attention mechanisms into YOLOv8 to enhance its object detection capabilities, an approach tested by [104] to improve tomato detection accuracy in cluttered agricultural environments. Similarly, [93] fused YOLOv8 with lightweight transformer architectures to enhance feature extraction, which helped improve strawberry ripeness detection in field environments. In orchard environments, YOLOv8 coupled with shape fitting techniques has been employed for the accurate detection and sizing of immature green apples, which is vital for yield estimation and growth monitoring [106]. Similarly, a specialized YOLOv8 model has been developed for monitoring the ripening of color-changing melons, representing a significant step towards tailored applications in agriculture [107].

3. Materials and Methods

3.1 Study site and data acquisition

3.2 Data preparation

3.3 Deep Learning Model Implementation

| Parameter | Value |
|---|---|
| Hue augmentation (fraction) | 0.015 |
| Saturation augmentation (fraction) | 0.7 |
| Value augmentation (fraction) | 0.4 |
| Rotation | 0.0 |
| Translation | 0.1 |
| Scale | 0.5 |
| Flip left-right (probability) | 0.5 |
| Mosaic (probability) | 1.0 |
| Weight decay | 0.0005 |
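These settings can be expressed as a training configuration. The sketch below assumes the rows map onto the Ultralytics YOLOv8 training arguments `hsv_h`, `hsv_s`, `hsv_v`, `degrees`, `translate`, `scale`, `fliplr`, `mosaic`, and `weight_decay` (the values in the table coincide with the Ultralytics defaults for these arguments); the mapping itself, the dataset file `orchard-seg.yaml`, and the model name are hypothetical placeholders rather than the study's actual files.

```python
# Hypothetical mapping of the table rows to Ultralytics YOLOv8 training
# arguments. Argument names are an assumption; values are from the table.
TABLE_TO_ULTRALYTICS = {
    "Hue augmentation (fraction)": ("hsv_h", 0.015),
    "Saturation augmentation (fraction)": ("hsv_s", 0.7),
    "Value augmentation (fraction)": ("hsv_v", 0.4),
    "Rotation": ("degrees", 0.0),
    "Translation": ("translate", 0.1),
    "Scale": ("scale", 0.5),
    "Flip left-right (probability)": ("fliplr", 0.5),
    "Mosaic (probability)": ("mosaic", 1.0),
    "Weight decay": ("weight_decay", 0.0005),
}


def train_overrides() -> dict:
    """Keyword arguments that could be passed to a YOLO model's train() call."""
    return dict(TABLE_TO_ULTRALYTICS.values())


def cli_command(data: str = "orchard-seg.yaml", model: str = "yolov8n-seg.pt") -> list:
    """Equivalent `yolo segment train key=value ...` command as an argument list.

    `data` and `model` are placeholder names, not the files used in the study.
    """
    args = ["yolo", "segment", "train", f"data={data}", f"model={model}"]
    args += [f"{key}={value}" for key, value in train_overrides().items()]
    return args


if __name__ == "__main__":
    print(" ".join(cli_command()))
```

In Python, the same dictionary could instead be unpacked into `YOLO(model).train(data=..., **train_overrides())`; the CLI form is shown because it makes the row-to-argument mapping easy to inspect.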

3.4 Performance Evaluation

across k categories (equation 4), was crucial in evaluating the model’s precision at a threshold of

These metrics are calculated as follows:
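In their standard form, which is assumed here and is consistent with the variables defined below, precision, recall, the average precision $AP_i$ of class $i$, and the mean average precision over $k$ classes can be written as follows, where $p_i(r)$ denotes the precision of class $i$ as a function of recall $r$:

$$
\text{Precision} = \frac{TP}{TP + FP}, \qquad
\text{Recall} = \frac{TP}{TP + FN},
$$

$$
AP_i = \int_{0}^{1} p_i(r)\, dr, \qquad
mAP = \frac{1}{k} \sum_{i=1}^{k} AP_i.
$$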

where TP, FP, and FN represent true positive, false positive, and false negative object instances, respectively. The variable k represents the total number of object classes, and (AP)
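A minimal sketch of these metrics for a single class is given below. It assumes detections have already been matched to ground truth (for example, a predicted mask counts as a true positive when its IoU with an unmatched ground-truth mask is at least 0.5), and it uses all-point interpolation for AP; the interpolation scheme used in the study is not stated in this section, so that choice is an assumption.

```python
from typing import Sequence


def precision(tp: int, fp: int) -> float:
    """TP / (TP + FP); defined as 0 when there are no predictions."""
    return tp / (tp + fp) if tp + fp else 0.0


def recall(tp: int, fn: int) -> float:
    """TP / (TP + FN); defined as 0 when there is no ground truth."""
    return tp / (tp + fn) if tp + fn else 0.0


def average_precision(scores: Sequence[float], is_tp: Sequence[bool], n_gt: int) -> float:
    """All-point interpolated AP for one class.

    scores: confidence of each prediction; is_tp: whether it matched a
    ground-truth instance; n_gt: number of ground-truth instances.
    """
    if n_gt == 0:
        return 0.0
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for i in order:  # sweep the confidence threshold from high to low
        if is_tp[i]:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / n_gt)
        precisions.append(tp / (tp + fp))
    # Pad the curve, then make precision monotonically non-increasing.
    mrec = [0.0] + recalls + [1.0]
    mpre = [0.0] + precisions + [0.0]
    for j in range(len(mpre) - 2, -1, -1):
        mpre[j] = max(mpre[j], mpre[j + 1])
    # Sum rectangle areas wherever recall increases.
    return sum((mrec[j + 1] - mrec[j]) * mpre[j + 1] for j in range(len(mrec) - 1))


def mean_average_precision(per_class_ap: Sequence[float]) -> float:
    """mAP = (1/k) * sum of AP over the k classes."""
    return sum(per_class_ap) / len(per_class_ap) if per_class_ap else 0.0
```

For example, four detections ranked by confidence with outcomes TP, FP, TP, TP against four ground-truth fruitlets give an AP of 0.625, and averaging per-class APs of 0.625 and 0.875 (e.g. trunk and branch) gives an mAP of 0.75.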

4. Results and Discussion

4.1 Single-class Object Segmentation of Immature Green Apples (Fruitlets)

The performance differences between YOLOv8 and Mask R-CNN generally reflected their distinct architectures and the way they process images. YOLOv8, a one-stage detector designed for both speed and accuracy, was better at excluding non-target areas of similar appearance in the segmentation tasks (Figure 8b). Its direct approach to object detection skips the region proposal step, which likely contributed to fewer false positives in canopy areas that resembled the target fruit in color. Mask R-CNN, on the other hand, uses a two-stage process that generates region proposals before classifying and segmenting objects. This can sometimes result in non-target areas, such as leaves and stems, being misclassified as fruit (Figure 8c). Moreover, its performance appears to be more sensitive to lighting variations, which can lead to object identification errors under extreme lighting conditions such as bright, direct sunlight and dark shadows (Figure 9c). Despite these differences, there are specific situations where Mask R-CNN could still be the preferred choice. Its two-stage process, particularly the region proposal step, can be advantageous in complex segmentation tasks where precision is critical and objects are densely packed or partially obscured. In the past, green fruit segmentation has been investigated using various approaches. Wei et al.’s D2D framework [108], GHFormer’s focus on nighttime detection [109], Liu et al.’s

4.2 Multi-class Object Segmentation in Images of Dormant Apple Trees

| Model | Precision (%) | Recall (%) | mAP@0.5 | Inference time (ms) | Frames per second (FPS) |
|---|---|---|---|---|---|
| YOLOv8 (single-class) | 92.9 | 97 | 0.902 | 7.8 | 128.21 |
| Mask R-CNN (single-class) | 84.7 | 88 | 0.85 | 12.8 | 78.13 |
| YOLOv8 (multi-class) | 90.6 | 95 | 0.74 | 10.9 | 91.74 |
| Mask R-CNN (multi-class) | 81.3 | 83.7 | 0.700 | 15.6 | 64.10 |
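The mAP@0.5 column counts a predicted instance as a true positive only when its mask overlaps a not-yet-matched ground-truth instance with an intersection over union (IoU) of at least 0.5. A minimal sketch of that matching follows, assuming masks are represented as sets of pixel coordinates and are matched greedily in descending order of confidence; this is a common convention, not necessarily the exact evaluator used in the study, and the helper names are hypothetical.

```python
from typing import FrozenSet, List, Sequence, Tuple

Mask = FrozenSet[Tuple[int, int]]  # foreground pixels as (row, col)


def mask_iou(a: Mask, b: Mask) -> float:
    """Intersection over union of two binary masks."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def match_predictions(
    preds: Sequence[Tuple[float, Mask]],  # (confidence, mask) per prediction
    gts: Sequence[Mask],                  # ground-truth masks for one class
    iou_thr: float = 0.5,
) -> List[bool]:
    """Return a true-positive flag for each prediction, in input order."""
    order = sorted(range(len(preds)), key=lambda i: preds[i][0], reverse=True)
    matched = set()
    flags = [False] * len(preds)
    for i in order:  # most confident predictions claim ground truth first
        best_j, best_iou = -1, iou_thr
        for j, gt in enumerate(gts):
            if j in matched:
                continue  # each ground-truth instance can be matched once
            iou = mask_iou(preds[i][1], gt)
            if iou >= best_iou:
                best_j, best_iou = j, iou
        if best_j >= 0:
            matched.add(best_j)
            flags[i] = True
    return flags


def square(r0: int, c0: int, size: int) -> Mask:
    """Build a square toy mask (illustration only)."""
    return frozenset((r, c) for r in range(r0, r0 + size) for c in range(c0, c0 + size))
```

In a toy case with one ground-truth fruitlet `square(0, 0, 4)`, a correct prediction, a leaf mistakenly segmented as fruit, and a lower-confidence duplicate of the same fruitlet (IoU 0.6) yield flags `[True, False, False]`: the duplicate becomes a false positive because the fruitlet was already claimed. These flags, together with the confidences, define the precision-recall curve from which AP is computed.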

4.3 Discussion

YOLOv8 stands out for its speed, achieving 128 FPS (1.64 times faster than Mask R-CNN) for single-class and 92 FPS (1.43 times faster than Mask R-CNN) for multi-class segmentation on the same imaging and computational infrastructure used to test Mask R-CNN. This comparatively high inference speed is particularly advantageous for the real-time agricultural tasks discussed above. Its high precision and recall further indicate robust performance across diverse environmental settings, including variable light conditions. However, while YOLOv8 offers substantial improvements in speed and accuracy, it may sacrifice some segmentation granularity compared with two-stage models such as Mask R-CNN, which makes it slightly less suitable where fine detail is more critical than processing speed.
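The FPS values and speed-up factors follow directly from the inference times, assuming those times are per-image latencies measured one image at a time: FPS = 1000 / t for t in milliseconds, and the speed-up is the ratio of the two models' times (equivalently, of their FPS). A minimal check using the times from the results table:

```python
# Per-image inference times (ms) from the results table.
INFERENCE_MS = {
    ("YOLOv8", "single-class"): 7.8,
    ("Mask R-CNN", "single-class"): 12.8,
    ("YOLOv8", "multi-class"): 10.9,
    ("Mask R-CNN", "multi-class"): 15.6,
}


def fps(ms_per_image: float) -> float:
    """Frames per second for a given per-image latency in milliseconds."""
    return 1000.0 / ms_per_image


def speedup(slow_ms: float, fast_ms: float) -> float:
    """How many times faster the fast model is (equal to the FPS ratio)."""
    return slow_ms / fast_ms


if __name__ == "__main__":
    for setting in ("single-class", "multi-class"):
        yolo = INFERENCE_MS[("YOLOv8", setting)]
        mrcnn = INFERENCE_MS[("Mask R-CNN", setting)]
        print(f"{setting}: YOLOv8 {fps(yolo):.1f} FPS, "
              f"Mask R-CNN {fps(mrcnn):.1f} FPS, "
              f"speed-up {speedup(mrcnn, yolo):.2f}x")
```

This reproduces, to rounding, the FPS column (for example, 1000 / 7.8 ≈ 128.21) and gives speed-ups of about 1.64 for single-class and 1.43 for multi-class segmentation.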

5. Conclusion

- Segmentation Performance in Diverse Conditions: Both YOLOv8 and Mask R-CNN effectively segmented apple tree canopy images from both the dormant and early growing seasons. YOLOv8 performed slightly better where objects and background share similar colors and under varying light intensities.

- Single-Class Segmentation (Immature Green Fruit): YOLOv8 performed better in single-class segmentation of immature green fruit, achieving a precision of 0.92 and a recall of 0.97. In comparison, Mask R-CNN was slightly less effective, with a precision of 0.84 and a recall of 0.88.

- Multi-Class Segmentation (Trunk and Branch Detection): In detecting both trunks and branches, YOLOv8 achieved higher accuracy, with precision and recall of 0.90 and 0.95, respectively. Mask R-CNN achieved lower precision and recall, at 0.81 and 0.83, respectively, indicating reduced effectiveness in multi-class segmentation tasks.

- Inference Speed for Multi-Class Segmentation: YOLOv8 maintains robust performance in multi-class segmentation scenarios with a speed of 91.74 FPS. In contrast, Mask R-CNN’s slower inference speed of 64.10 FPS suggests limitations in handling applications requiring rapid responses.

6. Future Work

Acknowledgement

Author’s Contribution

REFERENCES

[2] Q. Zhang, Y. Liu, C. Gong, Y. Chen, and H. Yu, ‘Applications of deep learning for dense scenes analysis in agriculture: A review’, Sensors, vol. 20, no. 5, p. 1520, 2020.

[3] J. Champ, A. Mora-Fallas, H. Goëau, E. Mata-Montero, P. Bonnet, and A. Joly, ‘Instance segmentation for the fine detection of crop and weed plants by precision agricultural robots’, Appl Plant Sci, vol. 8, no. 7, p. e11373, 2020.

[4] Y. Chen, S. Baireddy, E. Cai, C. Yang, and E. J. Delp, ‘Leaf segmentation by functional modeling’, in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2019, p. 0.

[5] N. Lüling, D. Reiser, and H. W. Griepentrog, ‘Volume and leaf area calculation of cabbage with a neural network-based instance segmentation’, in Precision agriculture’21, Wageningen Academic Publishers, 2021, pp. 2719-2745.

[6] C. Niu, H. Li, Y. Niu, Z. Zhou, Y. Bu, and W. Zheng, ‘Segmentation of cotton leaves based on improved watershed algorithm’, in Computer and Computing Technologies in Agriculture IX: 9th IFIP WG 5.14 International Conference, CCTA 2015, Beijing, China, September 27-30, 2015, Revised Selected Papers, Part I 9, Springer, 2016, pp. 425-436.

[7] V. H. Pham and B. R. Lee, ‘An image segmentation approach for fruit defect detection using k-means clustering and graph-based algorithm’, Vietnam Journal of Computer Science, vol. 2, pp. 25-33, 2015.

[8] M. G. S. Jayanthi and D. R. Shashikumar, ‘Leaf disease segmentation from agricultural images via hybridization of active contour model and OFA’, Journal of Intelligent Systems, vol. 29, no. 1, pp. 35-52, 2017.

[9] J. Clement, N. Novas, J.-A. Gazquez, and F. Manzano-Agugliaro, ‘An active contour computer algorithm for the classification of cucumbers’, Comput Electron Agric, vol. 92, pp. 75-81, 2013.

[10] Y. A. N. Gao, J. F. Mas, N. Kerle, and J. A. Navarrete Pacheco, ‘Optimal region growing segmentation and its effect on classification accuracy’, Int J Remote Sens, vol. 32, no. 13, pp. 3747-3763, 2011.

[11] N. Jothiaruna, K. J. A. Sundar, and B. Karthikeyan, ‘A segmentation method for disease spot images incorporating chrominance in comprehensive color feature and region growing’, Comput Electron Agric, vol. 165, p. 104934, 2019.

[12] J. Ma, K. Du, L. Zhang, F. Zheng, J. Chu, and Z. Sun, ‘A segmentation method for greenhouse vegetable foliar disease spots images using color information and region growing’, Comput Electron Agric, vol. 142, pp. 110-117, 2017.

[13] V. Gupta, N. Sengar, M. K. Dutta, C. M. Travieso, and J. B. Alonso, ‘Automated segmentation of powdery mildew disease from cherry leaves using image processing’, in 2017 International Conference and Workshop on Bioinspired Intelligence (IWOBI), IEEE, 2017, pp. 1-4.

[14] S. D. Khirade and A. B. Patil, ‘Plant disease detection using image processing’, in 2015 International conference on computing communication control and automation, IEEE, 2015, pp. 768-771.

[15] K. Tian, J. Li, J. Zeng, A. Evans, and L. Zhang, ‘Segmentation of tomato leaf images based on adaptive clustering number of K-means algorithm’, Comput Electron Agric, vol. 165, p. 104962, 2019.

[16] T. Arsan and M. M. N. Hameez, ‘A clustering-based approach for improving the accuracy of UWB sensor-based indoor positioning system’, Mobile Information Systems, vol. 2019, pp. 1-13, 2019.

[17] L. C. Ngugi, M. Abelwahab, and M. Abo-Zahhad, ‘Recent advances in image processing techniques for automated leaf pest and disease recognition-A review’, Information processing in agriculture, vol. 8, no. 1, pp. 27-51, 2021.

[18] Q. Zeng, Y. Miao, C. Liu, and S. Wang, ‘Algorithm based on marker-controlled watershed transform for overlapping plant fruit segmentation’, Optical Engineering, vol. 48, no. 2, p. 27201, 2009.

[19] M. G. S. Jayanthi and D. R. Shashikumar, ‘Leaf disease segmentation from agricultural images via hybridization of active contour model and OFA’, Journal of Intelligent Systems, vol. 29, no. 1, pp. 35-52, 2019.

[20] S. Coulibaly, B. Kamsu-Foguem, D. Kamissoko, and D. Traore, ‘Deep learning for precision agriculture: A bibliometric analysis’, Intelligent Systems with Applications, vol. 16, p. 200102, 2022.

[21] N. Siddique, S. Paheding, C. P. Elkin, and V. Devabhaktuni, ‘U-net and its variants for medical image segmentation: A review of theory and applications’, IEEE Access, vol. 9, pp. 82031-82057, 2021.

[22] K. He, G. Gkioxari, P. Dollár, and R. Girshick, ‘Mask R-CNN’, in Proceedings of the IEEE international conference on computer vision, 2017, pp. 2961-2969.

[23] J. Redmon, S. Divvala, R. Girshick, and A. Farhadi, ‘You only look once: Unified, real-time object detection’, in Proceedings of the IEEE conference on computer vision and pattern recognition, 2016, pp. 779-788.

[24] J. Rashid, I. Khan, G. Ali, F. Alturise, and T. Alkhalifah, ‘Real-Time Multiple Guava Leaf Disease Detection from a Single Leaf Using Hybrid Deep Learning Technique.’, Computers, Materials & Continua, vol. 74, no. 1, 2023.

[25] Y. Tian, G. Yang, Z. Wang, E. Li, and Z. Liang, ‘Instance segmentation of apple flowers using the improved mask R-CNN model’, Biosyst Eng, vol. 193, pp. 264-278, 2020.

[26] A. K. Maji, S. Marwaha, S. Kumar, A. Arora, V. Chinnusamy, and S. Islam, ‘SlypNet: Spikelet-based yield prediction of wheat using advanced plant phenotyping and computer vision techniques’, Front Plant Sci, vol. 13, p. 889853, 2022.

[27] J. Liu and X. Wang, ‘Tomato diseases and pests detection based on improved Yolo V3 convolutional neural network’, Front Plant Sci, vol. 11, p. 898, 2020.

[28] M. Lippi, N. Bonucci, R. F. Carpio, M. Contarini, S. Speranza, and A. Gasparri, ‘A yolo-based pest detection system for precision agriculture’, in 2021 29th Mediterranean Conference on Control and Automation (MED), IEEE, 2021, pp. 342-347.

[29] X. Qu, J. Wang, X. Wang, Y. Hu, T. Zeng, and T. Tan, ‘Gravelly soil uniformity identification based on the optimized Mask R-CNN model’, Expert Syst Appl, vol. 212, p. 118837, 2023.

[30] L. Zu, Y. Zhao, J. Liu, F. Su, Y. Zhang, and P. Liu, ‘Detection and segmentation of mature green tomatoes based on mask R-CNN with automatic image acquisition approach’, Sensors, vol. 21, no. 23, p. 7842, 2021.

[31] Q. Wang, M. Cheng, S. Huang, Z. Cai, J. Zhang, and H. Yuan, ‘A deep learning approach incorporating YOLO v5 and attention mechanisms for field real-time detection of the invasive weed Solanum rostratum Dunal seedlings’, Comput Electron Agric, vol. 199, p. 107194, 2022.

[32] H. Li et al., ‘Design of field real-time target spraying system based on improved YOLOv5’, Front Plant Sci, vol. 13, p. 1072631, 2022.

[33] C. Hu, J. A. Thomasson, and M. V Bagavathiannan, ‘A powerful image synthesis and semi-supervised learning pipeline for site-specific weed detection’, Comput Electron Agric, vol. 190, p. 106423, 2021.

[34] S. Chen et al., ‘An approach for rice bacterial leaf streak disease segmentation and disease severity estimation’, Agriculture, vol. 11, no. 5, p. 420, 2021.

[35] Y. Tian, G. Yang, Z. Wang, E. Li, and Z. Liang, ‘Instance segmentation of apple flowers using the improved mask R-CNN model’, Biosyst Eng, vol. 193, pp. 264-278, 2020.

[36] G. Lin, Y. Tang, X. Zou, and C. Wang, ‘Three-dimensional reconstruction of guava fruits and branches using instance segmentation and geometry analysis’, Comput Electron Agric, vol. 184, p. 106107, 2021.

[37] K. Jha, A. Doshi, P. Patel, and M. Shah, ‘A comprehensive review on automation in agriculture using artificial intelligence’, Artificial Intelligence in Agriculture, vol. 2, pp. 1-12, 2019.

[38] A. You et al., ‘Semiautonomous Precision Pruning of Upright Fruiting Offshoot Orchard Systems: An Integrated Approach’, IEEE Robot Autom Mag, 2023.

[39] W. Jia, Y. Tian, R. Luo, Z. Zhang, J. Lian, and Y. Zheng, ‘Detection and segmentation of overlapped fruits based on optimized mask R-CNN application in apple harvesting robot’, Comput Electron Agric, vol. 172, p. 105380, 2020.

[40] Y. Yu, K. Zhang, L. Yang, and D. Zhang, ‘Fruit detection for strawberry harvesting robot in non-structural environment based on Mask-RCNN’, Comput Electron Agric, vol. 163, p. 104846, 2019.

[41] L. Zu, Y. Zhao, J. Liu, F. Su, Y. Zhang, and P. Liu, ‘Detection and segmentation of mature green tomatoes based on mask R-CNN with automatic image acquisition approach’, Sensors, vol. 21, no. 23, p. 7842, 2021.

[42] S. Xie, C. Hu, M. Bagavathiannan, and D. Song, ‘Toward robotic weed control: detection of nutsedge weed in bermudagrass turf using inaccurate and insufficient training data’, IEEE Robot Autom Lett, vol. 6, no. 4, pp. 7365-7372, 2021.

[43] J. Champ, A. Mora-Fallas, H. Goëau, E. Mata-Montero, P. Bonnet, and A. Joly, ‘Instance segmentation for the fine detection of crop and weed plants by precision agricultural robots’, Appl Plant Sci, vol. 8, no. 7, p. e11373, 2020.

[44] K. He, G. Gkioxari, P. Dollár, and R. Girshick, ‘Mask R-CNN’, in Proceedings of the IEEE international conference on computer vision, 2017, pp. 2961-2969.

[45] S. Wang, G. Sun, B. Zheng, and Y. Du, ‘A crop image segmentation and extraction algorithm based on Mask RCNN’, Entropy, vol. 23, no. 9, p. 1160, 2021.

[46] P. Ganesh, K. Volle, T. F. Burks, and S. S. Mehta, ‘Deep orange: Mask R-CNN based orange detection and segmentation’, IFAC-PapersOnLine, vol. 52, no. 30, pp. 70-75, 2019.

[47] U. Afzaal, B. Bhattarai, Y. R. Pandeya, and J. Lee, ‘An instance segmentation model for strawberry diseases based on mask R-CNN’, Sensors, vol. 21, no. 19, p. 6565, 2021.

[48] T.-L. Lin, H.-Y. Chang, and K.-H. Chen, ‘The pest and disease identification in the growth of sweet peppers using faster R-CNN and mask R-CNN’, Journal of Internet Technology, vol. 21, no. 2, pp. 605-614, 2020.

[49] Z. U. Rehman et al., ‘Recognizing apple leaf diseases using a novel parallel real-time processing framework based on MASK RCNN and transfer learning: An application for smart agriculture’, IET Image Process, vol. 15, no. 10, pp. 2157-2168, 2021.

[50] G. H. Krishnan and T. Rajasenbagam, ‘A Comprehensive Survey for Weed Classification and Detection in Agriculture Lands’, Journal of Information Technology, vol. 3, no. 4, pp. 281-289, 2021.

[51] K. Osorio, A. Puerto, C. Pedraza, D. Jamaica, and L. Rodríguez, ‘A deep learning approach for weed detection in lettuce crops using multispectral images’, AgriEngineering, vol. 2, no. 3, pp. 471-488, 2020.

[52] T. Zhao, Y. Yang, H. Niu, D. Wang, and Y. Chen, ‘Comparing U-Net convolutional network with mask RCNN in the performances of pomegranate tree canopy segmentation’, in Multispectral, hyperspectral, and ultraspectral remote sensing technology, techniques and applications VII, SPIE, 2018, pp. 210-218.

[53] A. Safonova, E. Guirado, Y. Maglinets, D. Alcaraz-Segura, and S. Tabik, ‘Olive tree biovolume from UAV multi-resolution image segmentation with mask R-CNN’, Sensors, vol. 21, no. 5, p. 1617, 2021.

[54] P. Soviany and R. T. Ionescu, ‘Optimizing the trade-off between single-stage and two-stage deep object detectors using image difficulty prediction’, in 2018 20th International Symposium on Symbolic and Numeric Algorithms for Scientific Computing (SYNASC), IEEE, 2018, pp. 209-214.

[55] A. You et al., ‘Semiautonomous Precision Pruning of Upright Fruiting Offshoot Orchard Systems: An Integrated Approach’, IEEE Robot Autom Mag, 2023.

[56] M. Hussain, L. He, J. Schupp, D. Lyons, and P. Heinemann, ‘Green fruit segmentation and orientation estimation for robotic green fruit thinning of apples’, Comput Electron Agric, vol. 207, p. 107734, 2023.

[57] J. Seol, J. Kim, and H. Il Son, ‘Field evaluations of a deep learning-based intelligent spraying robot with flow control for pear orchards’, Precis Agric, vol. 23, no. 2, pp. 712-732, 2022.

[58] B. Ma, J. Du, L. Wang, H. Jiang, and M. Zhou, ‘Automatic branch detection of jujube trees based on 3D reconstruction for dormant pruning using the deep learning-based method’, Comput Electron Agric, vol. 190, p. 106484, 2021.

[59] Y. Fu et al., ‘Skeleton extraction and pruning point identification of jujube tree for dormant pruning using space colonization algorithm’, Front Plant Sci, vol. 13, p. 1103794, 2023.

[60] J. Zhang, L. He, M. Karkee, Q. Zhang, X. Zhang, and Z. Gao, ‘Branch detection for apple trees trained in fruiting wall architecture using depth features and Regions-Convolutional Neural Network (R-CNN)’, Comput Electron Agric, vol. 155, pp. 386-393, 2018.

[61] P. Guadagna et al., ‘Using deep learning for pruning region detection and plant organ segmentation in dormant spur-pruned grapevines’, Precis Agric, pp. 1-23, 2023.

[62] T. Gentilhomme, M. Villamizar, J. Corre, and J.-M. Odobez, ‘Towards smart pruning: ViNet, a deep-learning approach for grapevine structure estimation’, Comput Electron Agric, vol. 207, p. 107736, 2023.

[63] E. Kok, X. Wang, and C. Chen, ‘Obscured tree branches segmentation and 3D reconstruction using deep learning and geometrical constraints’, Comput Electron Agric, vol. 210, p. 107884, 2023.

[64] J. Zhang, L. He, M. Karkee, Q. Zhang, X. Zhang, and Z. Gao, ‘Branch detection for apple trees trained in fruiting wall architecture using depth features and Regions-Convolutional Neural Network (R-CNN)’, Comput Electron Agric, vol. 155, pp. 386-393, 2018.

[65] G. Lin, Y. Tang, X. Zou, and C. Wang, ‘Three-dimensional reconstruction of guava fruits and branches using instance segmentation and geometry analysis’, Comput Electron Agric, vol. 184, p. 106107, 2021.

[66] A. S. Aguiar et al., ‘Bringing semantics to the vineyard: An approach on deep learning-based vine trunk detection’, Agriculture, vol. 11, no. 2, p. 131, 2021.

[67] S. Tong, Y. Yue, W. Li, Y. Wang, F. Kang, and C. Feng, ‘Branch Identification and Junction Points Location for Apple Trees Based on Deep Learning’, Remote Sens (Basel), vol. 14, no. 18, p. 4495, 2022.

[68] R. Xiang, M. Zhang, and J. Zhang, ‘Recognition for stems of tomato plants at night based on a hybrid joint neural network’, Agriculture, vol. 12, no. 6, p. 743, 2022.

[69] L. Wu, J. Ma, Y. Zhao, and H. Liu, ‘Apple detection in complex scene using the improved YOLOv4 model’, Agronomy, vol. 11, no. 3, p. 476, 2021.

[70] W. Chen, J. Zhang, B. Guo, Q. Wei, and Z. Zhu, ‘An apple detection method based on des-YOLO v4 algorithm for harvesting robots in complex environment’, Math Probl Eng, vol. 2021, pp. 1-12, 2021.

[71] Z. Huang, P. Zhang, R. Liu, and D. Li, ‘Immature apple detection method based on improved Yolov3’, ASP Transactions on Internet of Things, vol. 1, no. 1, pp. 9-13, 2021.

[72] Y. Liu, G. Yang, Y. Huang, and Y. Yin, ‘SE-Mask R-CNN: An improved Mask R-CNN for apple detection and segmentation’, Journal of Intelligent & Fuzzy Systems, vol. 41, no. 6, pp. 6715-6725, 2021.

[73] A. Kuznetsova, T. Maleva, and V. Soloviev, ‘YOLOv5 versus YOLOv3 for apple detection’, in Cyber-Physical Systems: Modelling and Intelligent Control, Springer, 2021, pp. 349-358.

[74] D. Wang and D. He, ‘Channel pruned YOLO V5s-based deep learning approach for rapid and accurate apple fruitlet detection before fruit thinning’, Biosyst Eng, vol. 210, pp. 271-281, 2021.

[75] S. Tong, Y. Yue, W. Li, Y. Wang, F. Kang, and C. Feng, ‘Branch Identification and Junction Points Location for Apple Trees Based on Deep Learning’, Remote Sens (Basel), vol. 14, no. 18, p. 4495, 2022.

[76] F. Gao et al., ‘A novel apple fruit detection and counting methodology based on deep learning and trunk tracking in modern orchard’, Comput Electron Agric, vol. 197, p. 107000, 2022.

[77] C. Zhang, F. Kang, and Y. Wang, ‘An improved apple object detection method based on lightweight YOLOv4 in complex backgrounds’, Remote Sens (Basel), vol. 14, no. 17, p. 4150, 2022.

[78] S. Lu, W. Chen, X. Zhang, and M. Karkee, ‘Canopy-attention-YOLOv4-based immature/mature apple fruit detection on dense-foliage tree architectures for early crop load estimation’, Comput Electron Agric, vol. 193, p. 106696, 2022.

[79] J. Lv et al., ‘A visual identification method for the apple growth forms in the orchard’, Comput Electron Agric, vol. 197, p. 106954, 2022.

[80] F. Su et al., ‘Tree Trunk and Obstacle Detection in Apple Orchard Based on Improved YOLOv5s Model’, Agronomy, vol. 12, no. 10, p. 2427, 2022.

[81] D. Wang and D. He, ‘Fusion of Mask RCNN and attention mechanism for instance segmentation of apples under complex background’, Comput Electron Agric, vol. 196, p. 106864, 2022.

[82] W. Jia et al., ‘Accurate segmentation of green fruit based on optimized mask RCNN application in complex orchard’, Front Plant Sci, vol. 13, p. 955256, 2022.

[83] P. Cong, J. Zhou, S. Li, K. Lv, and H. Feng, ‘Citrus Tree Crown Segmentation of Orchard Spraying Robot Based on RGB-D Image and Improved Mask R-CNN’, Applied Sciences, vol. 13, no. 1, p. 164, 2022.

[84] M. Karthikeyan, T. S. Subashini, R. Srinivasan, C. Santhanakrishnan, and A. Ahilan, ‘YOLOAPPLE: Augment Yolov3 deep learning algorithm for apple fruit quality detection’, Signal Image Video Process, pp. 1-10, 2023.

[85] L. Ma, L. Zhao, Z. Wang, J. Zhang, and G. Chen, ‘Detection and Counting of Small Target Apples under Complicated Environments by Using Improved YOLOv7-tiny’, Agronomy, vol. 13, no. 5, p. 1419, 2023.

[86] M. Carranza-García, J. Torres-Mateo, P. Lara-Benítez, and J. García-Gutiérrez, ‘On the performance of one-stage and two-stage object detectors in autonomous vehicles using camera data’, Remote Sens (Basel), vol. 13, no. 1, p. 89, 2020.

[87] G. Yang, J. Wang, Z. Nie, H. Yang, and S. Yu, ‘A lightweight YOLOv8 tomato detection algorithm combining feature enhancement and attention’, Agronomy, vol. 13, no. 7, p. 1824, 2023.

[88] X. Yue, K. Qi, X. Na, Y. Zhang, Y. Liu, and C. Liu, ‘Improved YOLOv8-Seg Network for Instance Segmentation of Healthy and Diseased Tomato Plants in the Growth Stage’, Agriculture, vol. 13, no. 8, p. 1643, 2023.

[89] B. Jabir, K. El Moutaouakil, and N. Falih, ‘Developing an Efficient System with Mask R-CNN for Agricultural Applications’, Agris on-line Papers in Economics and Informatics, vol. 15, no. 1, pp. 61-72, 2023.

[90] H. Duong-Trung and N. Duong-Trung, ‘Integrating YOLOv8-agri and DeepSORT for Advanced Motion Detection in Agriculture and Fisheries’, EAI Endorsed Transactions on Industrial Networks and Intelligent Systems, vol. 11, no. 1, pp. e4-e4, 2024.

[91] B. Xu et al., ‘Livestock classification and counting in quadcopter aerial images using Mask R-CNN’, Int

[92] X. Mu, L. He, P. Heinemann, J. Schupp, and M. Karkee, ‘Mask R-CNN based apple flower detection and king flower identification for precision pollination’, Smart Agricultural Technology, vol. 4, p. 100151, 2023.

[93] C. Yu et al., ‘Segmentation and density statistics of mariculture cages from remote sensing images using mask R-CNN’, Information Processing in Agriculture, vol. 9, no. 3, pp. 417-430, 2022.

[94] P. Bharati and A. Pramanik, ‘Deep learning techniques-R-CNN to mask R-CNN: a survey’, Computational Intelligence in Pattern Recognition: Proceedings of CIPR 2019, pp. 657-668, 2020.

[95] G. Hoogenboom, ‘Contribution of agrometeorology to the simulation of crop production and its applications’, Agric For Meteorol, vol. 103, no. 1-2, pp. 137-157, 2000.

[96] L. Zhang, J. Wu, Y. Fan, H. Gao, and Y. Shao, ‘An efficient building extraction method from high spatial resolution remote sensing images based on improved mask R-CNN’, Sensors, vol. 20, no. 5, p. 1465, 2020.

[97] T. Wang et al., ‘Tea picking point detection and location based on Mask-RCNN’, Information Processing in Agriculture, vol. 10, no. 2, pp. 267-275, 2023.

[98] C. Tang et al., ‘A fine recognition method of strawberry ripeness combining Mask R-CNN and region segmentation’, Front Plant Sci, vol. 14, p. 1211830, 2023.

[99] B. R. Amogi, R. Ranjan, and L. R. Khot, ‘Mask R-CNN aided fruit surface temperature monitoring algorithm with edge compute enabled internet of things system for automated apple heat stress management’, Information Processing in Agriculture, 2023.

[100] Y. Li, Q. Fan, H. Huang, Z. Han, and Q. Gu, ‘A modified YOLOv8 detection network for UAV aerial image recognition’, Drones, vol. 7, no. 5, p. 304, 2023.

[101] G. Wang, Y. Chen, P. An, H. Hong, J. Hu, and T. Huang, ‘UAV-YOLOv8: a small-object-detection model based on improved YOLOv8 for UAV aerial photography scenarios’, Sensors, vol. 23, no. 16, p. 7190, 2023.

[102] L. Zhang, G. Ding, C. Li, and D. Li, ‘DCF-Yolov8: An Improved Algorithm for Aggregating Low-Level Features to Detect Agricultural Pests and Diseases’, Agronomy, vol. 13, no. 8, p. 2012, 2023.

[103] X. Wang and J. Liu, ‘Vegetable disease detection using an improved YOLOv8 algorithm in the greenhouse plant environment’, Sci Rep, vol. 14, no. 1, p. 4261, 2024.

[104] G. Yang, J. Wang, Z. Nie, H. Yang, and S. Yu, ‘A lightweight YOLOv8 tomato detection algorithm combining feature enhancement and attention’, Agronomy, vol. 13, no. 7, p. 1824, 2023.

[105] S. Yang, W. Wang, S. Gao, and Z. Deng, ‘Strawberry ripeness detection based on YOLOv8 algorithm fused with LW-Swin Transformer’, Comput Electron Agric, vol. 215, p. 108360, 2023.

[106] R. Sapkota, D. Ahmed, M. Churuvija, and M. Karkee, ‘Immature Green Apple Detection and Sizing in Commercial Orchards using YOLOv8 and Shape Fitting Techniques’, IEEE Access, vol. 12, pp. 43436-43452, 2024.

[107] G. Chen, Y. Hou, T. Cui, H. Li, F. Shangguan, and L. Cao, ‘YOLOv8-CML: A lightweight target detection method for Color-changing melon ripening in intelligent agriculture’, 2023.

[108] J. Wei, Y. Ding, J. Liu, M. Z. Ullah, X. Yin, and W. Jia, ‘Novel green-fruit detection algorithm based on D2D framework’, International Journal of Agricultural and Biological Engineering, vol. 15, no. 1, pp. 251-259, 2022.

[109] M. Sun, L. Xu, R. Luo, Y. Lu, and W. Jia, ‘GHFormer-Net: Towards more accurate small green apple/begonia fruit detection in the nighttime’, Journal of King Saud University-Computer and Information Sciences, vol. 34, no. 7, pp. 4421-4432, 2022.

[110] M. Liu, W. Jia, Z. Wang, Y. Niu, X. Yang, and C. Ruan, ‘An accurate detection and segmentation model of obscured green fruits’, Comput Electron Agric, vol. 197, p. 106984, 2022.

[111] W. Jia et al., ‘FoveaMask: A fast and accurate deep learning model for green fruit instance segmentation’, Comput Electron Agric, vol. 191, p. 106488, 2021.

[112] S. Sun, M. Jiang, D. He, Y. Long, and H. Song, ‘Recognition of green apples in an orchard environment by combining the GrabCut model and Ncut algorithm’, Biosyst Eng, vol. 187, pp. 201-213, 2019.

[113] A. Prabhu and N. S. Rani, ‘Semiautomated Segmentation Model to Extract Fruit Images from Trees’, in 2021 International Conference on Intelligent Technologies (CONIT), IEEE, 2021, pp. 1-13.

[114] Y. Tian, G. Yang, Z. Wang, H. Wang, E. Li, and Z. Liang, ‘Apple detection during different growth stages in orchards using the improved YOLO-V3 model’, Comput Electron Agric, vol. 157, pp. 417-426, 2019.

[115] S. Wan and S. Goudos, ‘Faster R-CNN for multi-class fruit detection using a robotic vision system’, Computer Networks, vol. 168, p. 107036, 2020.

[116] M. Liu, W. Jia, Z. Wang, Y. Niu, X. Yang, and C. Ruan, ‘An accurate detection and segmentation model of obscured green fruits’, Comput Electron Agric, vol. 197, p. 106984, 2022.

[117] E. Kok, X. Wang, and C. Chen, ‘Obscured tree branches segmentation and 3D reconstruction using deep learning and geometrical constraints’, Comput Electron Agric, vol. 210, p. 107884, 2023.

[118] D.-H. Kim, C.-U. Ko, D.-G. Kim, J.-T. Kang, J.-M. Park, and H.-J. Cho, ‘Automated Segmentation of Individual Tree Structures Using Deep Learning over LiDAR Point Cloud Data’, Forests, vol. 14, no. 6, p. 1159, 2023.

[119] R. Sapkota et al., ‘YOLOv10 to Its Genesis: A Decadal and Comprehensive Review of The You Only Look Once Series’, arXiv preprint arXiv:2406.19407, 2024.

[120] A. Wang et al., ‘Yolov10: Real-time end-to-end object detection’, arXiv preprint arXiv:2405.14458, 2024.