DOI: https://doi.org/10.2196/59479

PMID: https://pubmed.ncbi.nlm.nih.gov/39105570

Publication Date: 2024-07-29

The Opportunities and Risks of Large Language Models in Mental Health

Abstract

Global rates of mental health concerns are rising, and there is increasing realization that existing models of mental health care will not adequately expand to meet the demand. With the emergence of large language models (LLMs) has come great optimism regarding their promise to create novel, large-scale solutions to support mental health. Despite their nascence, LLMs have already been applied to mental health-related tasks. In this paper, we summarize the extant literature on efforts to use LLMs to provide mental health education, assessment, and intervention and highlight key opportunities for positive impact in each area. We then highlight risks associated with LLMs' application to mental health and encourage the adoption of strategies to mitigate these risks. The urgent need for mental health support must be balanced with responsible development, testing, and deployment of mental health LLMs. It is especially critical to ensure that mental health LLMs are fine-tuned for mental health, enhance mental health equity, and adhere to ethical standards and that people, including those with lived experience with mental health concerns, are involved in all stages from development through deployment. Prioritizing these efforts will minimize potential harms to mental health and maximize the likelihood that LLMs will positively impact mental health globally.

Keywords: artificial intelligence; AI; generative AI; large language models; mental health; mental health education; language model; mental health care; health equity; ethical; development; deployment

Introduction

regarding their application to mental health and their potential to provide such solutions due to their relevance to mental health education, assessment, and intervention. LLMs are artificial intelligence models trained using extensive data sets to predict language sequences [6]. By leveraging huge neural architectures, LLMs can organize complex and abstract concepts. This enables them to identify, translate, predict, and generate new content. LLMs can be fine-tuned for specific domains (eg, mental health) and enable interactions in natural language, as do many mental health assessments and interventions, highlighting the enormous potential they have to revolutionize mental health care. In this paper, we first summarize the research done to date applying LLMs to mental health. Then, we highlight key opportunities and risks associated with mental health LLMs and put forth suggested risk mitigation strategies. Finally, we make recommendations for the responsible use of LLMs in the mental health domain.

Applications of LLMs to Mental Health

Overview

Education

Assessment

specialist medical doctors in response to challenging case studies, some of which involved psychiatric diagnoses [22].

Intervention

maintain therapeutic conversations [14] and that individuals can establish therapeutic rapport with chatbots [41].

Risks Associated With Mental Health LLMs

Overview

steps to mitigate risks, and establishing plans to monitor for ongoing or new and unexpected risks

- LLMs should only engage in mental health tasks when trained and shown to perform well.

- Mental health LLMs should advance mental health equity.

- Privacy or confidentiality should be paramount when LLMs operate to support mental health.

- Informed consent should be obtained when people engage with mental health LLMs.

- Mental health LLMs should respond appropriately to mental health risk.

- Mental health LLMs should only operate within the bounds of their competence.

- Mental health LLMs should be transparent and capable of explanation.

- Humans should provide oversight and feedback to mental health LLMs.

| Risk | Mental health education | Mental health assessment | Mental health intervention |
| --- | --- | --- | --- |
| Perpetuate inequalities, disparities, and stigma | Medium | Higher | Higher |
| Unethical provision of mental health services | | | |
| Practice beyond the boundaries of competence | Lower | Higher | Higher |
| Neglect to obtain informed consent | Lower | Higher | Higher |
| Fail to preserve confidentiality or privacy | Lower | Higher | Higher |
| Build and maintain inappropriate levels of trust | Lower | Medium | Higher |
| Lack reliability | Lower | Higher | Higher |
| Generate inaccurate or iatrogenic output | Medium | Higher | Higher |
| Lack transparency or explainability | Lower | Medium | Medium |
| Neglect to involve humans | Lower | Medium | Higher |

Perpetuating Inequalities, Disparities, and Stigma

concerns and effective assessments and interventions for populations that have been pushed to the margins. Training LLMs on existing data without appropriate safeguards and thoughtful human supervision and evaluation can, therefore, lead to problematic generation of biased content and disparate model performance for different groups [45,55-57] (of note, however, there is some evidence that clinicians perceive less bias in LLM-generated responses [58] relative to clinician-generated responses, suggesting that LLMs may have the potential to reduce bias compared to human clinicians).

of bias, such as semantic biases, may arise in LLMs [59]. Opportunities to train models to identify and exclude toxic and discriminatory language should be explored, both during the training of the underlying foundation models and during the domain-specific fine-tuning (see Keeling [59] for a discussion of the trade-offs of data filtration in this context) [45]. If LLMs perform differently for different groups or generate problematic or stigmatizing language during testing, additional model fine-tuning is required prior to deployment. Individuals developing LLMs should be transparent about the limitations of the training data, the approaches to data filtration and fine-tuning, and the populations for whom LLM performance has not been sufficiently demonstrated.

Failing to Provide Mental Health Services Ethically

the LLM should no longer be deployed until the needed competence is gained (eg, via retraining and fine-tuning models with human validation).

Insufficient Reliability

Inaccuracy

trusted sources, be specific to evidence-based health care, and represent diverse populations [46,58]. In mental health, the nature of consensus is continuing to evolve, and the amount of data available is continuing to increase, which should be taken into account when considering whether to further fine-tune models. Strategies such as implementing a Retrieval Augmented Generation system, in which LLMs are given access to an external database of up-to-date, quality-verified information to incorporate in the generation process, may help to improve accuracy and enable links to sources while also maintaining access to updated information. Accuracy of LLMs should be monitored over time to ensure that model accuracy improves and does not deteriorate with new information [45].

Lack of Transparency and Explainability

Neglecting to Involve Humans

engaging, more appealing, and perhaps also more humanlike. However, it also increases the risk that LLMs may produce harmful or nontherapeutic content when tasked with independently providing mental health services. Legal and regulatory frameworks are needed to protect individuals' safety and mental health when interacting with LLMs, as well as to clarify clinician liability when using LLMs to support their work or to clarify the liability of individuals and companies who develop these LLMs. There are ongoing discussions regarding the regulation of LLMs in medicine [74-76] that can inform how LLMs can support mental health while limiting the potential for harm and liability.

Conclusions

appropriate rapport, and to detect risk for high-acuity mental health concerns for progress to be made in these areas.

Acknowledgments

Conflicts of Interest

References

- McGrath JJ, Al-Hamzawi A, Alonso J, et al. Age of onset and cumulative risk of mental disorders: a cross-national analysis of population surveys from 29 countries. Lancet Psychiatry. Sep 2023;10(9):668-681. [doi: 10.1016/S2215-0366(23)00193-1] [Medline: 37531964]

- World mental health report: transforming mental health for all. World Health Organization; 2022. URL: https://www.who.int/publications/i/item/9789240049338 [Accessed 2024-07-18]

- 2022 National Healthcare Quality and Disparities Report. Agency for Healthcare Research and Quality; 2022. URL: https://www.ahrq.gov/research/findings/nhqrdr/nhqdr22/index.html [Accessed 2024-07-18]

- Wainberg ML, Scorza P, Shultz JM, et al. Challenges and opportunities in global mental health: a research-to-practice perspective. Curr Psychiatry Rep. May 2017;19(5):28. [doi: 10.1007/s11920-017-0780-z] [Medline: 28425023]

- Mental health by the numbers. National Alliance on Mental Illness. 2023. URL: https://www.nami.org/about-mental-illness/mental-health-by-the-numbers/ [Accessed 2024-07-29]

- Thirunavukarasu AJ, Ting DSJ, Elangovan K, Gutierrez L, Tan TF, Ting DSW. Large language models in medicine. Nat Med. Aug 2023;29(8):1930-1940. [doi: 10.1038/s41591-023-02448-8] [Medline: 37460753]

- Gao Y, Xiong Y, Gao X, et al. Retrieval-augmented generation for large language models: a survey. arXiv. Preprint posted online on Dec 18, 2023. [doi: 10.48550/arXiv.2312.10997]

- Ayers JW, Poliak A, Dredze M, et al. Comparing physician and artificial intelligence chatbot responses to patient questions posted to a public social media forum. JAMA Intern Med. Jun 1, 2023;183(6):589-596. [doi: 10.1001/jamainternmed.2023.1838] [Medline: 37115527]

- Singhal K, Tu T, Gottweis J, et al. Towards expert-level medical question answering with large language models. arXiv. Preprint posted online on May 16, 2023. [doi: 10.48550/arXiv.2305.09617]

- Lai T, Shi Y, Du Z, et al. Psy-LLM: scaling up global mental health psychological services with AI-based large language models. arXiv. Preprint posted online on Sep 1, 2023. [doi: 10.48550/arXiv.2307.11991]

- Sezgin E, Chekeni F, Lee J, Keim S. Clinical accuracy of large language models and Google search responses to postpartum depression questions: cross-sectional study. J Med Internet Res. Sep 11, 2023;25:e49240. [doi: 10.2196/49240] [Medline: 37695668]

- Spallek S, Birrell L, Kershaw S, Devine EK, Thornton L. Can we use ChatGPT for mental health and substance use education? Examining its quality and potential harms. JMIR Med Educ. Nov 30, 2023;9:e51243. [doi: 10.2196/51243] [Medline: 38032714]

- Barish G, Marlotte L, Drayton M, Mogil C, Lester P. Automatically enriching content for a behavioral health learning management system: a first look. Presented at: The 9th World Congress on Electrical Engineering and Computer Systems and Science; Aug 3-5, 2023; London, United Kingdom. [doi: 10.11159/cist23.125]

- Chan C, Li F. Developing a natural language-based AI-chatbot for social work training: an illustrative case study. China J Soc Work. May 4, 2023;16(2):121-136. [doi: 10.1080/17525098.2023.2176901]

- Sharma A, Lin IW, Miner AS, Atkins DC, Althoff T. Human-AI collaboration enables more empathic conversations in text-based peer-to-peer mental health support. Nat Mach Intell. 2023;5(1):46-57. [doi: 10.1038/s42256-022-00593-2]

- Ji S, Zhang T, Ansari L, Fu J, Tiwari P, Cambria E. MentalBERT: publicly available pretrained language models for mental healthcare. arXiv. Preprint posted online on Oct 29, 2021. [doi: 10.48550/arXiv.2110.15621]

- Lamichhane B. Evaluation of ChatGPT for NLP-based mental health applications. arXiv. Preprint posted online on Mar 28, 2023. [doi: 10.48550/arXiv.2303.15727]

- Amin MM, Cambria E, Schuller BW. Will affective computing emerge from foundation models and general AI? A first evaluation on ChatGPT. arXiv. Preprint posted online on Mar 3, 2023. [doi: 10.48550/arXiv.2303.03186]

- Xu X, Yao B, Dong Y, et al. Mental-LLM: leveraging large language models for mental health prediction via online text data. arXiv. Preprint posted online on Aug 16, 2023. [doi: 10.48550/arXiv.2307.14385]

- Galatzer-Levy IR, McDuff D, Natarajan V, Karthikesalingam A, Malgaroli M. The capability of large language models to measure psychiatric functioning. arXiv. Preprint posted online on Aug 3, 2023. [doi: 10.48550/arXiv.2308.01834]

- Barnhill JW. DSM-5-TR® clinical cases. Psychiatry online. URL: https://dsm.psychiatryonline.org/doi/book/10.1176/appi.books.9781615375295 [Accessed 2024-07-18]

- McDuff D, Schaekermann M, Tu T, et al. Towards accurate differential diagnosis with large language models. arXiv. Preprint posted online on Nov 30, 2023. [doi: 10.48550/arXiv.2312.00164]

- Elyoseph Z, Levkovich I. Beyond human expertise: the promise and limitations of ChatGPT in suicide risk assessment. Front Psychiatry. Aug 2023;14:1213141. [doi: 10.3389/fpsyt.2023.1213141] [Medline: 37593450]

- Levi-Belz Y, Gamliel E. The effect of perceived burdensomeness and thwarted belongingness on therapists’ assessment of patients’ suicide risk. Psychother Res. Jul 2016;26(4):436-445. [doi: 10.1080/10503307.2015.1013161] [Medline: 25751580]

- Joiner T. Why People Die by Suicide. Harvard University Press; 2007.

- Van Orden KA, Witte TK, Cukrowicz KC, Braithwaite SR, Selby EA, Joiner TE. The interpersonal theory of suicide. Psychol Rev. Apr 2010;117(2):575-600. [doi: 10.1037/a0018697] [Medline: 20438238]

- Darcy A, Beaudette A, Chiauzzi E, et al. Anatomy of a Woebot® (WB001): agent guided CBT for women with postpartum depression. Expert Rev Med Devices. Apr 2022;19(4):287-301. Retracted in: Expert Rev Med Devices. 2023;20(11):989. [doi: 10.1080/17434440.2023.2267389] [Medline: 37801290]

- Inkster B, Sarda S, Subramanian V. An empathy-driven, conversational artificial intelligence agent (Wysa) for digital mental well-being: real-world data evaluation mixed-methods study. JMIR Mhealth Uhealth. Nov 23, 2018;6(11):e12106. [doi: 10.2196/12106] [Medline: 30470676]

- Fulmer R, Joerin A, Gentile B, Lakerink L, Rauws M. Using psychological artificial intelligence (Tess) to relieve symptoms of depression and anxiety: randomized controlled trial. JMIR Ment Health. Dec 13, 2018;5(4):e64. [doi: 10.2196/mental.9782] [Medline: 30545815]

- Murphy M, Templin J. Our story. Replika. 2021. URL: https://replika.ai/about/story [Accessed 2023-08-20]

- Kim H, Yang H, Shin D, Lee JH. Design principles and architecture of a second language learning chatbot. Lang Learn Technol. 2022;26:1-18. URL: https://scholarspace.manoa.hawaii.edu/server/api/core/bitstreams/b3aa08a8-579d-4bf6-b94a-05c2ff67351a/content [Accessed 2024-07-18]

- Wilbourne P, Dexter G, Shoup D. Research driven: Sibly and the transformation of mental health and wellness. Presented at: Proceedings of the 12th EAI International Conference on Pervasive Computing Technologies for Healthcare; May 21-24, 2018:389-391; New York, NY. [doi: 10.1145/3240925.3240932]

- Denecke K, Abd-Alrazaq A, Househ M. Artificial intelligence for chatbots in mental health: opportunities and challenges. In: Househ M, Borycki E, Kushniruk A, editors. Multiple Perspectives on Artificial Intelligence in Healthcare: Opportunities and Challenges. Springer International Publishing; 2021:115-128. [doi: 10.1007/978-3-030-67303-1]

- Omarov B, Zhumanov Z, Gumar A, Kuntunova L. Artificial intelligence enabled mobile chatbot psychologist using AIML and cognitive behavioral therapy. IJACSA. 2023;14(6). [doi: 10.14569/IJACSA.2023.0140616]

- Pham KT, Nabizadeh A, Selek S. Artificial intelligence and chatbots in psychiatry. Psychiatr Q. Mar 2022;93(1):249-253. [doi: 10.1007/s11126-022-09973-8] [Medline: 35212940]

- Abd-Alrazaq AA, Rababeh A, Alajlani M, Bewick BM, Househ M. Effectiveness and safety of using chatbots to improve mental health: systematic review and meta-analysis. J Med Internet Res. Jul 2020;22(7):e16021. [doi: 10.2196/16021] [Medline: 32673216]

- Brocki L, Dyer GC, Gładka A, Chung NC. Deep learning mental health dialogue system. Presented at: 2023 IEEE International Conference on Big Data and Smart Computing (BigComp); Feb 13-16, 2023:395-398. Jeju, Korea.

- Martinengo L, Lum E, Car J. Evaluation of chatbot-delivered interventions for self-management of depression: content analysis. J Affect Disord. Dec 2022;319:598-607. [doi: 10.1016/j.jad.2022.09.028] [Medline: 36150405]

- You Y, Tsai CH, Li Y, Ma F, Heron C, Gui X. Beyond self-diagnosis: how a chatbot-based symptom checker should respond. ACM Trans Comput-Hum Interact. Aug 31, 2023;30(4):1-44. [doi: 10.1145/3589959]

- Ma Z, Mei Y, Su Z. Understanding the benefits and challenges of using large language model-based conversational agents for mental well-being support. AMIA Annu Symp Proc. Jan 11, 2024;2023:1105-1114. [Medline: 38222348]

- Lee J, Lee JG, Lee D. Influence of rapport and social presence with an AI psychotherapy chatbot on users’ self-disclosure. SSRN. Preprint posted online on Mar 22, 2022. [doi: 10.2139/ssrn.4063508]

- Das A, Selek S, Warner AR, et al. Conversational bots for psychotherapy: a study of generative transformer models using domain-specific dialogues. In: Demner-Fushman D, Cohen KB, Ananiadou S, Tsujii J, editors. Proceedings of the 21st Workshop on Biomedical Language Processing. Association for Computational Linguistics; 2022:285-297. [doi: 10.18653/v1/2022.bionlp-1.27]

- Demner-Fushman D, Ananiadou S, Cohen KB, editors. The 22nd Workshop on Biomedical Natural Language Processing and BioNLP Shared Tasks. Association for Computational Linguistics; 2023.

- Heston TF. Evaluating risk progression in mental health chatbots using escalating prompts. medRxiv. Preprint posted online on Sep 12, 2023. [doi: 10.1101/2023.09.10.23295321]

- Weidinger L, Mellor J, Rauh M, et al. Ethical and social risks of harm from language models. arXiv. Preprint posted online on Dec 8, 2021. [doi: 10.48550/arXiv.2112.04359]

- Harrer S. Attention is not all you need: the complicated case of ethically using large language models in healthcare and medicine. eBioMedicine. Apr 2023;90:104512. [doi: 10.1016/j.ebiom.2023.104512] [Medline: 36924620]

- Koutsouleris N, Hauser TU, Skvortsova V, De Choudhury M. From promise to practice: towards the realisation of AI-informed mental health care. Lancet Digital Health. Nov 2022;4(11):e829-e840. [doi: 10.1016/S2589-7500(22)00153-4]

- Sickel AE, Seacat JD, Nabors NA. Mental health stigma update: a review of consequences. Adv Ment Health. Dec 2014;12(3):202-215. [doi: 10.1080/18374905.2014.11081898]

- Alegría M, Green JG, McLaughlin KA, Loder S. Disparities in child and adolescent mental health and mental health services in the U.S. William T. Grant Foundation. Mar 2015. URL: https://wtgrantfoundation.org/wp-content/uploads/2015/09/Disparities-in-Child-and-Adolescent-Mental-Health.pdf [Accessed 2024-07-18]

- Primm AB, Vasquez MJT, Mays RA. The role of public health in addressing racial and ethnic disparities in mental health and mental illness. Prev Chronic Dis. Jan 2010;7(1):A20. [Medline: 20040235]

- Schwartz RC, Blankenship DM. Racial disparities in psychotic disorder diagnosis: a review of empirical literature. World J Psychiatry. Dec 2014;4(4):133. [doi: 10.5498/wjp.v4.i4.133] [Medline: 25540728]

- McGuire TG, Miranda J. New evidence regarding racial and ethnic disparities in mental health: policy implications. Health Aff (Millwood). Mar 2008;27(2):393-403. [doi: 10.1377/hlthaff.27.2.393] [Medline: 18332495]

- Snowden LR, Cheung FK. Use of inpatient mental health services by members of ethnic minority groups. Am Psychol. Mar 1990;45(3):347-355. [doi: 10.1037//0003-066x.45.3.347] [Medline: 2310083]

- Henrich J, Heine SJ, Norenzayan A. Beyond WEIRD: towards a broad-based behavioral science. Behav Brain Sci. Jun 2010;33(2-3):111-135. [doi: 10.1017/S0140525X10000725]

- Lin I, Njoo L, Field A, et al. Gendered mental health stigma in masked language models. arXiv. Preprint posted online on Oct 27, 2022. [doi: 10.48550/arXiv.2210.15144]

- Liu Y, et al. Trustworthy LLMs: a survey and guideline for evaluating large language models’ alignment. arXiv. Preprint posted online on Aug 10, 2023. [doi: 10.48550/arXiv.2308.05374]

- Straw I, Callison-Burch C. Artificial intelligence in mental health and the biases of language based models. PLoS One. Dec 2020;15(12):e0240376. [doi: 10.1371/journal.pone.0240376] [Medline: 33332380]

- Singhal K, Azizi S, Tu T, et al. Large language models encode clinical knowledge. Nature. Aug 2023;620(7972):172-180. [doi: 10.1038/s41586-023-06291-2] [Medline: 37438534]

- Keeling G. Algorithmic bias, generalist models, and clinical medicine. arXiv. Preprint posted online on May 6, 2023. [doi: 10.48550/arXiv.2305.04008]

- Koocher GP, Keith-Spiegel P. Ethics in Psychology and the Mental Health Professions: Standards and Cases. Oxford University Press; 2008.

- Varkey B. Principles of clinical ethics and their application to practice. Med Princ Pract. Feb 2021;30(1):17-28. [doi: 10.1159/000509119] [Medline: 32498071]

- Rajagopal A, Nirmala V, Andrew J, Arun M. Novel AI to avert the mental health crisis in COVID-19: novel application of GPT2 in cognitive behaviour therapy. Research Square. Preprint posted online on Apr 1, 2021. [doi: 10.21203/rs.3.rs-382748/v1]

- Gratch J, Lucas G. Rapport between humans and socially interactive agents. In: Lugrin B, Pelachaud C, Traum D, editors. The Handbook on Socially Interactive Agents: 20 Years of Research on Embodied Conversational Agents, Intelligent Virtual Agents, and Social Robotics Volume 1: Methods, Behavior, Cognition. Association for Computing Machinery; 2021:433-462. [doi: 10.1145/3477322.3477335]

- McDuff D, Czerwinski M. Designing emotionally sentient agents. Commun ACM. Nov 20, 2018;61(12):74-83. [doi: 10.1145/3186591]

- Lundin RM, Berk M, Østergaard SD. ChatGPT on ECT: can large language models support psychoeducation? J ECT. Sep 1, 2023;39(3):130-133. [doi: 10.1097/YCT.0000000000000941] [Medline: 37310145]

- Wang B, Min S, Deng X, et al. Towards understanding chain-of-thought prompting: an empirical study of what matters. arXiv. Preprint posted online on Dec 20, 2022. [doi: 10.48550/arXiv.2212.10001]

- Glenberg AM, Havas D, Becker R, Rinck M. Grounding language in bodily states: the case for emotion. In: Grounding Cognition: The Role of Perception and Action in Memory, Language, and Thinking. Cambridge University Press; 2005:115-128. [doi: 10.1017/CBO9780511499968]

- Zhong Y, Chen YJ, Zhou Y, Lyu YAH, Yin JJ, Gao YJ. The artificial intelligence large language models and neuropsychiatry practice and research ethic. Asian J Psychiatr. Jun 2023;84:103577. [doi: 10.1016/j.ajp.2023.103577] [Medline: 37019020]

- Gilbert S, Harvey H, Melvin T, Vollebregt E, Wicks P. Large language model AI chatbots require approval as medical devices. Nat Med. Oct 2023;29(10):2396-2398. [doi: 10.1038/s41591-023-02412-6] [Medline: 37391665]

- Singh OP. Artificial intelligence in the era of ChatGPT – opportunities and challenges in mental health care. Indian J Psychiatry. Mar 2023;65(3):297-298. [doi: 10.4103/indianjpsychiatry.indianjpsychiatry_112_23] [Medline: 37204980]

- Yang K, Ji S, Zhang T, Xie Q, Kuang Z, Ananiadou S. Towards interpretable mental health analysis with large language models. arXiv. Preprint posted online on Oct 11, 2023. [doi: 10.48550/arXiv.2304.03347]

- Balasubramaniam N, Kauppinen M, Rannisto A, Hiekkanen K, Kujala S. Transparency and explainability of AI systems: from ethical guidelines to requirements. Inf Softw Technol. Jul 2023;159:107197. [doi: 10.1016/j.infsof.2023.107197]

- Wei J, Wang X, Schuurmans D, et al. Chain-of-thought prompting elicits reasoning in large language models. In: Koyejo S, Mohamed S, Agarwal A, editors. Advances in Neural Information Processing Systems. Vol 35. Curran Associates, Inc; 2022:24824-24837.

- Meskó B, Topol EJ. The imperative for regulatory oversight of large language models (or generative AI) in healthcare. NPJ Digit Med. Jul 6, 2023;6(1):120. [doi: 10.1038/s41746-023-00873-0] [Medline: 37414860]

- Ong JCL, Chang SYH, William W, et al. Ethical and regulatory challenges of large language models in medicine. Lancet Digit Health. Jun 2024;6(6):e428-e432. [doi: 10.1016/S2589-7500(24)00061-X] [Medline: 38658283]

- Minssen T, Vayena E, Cohen IG. The challenges for regulating medical use of ChatGPT and other large language models. JAMA. Jul 25, 2023;330(4):315-316. [doi: 10.1001/jama.2023.9651] [Medline: 37410482]

- van Heerden AC, Pozuelo JR, Kohrt BA. Global mental health services and the impact of artificial intelligence-powered large language models. JAMA Psychiatry. Jul 1, 2023;80(7):662-664. [doi: 10.1001/jamapsychiatry.2023.1253] [Medline: 37195694]

- Cabrera J, Loyola MS, Magaña I, Rojas R. Ethical dilemmas, mental health, artificial intelligence, and LLM-based chatbots. In: Bioinformatics and Biomedical Engineering. Springer Nature Switzerland; 2023:313-326. [doi: 10.1007/978-3-031-34960-7_2]

Abbreviations

BERT: Bidirectional Encoder Representations From Transformers

DSM-5: Diagnostic and Statistical Manual of Mental Disorders, Fifth Edition

EBP: evidence-based practice

HIPAA: Health Insurance Portability and Accountability Act

LLM: large language model

Psy-LLM: psychological support with large language model

Lawrence HR, Schneider RA, Rubin SB, Matarić MJ, McDuff DJ, Jones Bell M

The Opportunities and Risks of Large Language Models in Mental Health

JMIR Ment Health 2024;11:e59479

URL: https://mental.jmir.org/2024/1/e59479

doi: 10.2196/59479

© Hannah R Lawrence, Renee A Schneider, Susan B Rubin, Maja J Matarić, Daniel J McDuff, Megan Jones Bell. Originally published in JMIR Mental Health (https://mental.jmir.org), 29.07.2024. This is an open-access article distributed under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work, first published in JMIR Mental Health, is properly cited. The complete bibliographic information, a link to the original publication on https://mental.jmir.org/, as well as this copyright and license information must be included.

The Opportunities and Risks of Large Language Models in Mental Health

Abstract

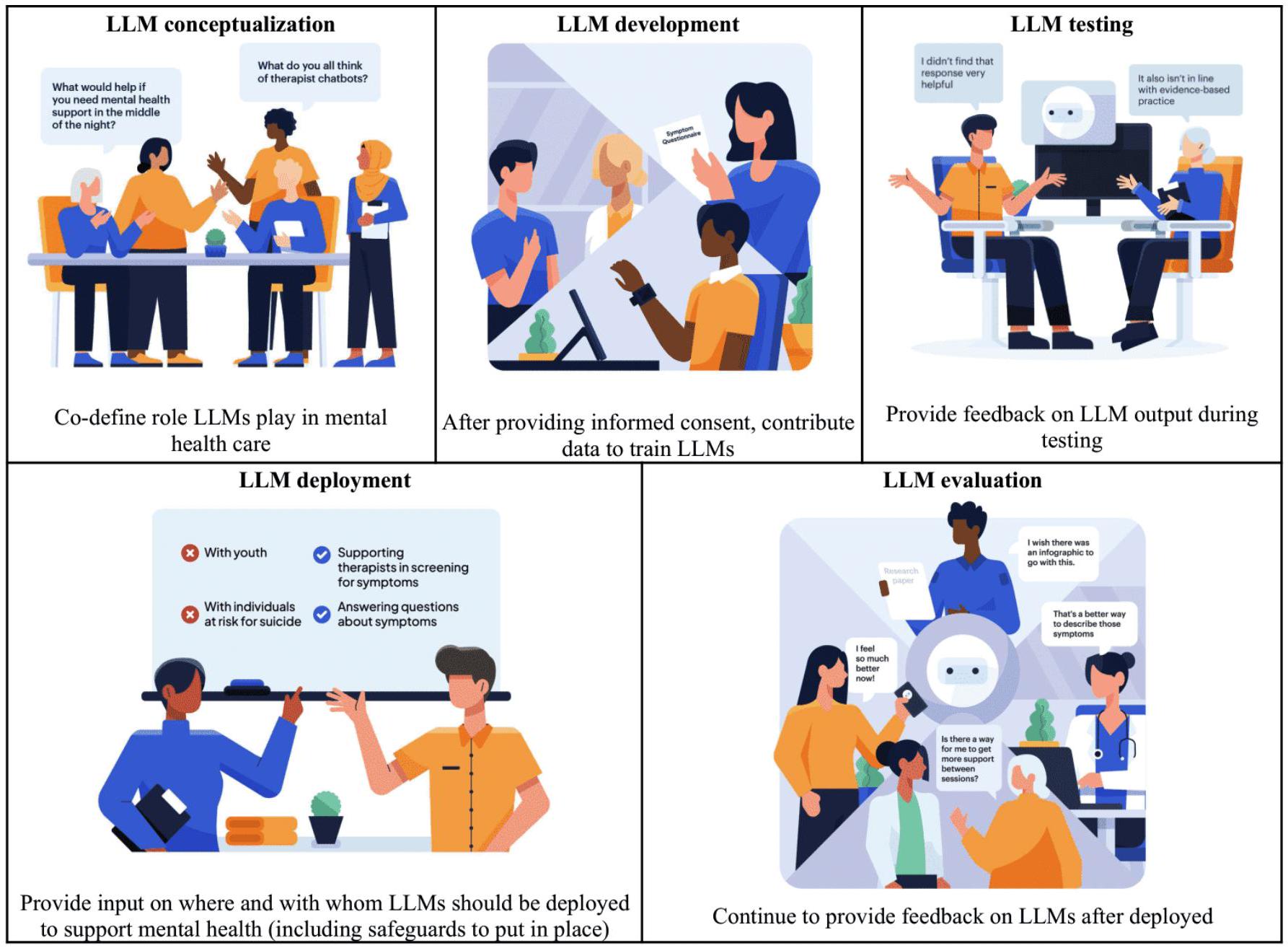

Global rates of mental health concerns are rising, and there is increasing realization that existing models of mental health care will not adequately expand to meet the demand. With the emergence of large language models (LLMs) has come great optimism regarding their promise to create novel, large-scale solutions to support mental health. Despite their nascence, LLMs have already been applied to mental health-related tasks. In this paper, we summarize the extant literature on efforts to use LLMs to provide mental health education, assessment, and intervention and highlight key opportunities for positive impact in each area. We then highlight risks associated with LLMs’ application to mental health and encourage the adoption of strategies to mitigate these risks. The urgent need for mental health support must be balanced with responsible development, testing, and deployment of mental health LLMs. It is especially critical to ensure that mental health LLMs are fine-tuned for mental health, enhance mental health equity, and adhere to ethical standards and that people, including those with lived experience with mental health concerns, are involved in all stages from development through deployment. Prioritizing these efforts will minimize potential harms to mental health and maximize the likelihood that LLMs will positively impact mental health globally.

Keywords: artificial intelligence; AI; generative AI; large language models; mental health; mental health education; language model; mental health care; health equity; ethical; development; deployment

Introduction

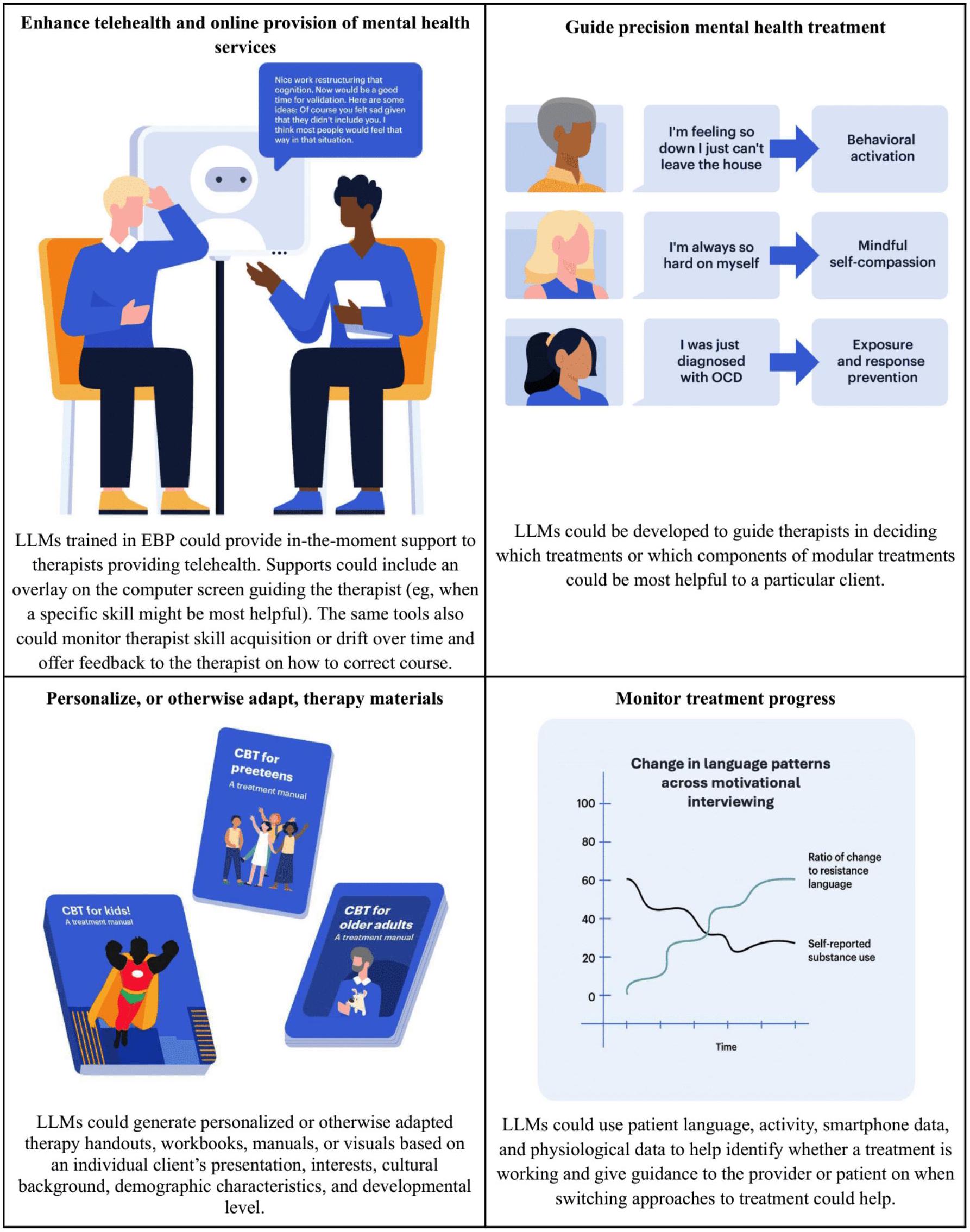

regarding their application to mental health and their potential to provide such solutions due to their relevance to mental health education, assessment, and intervention. LLMs are artificial intelligence models trained using extensive data sets to predict language sequences [6]. By leveraging huge neural architectures, LLMs can organize complex and abstract concepts. This enables them to identify, translate, predict, and generate new content. LLMs can be fine-tuned for specific domains (eg, mental health) and enable interactions in natural language, as do many mental health assessments and interventions, highlighting the enormous potential they have to revolutionize mental health care. In this paper, we first summarize the research done to date applying LLMs to mental health. Then, we highlight key opportunities and risks associated with mental health LLMs and put forth suggested risk mitigation strategies. Finally, we make recommendations for the responsible use of LLMs in the mental health domain.

Applications of LLMs to Mental Health

Overview

Education

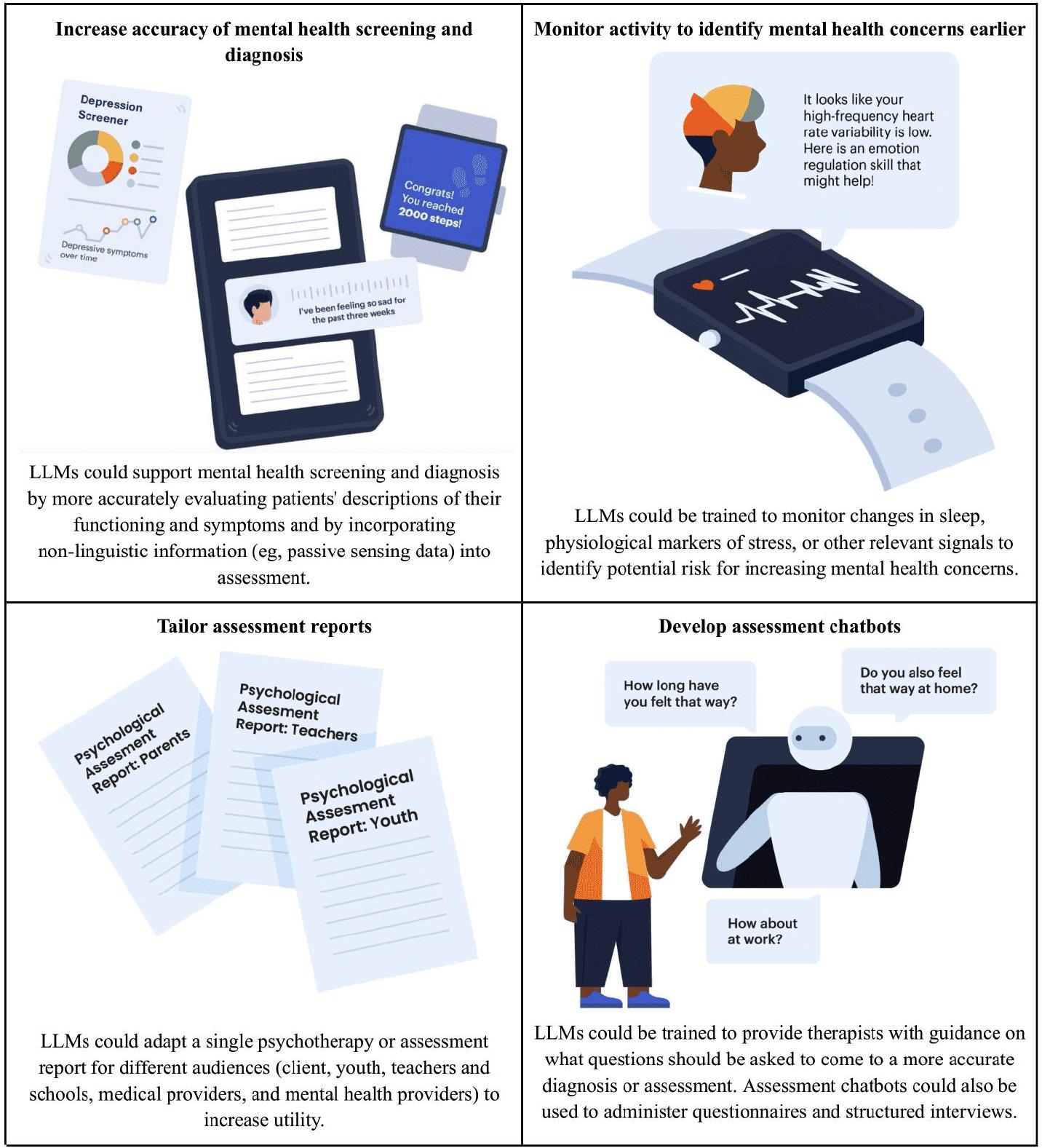

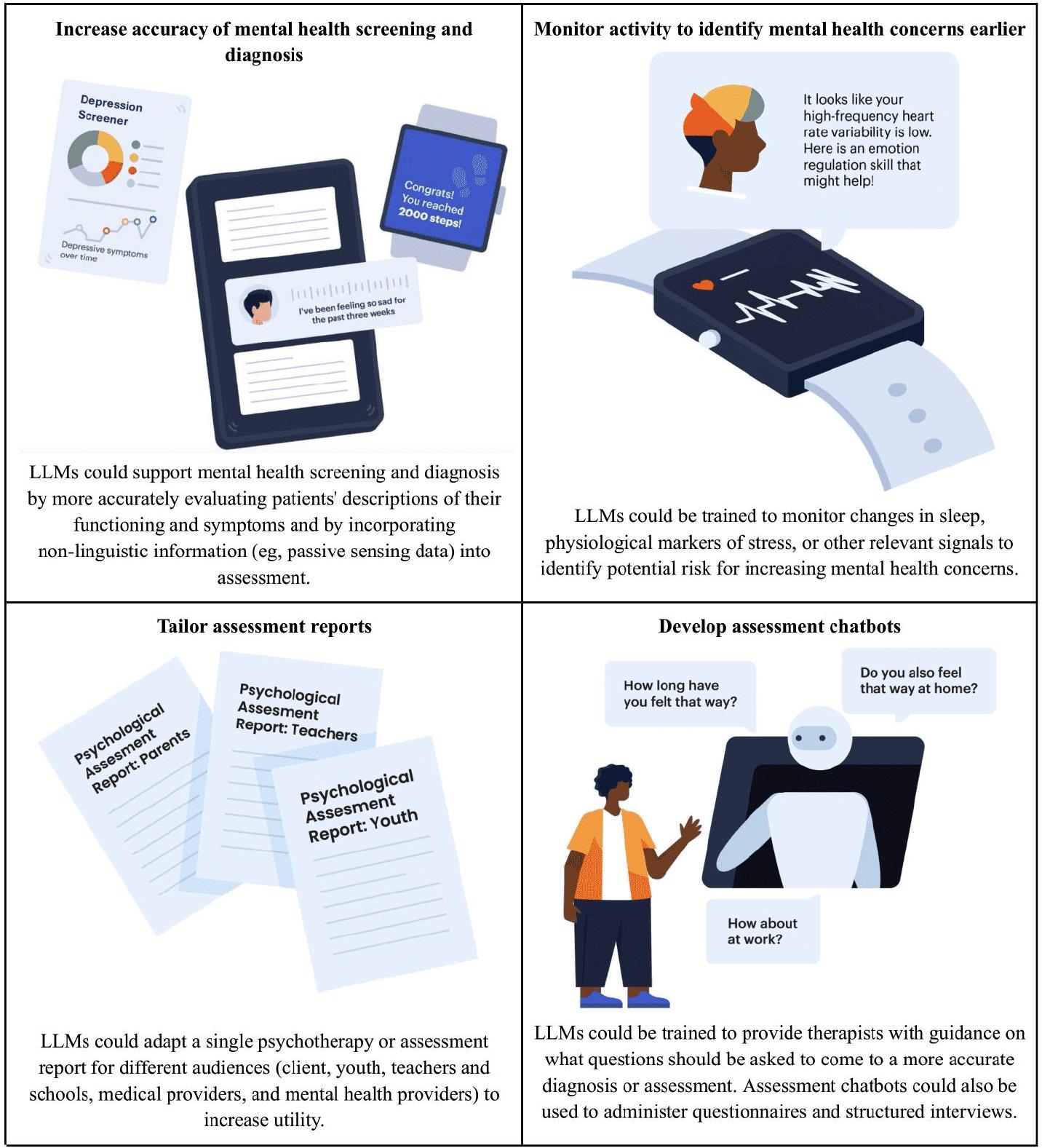

Assessment

specialist medical doctors in response to challenging case studies, some of which involved psychiatric diagnoses [22].

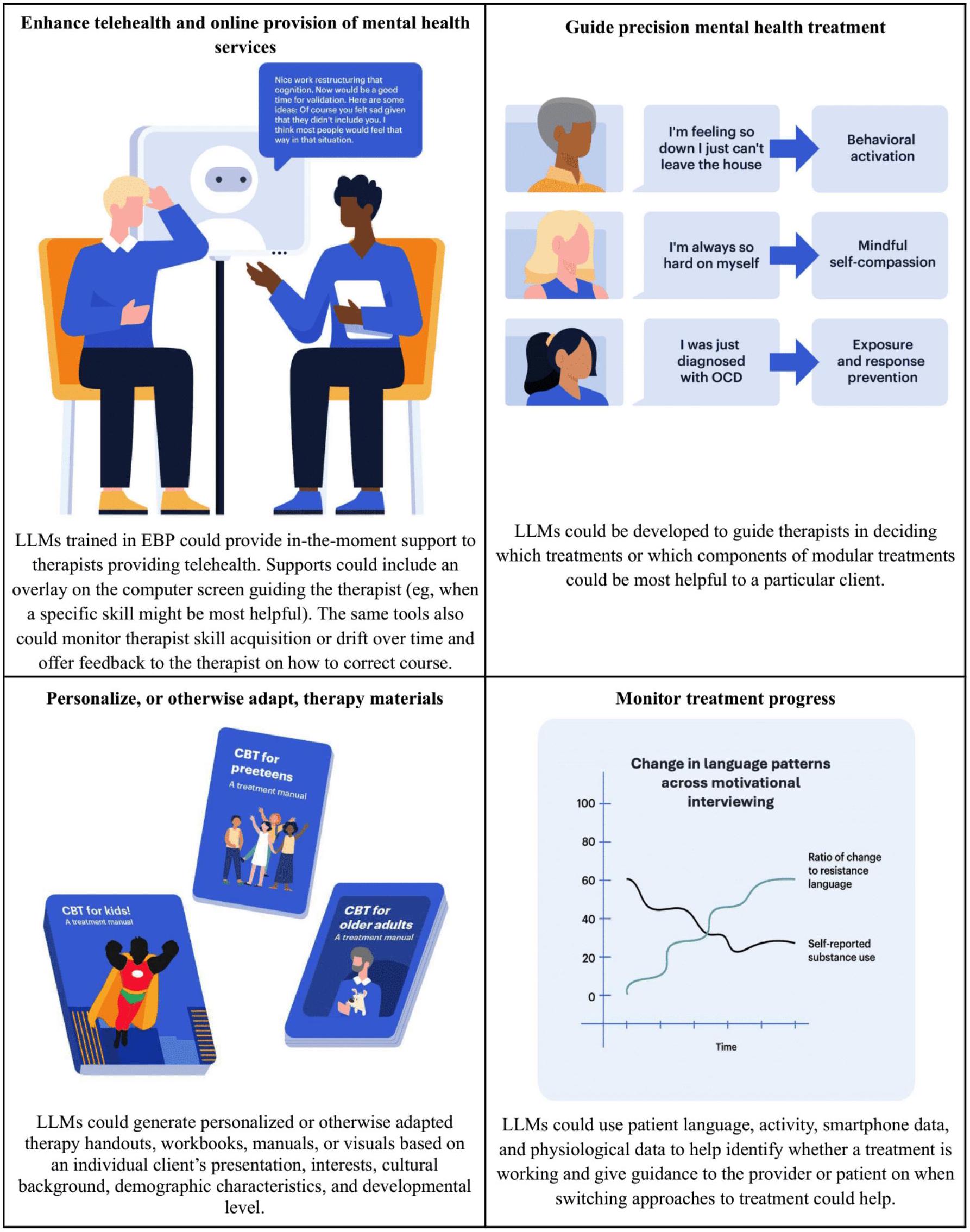

Intervention

maintain therapeutic conversations [14] and that individuals can establish therapeutic rapport with chatbots [41].

Risks Associated With Mental Health LLMs

Overview

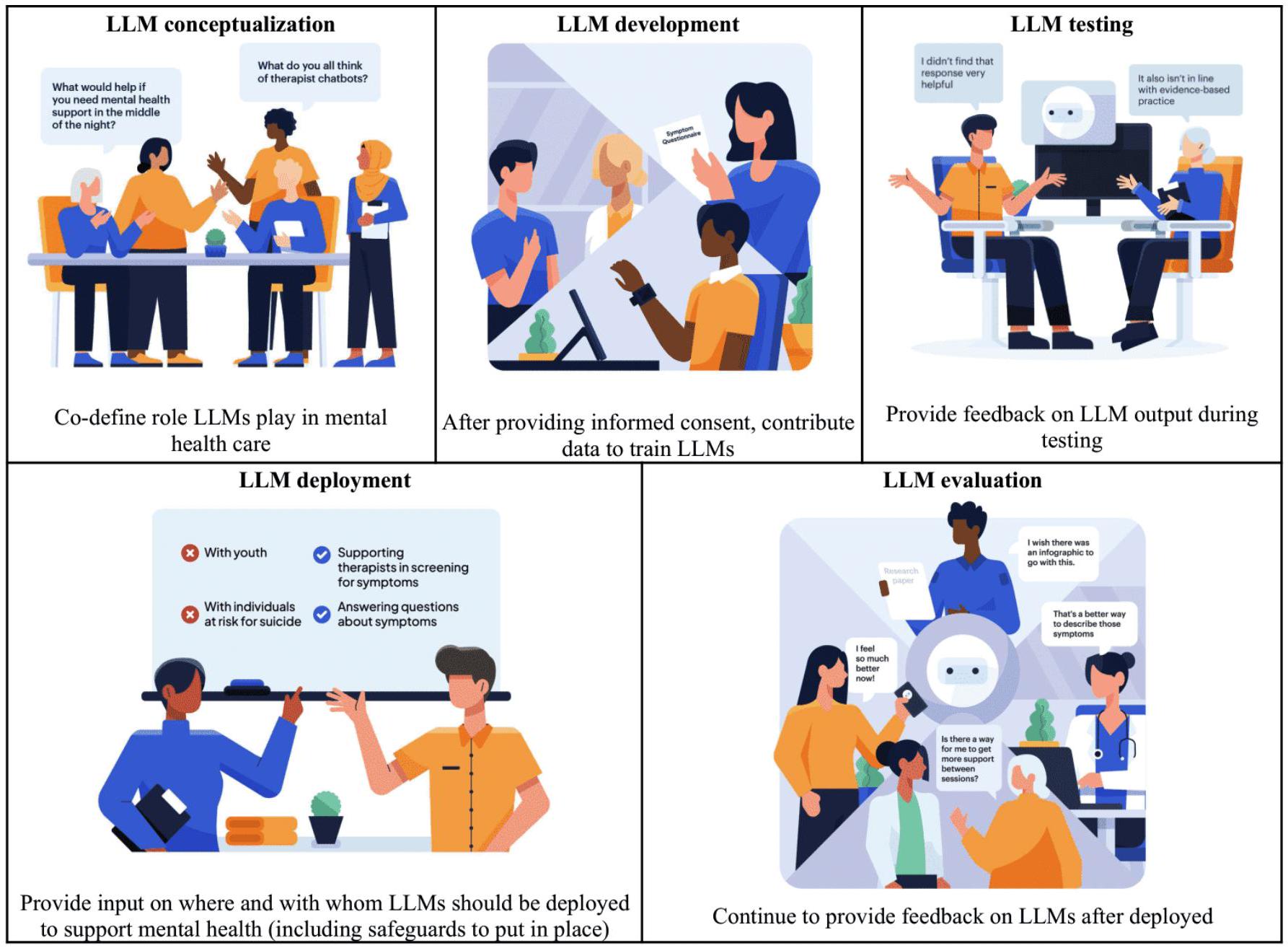

steps to mitigate risks, and establishing plans to monitor for ongoing or new and unexpected risks

- LLMs should only engage in mental health tasks when trained and shown to perform well.

- Mental health LLMs should advance mental health equity.

- Privacy or confidentiality should be paramount when LLMs operate to support mental health.

- Informed consent should be obtained when people engage with mental health LLMs.

- Mental health LLMs should respond appropriately to mental health risk.

- Mental health LLMs should only operate within the bounds of their competence.

- Mental health LLMs should be transparent and capable of explanation.

- Humans should provide oversight and feedback to mental health LLMs.

| Risk | Mental health education | Mental health assessment | Mental health intervention |
| --- | --- | --- | --- |
| Perpetuate inequalities, disparities, and stigma | Medium | Higher | Higher |
| Unethical provision of mental health services | | | |
| Practice beyond the boundaries of competence | Lower | Higher | Higher |
| Neglect to obtain informed consent | Lower | Higher | Higher |
| Fail to preserve confidentiality or privacy | Lower | Higher | Higher |
| Build and maintain inappropriate levels of trust | Lower | Medium | Higher |
| Lack reliability | Lower | Higher | Higher |
| Generate inaccurate or iatrogenic output | Medium | Higher | Higher |
| Lack transparency or explainability | Lower | Medium | Medium |
| Neglect to involve humans | Lower | Medium | Higher |

Perpetuating Inequalities, Disparities, and Stigma

concerns and effective assessments and interventions for populations that have been pushed to the margins. Training LLMs on existing data without appropriate safeguards and thoughtful human supervision and evaluation can, therefore, lead to problematic generation of biased content and disparate model performance for different groups [45,55-57] (of note, however, there is some evidence that clinicians perceive less bias in LLM-generated responses [58] relative to clinician-generated responses, suggesting that LLMs may have the potential to reduce bias compared to human clinicians).

of bias, such as semantic biases, may arise in LLMs [59]. Opportunities to train models to identify and exclude toxic and discriminatory language should be explored, both during the training of the underlying foundation models and during the domain-specific fine-tuning (see Keeling [59] for a discussion of the trade-offs of data filtration in this context) [45]. If LLMs perform differently for different groups or generate problematic or stigmatizing language during testing, additional model fine-tuning is required prior to deployment. Individuals developing LLMs should be transparent about the limitations of the training data, the approaches to data filtration and fine-tuning, and the populations for whom LLM performance has not been sufficiently demonstrated.
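One way to make the pre-deployment check for disparate performance concrete is to compare model accuracy across groups on a labeled test set. The following is a minimal sketch rather than a method taken from the paper: the function names, the `(group, is_correct)` record format, and the 0.05 gap threshold are illustrative assumptions, and a real evaluation would need clinically meaningful metrics and adequate sample sizes per group.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return accuracy per group from (group, is_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, is_correct in records:
        totals[group] += 1
        if is_correct:
            correct[group] += 1
    return {group: correct[group] / totals[group] for group in totals}


def max_accuracy_gap(per_group):
    """Largest difference in accuracy between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)


def needs_more_fine_tuning(records, max_gap=0.05):
    """Flag the model if the accuracy gap exceeds the (assumed) threshold."""
    return max_accuracy_gap(accuracy_by_group(records)) > max_gap


# Hypothetical test results: group label and whether the output was judged correct.
example_records = [
    ("group_a", True), ("group_a", True),
    ("group_b", True), ("group_b", False),
]
print(accuracy_by_group(example_records))
print(needs_more_fine_tuning(example_records))
```

Accuracy is only one possible measure here; the same per-group comparison could be applied to rates of stigmatizing language flagged by human reviewers.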

Failing to Provide Mental Health Services Ethically

the LLM should no longer be deployed until the needed competence is gained (eg, via retraining and fine-tuning models with human validation).

Insufficient Reliability

Inaccuracy

trusted sources, be specific to evidence-based health care, and represent diverse populations [46,58]. In mental health, the nature of consensus is continuing to evolve, and the amount of data available is continuing to increase, which should be taken into account when considering whether to further fine-tune models. Strategies such as implementing a Retrieval Augmented Generation system, in which LLMs are given access to an external database of up-to-date, quality-verified information to incorporate in the generation process, may help to improve accuracy and enable links to sources while also maintaining access to updated information. Accuracy of LLMs should be monitored over time to ensure that model accuracy improves and does not deteriorate with new information [45].
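The retrieval step of a Retrieval Augmented Generation system can be sketched as follows. This is a toy illustration under stated assumptions, not the design of any particular system: the `Passage` type, the word-overlap scoring, and the prompt wording are hypothetical, production systems typically use embedding-based search over a curated database, and the call to the LLM itself is left out.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # identifier of the vetted source (hypothetical)
    text: str    # quality-verified content


def tokenize(text):
    """Lowercase a string and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, passages, k=2):
    """Return up to k passages sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(p.text)), p) for p in passages]
    scored = [pair for pair in scored if pair[0] > 0]  # drop passages with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:k]]


def build_prompt(query, passages, k=2):
    """Assemble a prompt that asks the model to answer from cited sources only."""
    hits = retrieve(query, passages, k)
    context = "\n".join(
        f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(hits, start=1)
    )
    return (
        "Answer using only the numbered sources below and cite them.\n"
        f"Sources:\n{context}\n"
        f"Question: {query}"
    )


# Placeholder passages standing in for an external, regularly updated database.
knowledge_base = [
    Passage("guide-a", "Sleep hygiene can improve insomnia symptoms"),
    Passage("guide-b", "Exercise supports cardiovascular health"),
]
print(build_prompt("How to improve insomnia?", knowledge_base))
```

Because the sources are passed in at generation time, updating the database changes what the model can cite without retraining, and the source identifiers give readers a link back to the underlying material.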

Lack of Transparency and Explainability

Neglecting to Involve Humans

engaging, more appealing, and perhaps also more humanlike. However, it also increases the risk that LLMs may produce harmful or nontherapeutic content when tasked with independently providing mental health services. Legal and regulatory frameworks are needed to protect individuals’ safety and mental health when interacting with LLMs, as well as to clarify clinician liability when using LLMs to support their work or to clarify the liability of individuals and companies who develop these LLMs. There are ongoing discussions regarding the regulation of LLMs in medicine [74-76] that can inform how LLMs can support mental health while limiting the potential for harm and liability.

Conclusions

appropriate rapport, and to detect risk for high-acuity mental health concerns for progress to be made in these areas.

Acknowledgments

Conflicts of Interest

References

- McGrath JJ, Al-Hamzawi A, Alonso J, et al. Age of onset and cumulative risk of mental disorders: a cross-national analysis of population surveys from 29 countries. Lancet Psychiatry. Sep 2023;10(9):668-681. [doi: 10.1016/S2215-0366(23)00193-1] [Medline: 37531964]

- World mental health report: transforming mental health for all. World Health Organization; 2022. URL: https://www.who.int/publications/i/item/9789240049338 [Accessed 2024-07-18]

- 2022 National Healthcare Quality and Disparities Report. Agency for Healthcare Research and Quality; 2022. URL: https://www.ahrq.gov/research/findings/nhqrdr/nhqdr22/index.html [Accessed 2024-07-18]

- Wainberg ML, Scorza P, Shultz JM, et al. Challenges and opportunities in global mental health: a research-to-practice perspective. Curr Psychiatry Rep. May 2017;19(5):28. [doi: 10.1007/s11920-017-0780-z] [Medline: 28425023]

- Mental health by the numbers. National Alliance on Mental Illness. 2023. URL: https://www.nami.org/about-mental-illness/mental-health-by-the-numbers/ [Accessed 2024-07-29]

- Thirunavukarasu AJ, Ting DSJ, Elangovan K, Gutierrez L, Tan TF, Ting DSW. Large language models in medicine. Nat Med. Aug 2023;29(8):1930-1940. [doi: 10.1038/s41591-023-02448-8] [Medline: 37460753]

- Gao Y, Xiong Y, Gao X, et al. Retrieval-augmented generation for large language models: a survey. arXiv. Preprint posted online on Dec 18, 2023. [doi: 10.48550/arXiv.2312.10997]

- Ayers JW, Poliak A, Dredze M, et al. Comparing physician and artificial intelligence chatbot responses to patient questions posted to a public social media forum. JAMA Intern Med. Jun 1, 2023;183(6):589-596. [doi: 10.1001/jamainternmed.2023.1838] [Medline: 37115527]

- Singhal K, Tu T, Gottweis J, et al. Towards expert-level medical question answering with large language models. arXiv. Preprint posted online on May 16, 2023. [doi: 10.48550/arXiv.2305.09617]

- Lai T, Shi Y, Du Z, et al. Psy-LLM: scaling up global mental health psychological services with AI-based large language models. arXiv. Preprint posted online on Sep 1, 2023. [doi: 10.48550/arXiv.2307.11991]

- Sezgin E, Chekeni F, Lee J, Keim S. Clinical accuracy of large language models and Google search responses to postpartum depression questions: cross-sectional study. J Med Internet Res. Sep 11, 2023;25:e49240. [doi: 10.2196/49240] [Medline: 37695668]

- Spallek S, Birrell L, Kershaw S, Devine EK, Thornton L. Can we use ChatGPT for mental health and substance use education? Examining its quality and potential harms. JMIR Med Educ. Nov 30, 2023;9:e51243. [doi: 10.2196/51243] [Medline: 38032714]

- Barish G, Marlotte L, Drayton M, Mogil C, Lester P. Automatically enriching content for a behavioral health learning management system: a first look. Presented at: The 9th World Congress on Electrical Engineering and Computer Systems and Science; Aug 3-5, 2023; London, United Kingdom. [doi: 10.11159/cist23.125]

- Chan C, Li F. Developing a natural language-based AI-chatbot for social work training: an illustrative case study. China J Soc Work. May 4, 2023;16(2):121-136. [doi: 10.1080/17525098.2023.2176901]

- Sharma A, Lin IW, Miner AS, Atkins DC, Althoff T. Human-AI collaboration enables more empathic conversations in text-based peer-to-peer mental health support. Nat Mach Intell. 2023;5(1):46-57. [doi: 10.1038/s42256-022-00593-2]

- Ji S, Zhang T, Ansari L, Fu J, Tiwari P, Cambria E. MentalBERT: publicly available pretrained language models for mental healthcare. arXiv. Preprint posted online on Oct 29, 2021. [doi: 10.48550/arXiv.2110.15621]

- Lamichhane B. Evaluation of ChatGPT for NLP-based mental health applications. arXiv. Preprint posted online on Mar 28, 2023. [doi: 10.48550/arXiv.2303.15727]

- Amin MM, Cambria E, Schuller BW. Will affective computing emerge from foundation models and general AI? A first evaluation on ChatGPT. arXiv. Preprint posted online on Mar 3, 2023. [doi: 10.48550/arXiv.2303.03186]

- Xu X, Yao B, Dong Y, et al. Mental-LLM: leveraging large language models for mental health prediction via online text data. arXiv. Preprint posted online on Aug 16, 2023. [doi: 10.48550/arXiv.2307.14385]

- Galatzer-Levy IR, McDuff D, Natarajan V, Karthikesalingam A, Malgaroli M. The capability of large language models to measure psychiatric functioning. arXiv. Preprint posted online on Aug 3, 2023. [doi: 10.48550/arXiv.2308.01834]

- Barnhill JW. DSM-5-TR® clinical cases. Psychiatry online. URL: https://dsm.psychiatryonline.org/doi/book/10.1176/appi.books.9781615375295 [Accessed 2024-07-18]

- McDuff D, Schaekermann M, Tu T, et al. Towards accurate differential diagnosis with large language models. arXiv. Preprint posted online on Nov 30, 2023. [doi: 10.48550/arXiv.2312.00164]

- Elyoseph Z, Levkovich I. Beyond human expertise: the promise and limitations of ChatGPT in suicide risk assessment. Front Psychiatry. Aug 2023;14:1213141. [doi: 10.3389/fpsyt.2023.1213141] [Medline: 37593450]

- Levi-Belz Y, Gamliel E. The effect of perceived burdensomeness and thwarted belongingness on therapists’ assessment of patients’ suicide risk. Psychother Res. Jul 2016;26(4):436-445. [doi: 10.1080/10503307.2015.1013161] [Medline: 25751580]

- Joiner T. Why People Die by Suicide. Harvard University Press; 2007.

- Van Orden KA, Witte TK, Cukrowicz KC, Braithwaite SR, Selby EA, Joiner TE. The interpersonal theory of suicide. Psychol Rev. Apr 2010;117(2):575-600. [doi: 10.1037/a0018697] [Medline: 20438238]

- Darcy A, Beaudette A, Chiauzzi E, et al. Anatomy of a Woebot® (WB001): agent guided CBT for women with postpartum depression. Expert Rev Med Devices. Apr 2022;19(4):287-301. Retracted in: Expert Rev Med Devices. 2023;20(11):989. [doi: 10.1080/17434440.2023.2267389] [Medline: 37801290]

- Inkster B, Sarda S, Subramanian V. An empathy-driven, conversational artificial intelligence agent (Wysa) for digital mental well-being: real-world data evaluation mixed-methods study. JMIR Mhealth Uhealth. Nov 23, 2018;6(11):e12106. [doi: 10.2196/12106] [Medline: 30470676]

- Fulmer R, Joerin A, Gentile B, Lakerink L, Rauws M. Using psychological artificial intelligence (Tess) to relieve symptoms of depression and anxiety: randomized controlled trial. JMIR Ment Health. Dec 13, 2018;5(4):e64. [doi: 10.2196/mental.9782] [Medline: 30545815]

- Murphy M, Templin J. Our story. Replika. 2021. URL: https://replika.ai/about/story [Accessed 2023-08-20]

- Kim H, Yang H, Shin D, Lee JH. Design principles and architecture of a second language learning chatbot. Lang Learn Technol. 2022;26:1-18. URL: https://scholarspace.manoa.hawaii.edu/server/api/core/bitstreams/b3aa08a8-579d-4bf6-b94a-05c2ff67351a/content [Accessed 2024-07-18]

- Wilbourne P, Dexter G, Shoup D. Research driven: Sibly and the transformation of mental health and wellness. Presented at: Proceedings of the 12th EAI International Conference on Pervasive Computing Technologies for Healthcare; May 21-24, 2018:389-391; New York, NY. [doi: 10.1145/3240925.3240932]

- Denecke K, Abd-Alrazaq A, Househ M. Artificial intelligence for chatbots in mental health: opportunities and challenges. In: Househ M, Borycki E, Kushniruk A, editors. Multiple Perspectives on Artificial Intelligence in Healthcare: Opportunities and Challenges. Springer International Publishing; 2021:115-128. [doi: 10.1007/978-3-030-67303-1]

- Omarov B, Zhumanov Z, Gumar A, Kuntunova L. Artificial intelligence enabled mobile chatbot psychologist using AIML and cognitive behavioral therapy. IJACSA. 2023;14(6). [doi: 10.14569/IJACSA.2023.0140616]

- Pham KT, Nabizadeh A, Selek S. Artificial intelligence and chatbots in psychiatry. Psychiatr Q. Mar 2022;93(1):249-253. [doi: 10.1007/s11126-022-09973-8] [Medline: 35212940]

- Abd-Alrazaq AA, Rababeh A, Alajlani M, Bewick BM, Househ M. Effectiveness and safety of using chatbots to improve mental health: systematic review and meta-analysis. J Med Internet Res. Jul 2020;22(7):e16021. [doi: 10.2196/16021] [Medline: 32673216]

- Brocki L, Dyer GC, Gładka A, Chung NC. Deep learning mental health dialogue system. Presented at: 2023 IEEE International Conference on Big Data and Smart Computing (BigComp); Feb 13-16, 2023:395-398. Jeju, Korea.

- Martinengo L, Lum E, Car J. Evaluation of chatbot-delivered interventions for self-management of depression: content analysis. J Affect Disord. Dec 2022;319:598-607. [doi: 10.1016/j.jad.2022.09.028] [Medline: 36150405]

- You Y, Tsai CH, Li Y, Ma F, Heron C, Gui X. Beyond self-diagnosis: how a chatbot-based symptom checker should respond. ACM Trans Comput-Hum Interact. Aug 31, 2023;30(4):1-44. [doi: 10.1145/3589959]

- Ma Z, Mei Y, Su Z. Understanding the benefits and challenges of using large language model-based conversational agents for mental well-being support. AMIA Annu Symp Proc. Jan 11, 2024;2023:1105-1114. [Medline: 38222348]

- Lee J, Lee JG, Lee D. Influence of rapport and social presence with an AI psychotherapy chatbot on users’ self-disclosure. SSRN. Preprint posted online on Mar 22, 2022. [doi: 10.2139/ssrn.4063508]

- Das A, Selek S, Warner AR, et al. Conversational bots for psychotherapy: a study of generative transformer models using domain-specific dialogues. In: Demner-Fushman D, Cohen KB, Ananiadou S, Tsujii J, editors. Proceedings of the 21st Workshop on Biomedical Language Processing. Association for Computational Linguistics; 2022:285-297. [doi: 10.18653/v1/2022.bionlp-1.27]

- Demner-Fushman D, Ananiadou S, Cohen KB, editors. The 22nd Workshop on Biomedical Natural Language Processing and BioNLP Shared Tasks. Association for Computational Linguistics; 2023.

- Heston TF. Evaluating risk progression in mental health chatbots using escalating prompts. medRxiv. Preprint posted online on Sep 12, 2023. [doi: 10.1101/2023.09.10.23295321]

- Weidinger L, Mellor J, Rauh M, et al. Ethical and social risks of harm from language models. arXiv. Preprint posted online on Dec 8, 2021. [doi: 10.48550/arXiv.2112.04359]

- Harrer S. Attention is not all you need: the complicated case of ethically using large language models in healthcare and medicine. eBioMedicine. Apr 2023;90:104512. [doi: 10.1016/j.ebiom.2023.104512] [Medline: 36924620]

- Koutsouleris N, Hauser TU, Skvortsova V, De Choudhury M. From promise to practice: towards the realisation of AI-informed mental health care. Lancet Digital Health. Nov 2022;4(11):e829-e840. [doi: 10.1016/S2589-7500(22)00153-4]

- Sickel AE, Seacat JD, Nabors NA. Mental health stigma update: a review of consequences. Adv Ment Health. Dec 2014;12(3):202-215. [doi: 10.1080/18374905.2014.11081898]

- Alegría M, Green JG, McLaughlin KA, Loder S. Disparities in child and adolescent mental health and mental health services in the U.S. William T. Grant Foundation. Mar 2015. URL: https://wtgrantfoundation.org/wp-content/uploads/2015/09/Disparities-in-Child-and-Adolescent-Mental-Health.pdf [Accessed 2024-07-18]

- Primm AB, Vasquez MJT, Mays RA. The role of public health in addressing racial and ethnic disparities in mental health and mental illness. Prev Chronic Dis. Jan 2010;7(1):A20. [Medline: 20040235]

- Schwartz RC, Blankenship DM. Racial disparities in psychotic disorder diagnosis: a review of empirical literature. World J Psychiatry. Dec 2014;4(4):133. [doi: 10.5498/wjp.v4.i4.133] [Medline: 25540728]

- McGuire TG, Miranda J. New evidence regarding racial and ethnic disparities in mental health: policy implications. Health Aff (Millwood). Mar 2008;27(2):393-403. [doi: 10.1377/hlthaff.27.2.393] [Medline: 18332495]

- Snowden LR, Cheung FK. Use of inpatient mental health services by members of ethnic minority groups. Am Psychol. Mar 1990;45(3):347-355. [doi: 10.1037//0003-066x.45.3.347] [Medline: 2310083]

- Henrich J, Heine SJ, Norenzayan A. Beyond WEIRD: towards a broad-based behavioral science. Behav Brain Sci. Jun 2010;33(2-3):111-135. [doi: 10.1017/S0140525X10000725]

- Lin I, Njoo L, Field A, et al. Gendered mental health stigma in masked language models. arXiv. Preprint posted online on Oct 27, 2022. [doi: 10.48550/arXiv.2210.15144]

- Liu Y, et al. Trustworthy LLMs: a survey and guideline for evaluating large language models’ alignment. arXiv. Preprint posted online on Aug 10, 2023. [doi: 10.48550/arXiv.2308.05374]

- Straw I, Callison-Burch C. Artificial intelligence in mental health and the biases of language based models. PLoS One. Dec 2020;15(12):e0240376. [doi: 10.1371/journal.pone.0240376] [Medline: 33332380]

- Singhal K, Azizi S, Tu T, et al. Large language models encode clinical knowledge. Nature. Aug 2023;620(7972):172-180. [doi: 10.1038/s41586-023-06291-2] [Medline: 37438534]

- Keeling G. Algorithmic bias, generalist models, and clinical medicine. arXiv. Preprint posted online on May 6, 2023. [doi: 10.48550/arXiv.2305.04008]

- Koocher GP, Keith-Spiegel P. Ethics in Psychology and the Mental Health Professions: Standards and Cases. Oxford University Press; 2008.

- Varkey B. Principles of clinical ethics and their application to practice. Med Princ Pract. Feb 2021;30(1):17-28. [doi: 10.1159/000509119] [Medline: 32498071]

- Rajagopal A, Nirmala V, Andrew J, Arun M. Novel AI to avert the mental health crisis in COVID-19: novel application of GPT2 in cognitive behaviour therapy. Research Square. Preprint posted online on Apr 1, 2021. [doi: 10.21203/rs.3.rs-382748/v1]

- Gratch J, Lucas G. Rapport between humans and socially interactive agents. In: Lugrin B, Pelachaud C, Traum D, editors. The Handbook on Socially Interactive Agents: 20 Years of Research on Embodied Conversational Agents, Intelligent Virtual Agents, and Social Robotics Volume 1: Methods, Behavior, Cognition. Association for Computing Machinery; 2021:433-462. [doi: 10.1145/3477322.3477335]

- McDuff D, Czerwinski M. Designing emotionally sentient agents. Commun ACM. Nov 20, 2018;61(12):74-83. [doi: 10.1145/3186591]

- Lundin RM, Berk M, Østergaard SD. ChatGPT on ECT: can large language models support psychoeducation? J ECT. Sep 1, 2023;39(3):130-133. [doi: 10.1097/YCT.0000000000000941] [Medline: 37310145]

- Wang B, Min S, Deng X, et al. Towards understanding chain-of-thought prompting: an empirical study of what matters. arXiv. Preprint posted online on Dec 20, 2022. [doi: 10.48550/arXiv.2212.10001]

- Glenberg AM, Havas D, Becker R, Rinck M. Grounding language in bodily states: the case for emotion. In: Grounding Cognition: The Role of Perception and Action in Memory, Language, and Thinking. Cambridge University Press; 2005:115-128. [doi: 10.1017/CBO9780511499968]

- Zhong Y, Chen YJ, Zhou Y, Lyu YAH, Yin JJ, Gao YJ. The artificial intelligence large language models and neuropsychiatry practice and research ethic. Asian J Psychiatr. Jun 2023;84:103577. [doi: 10.1016/j.ajp.2023.103577] [Medline: 37019020]

- Gilbert S, Harvey H, Melvin T, Vollebregt E, Wicks P. Large language model AI chatbots require approval as medical devices. Nat Med. Oct 2023;29(10):2396-2398. [doi: 10.1038/s41591-023-02412-6] [Medline: 37391665]

- Singh OP. Artificial intelligence in the era of ChatGPT – opportunities and challenges in mental health care. Indian J Psychiatry. Mar 2023;65(3):297-298. [doi: 10.4103/indianjpsychiatry.indianjpsychiatry_112_23] [Medline: 37204980]

- Yang K, Ji S, Zhang T, Xie Q, Kuang Z, Ananiadou S. Towards interpretable mental health analysis with large language models. arXiv. Preprint posted online on Oct 11, 2023. [doi: 10.48550/arXiv.2304.03347]

- Balasubramaniam N, Kauppinen M, Rannisto A, Hiekkanen K, Kujala S. Transparency and explainability of AI systems: from ethical guidelines to requirements. Inf Softw Technol. Jul 2023;159:107197. [doi: 10.1016/j.infsof.2023.107197]

- Wei J, Wang X, Schuurmans D, et al. Chain-of-thought prompting elicits reasoning in large language models. In: Koyejo S, Mohamed S, Agarwal A, editors. Advances in Neural Information Processing Systems. Vol 35. Curran Associates, Inc; 2022:24824-24837.

- Meskó B, Topol EJ. The imperative for regulatory oversight of large language models (or generative AI) in healthcare. NPJ Digit Med. Jul 6, 2023;6(1):120. [doi: 10.1038/s41746-023-00873-0] [Medline: 37414860]

- Ong JCL, Chang SYH, William W, et al. Ethical and regulatory challenges of large language models in medicine. Lancet Digit Health. Jun 2024;6(6):e428-e432. [doi: 10.1016/S2589-7500(24)00061-X] [Medline: 38658283]

- Minssen T, Vayena E, Cohen IG. The challenges for regulating medical use of ChatGPT and other large language models. JAMA. Jul 25, 2023;330(4):315-316. [doi: 10.1001/jama.2023.9651] [Medline: 37410482]

- van Heerden AC, Pozuelo JR, Kohrt BA. Global mental health services and the impact of artificial intelligence-powered large language models. JAMA Psychiatry. Jul 1, 2023;80(7):662-664. [doi: 10.1001/jamapsychiatry.2023.1253] [Medline: 37195694]

- Cabrera J, Loyola MS, Magaña I, Rojas R. Ethical dilemmas, mental health, artificial intelligence, and LLM-based chatbots. In: Bioinformatics and Biomedical Engineering. Springer Nature Switzerland; 2023:313-326. [doi: 10.1007/978-3-031-34960-7_2]

Abbreviations

BERT: Bidirectional Encoder Representations From Transformers

DSM-5: Diagnostic and Statistical Manual of Mental Disorders, Fifth Edition

EBP: evidence-based practice

HIPAA: Health Insurance Portability and Accountability Act

LLM: large language model

Psy-LLM: psychological support with large language model

Lawrence HR, Schneider RA, Rubin SB, Matarić MJ, McDuff DJ, Jones Bell M

The Opportunities and Risks of Large Language Models in Mental Health

JMIR Ment Health 2024;11:e59479

URL: https://mental.jmir.org/2024/1/e59479

doi: 10.2196/59479

© Hannah R Lawrence, Renee A Schneider, Susan B Rubin, Maja J Matarić, Daniel J McDuff, Megan Jones Bell. Originally published in JMIR Mental Health (https://mental.jmir.org), 29.07.2024. This is an open-access article distributed under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work, first published in JMIR Mental Health, is properly cited. The complete bibliographic information, a link to the original publication on https://mental.jmir.org/, as well as this copyright and license information must be included.